taowang

Posts

-


How to configure storage for Typebot on Cloudron

This is very easy to solve! You don't need any S3 storage just to serve images. That's like using a nuke to kill an ant. Here is the solution:

Step 1: Create a repository on your GitHub account.

Step 2: Upload an image (e.g. bot avatar) to your repository.

Step 3: Copy the link to your image, then paste it into jsDelivr (select "convert from GitHub").

Step 4: Copy the jsDelivr link into your Typebot.

Now, you have a world-class CDN provider serving your image FOR FREE!!!

This link is permanent unless you purge it! -
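The link conversion in Step 3 can also be done by hand. Below is a minimal sketch, assuming the GitHub link has the usual `https://github.com/<user>/<repo>/blob/<branch>/<file>` shape and the branch name contains no slash. The function name and the example repository are made up for illustration.

```python
from urllib.parse import urlsplit


def github_to_jsdelivr(github_url: str) -> str:
    """Convert a GitHub file link into the matching jsDelivr CDN link."""
    parts = urlsplit(github_url)
    if parts.netloc != "github.com":
        raise ValueError("expected a github.com URL")
    segments = parts.path.strip("/").split("/")
    # Expected layout: <user>/<repo>/blob/<branch-or-tag>/<path to file>
    if len(segments) < 5 or segments[2] != "blob":
        raise ValueError("expected <user>/<repo>/blob/<ref>/<file>")
    user, repo, _, ref = segments[:4]
    file_path = "/".join(segments[4:])
    return f"https://cdn.jsdelivr.net/gh/{user}/{repo}@{ref}/{file_path}"


# Hypothetical repository and file name, used only as an example.
print(github_to_jsdelivr(
    "https://github.com/example-user/typebot-assets/blob/main/avatar.png"
))
```

The printed link is what you would paste into Typebot.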

Infrastructure Comparisons (Server, Storage, CDN, DNS,

Here is a spreadsheet (comparing infrastructure providers) I found on Reddit.

link text

IMO (my current and near future infrastructure decision):

- Best VPS Provider: Hetzner Cloud (Shared & Dedicated)

- Best Bare Metal Provider: Hetzner Dedicated (Server Auction > Root Server)

- Best DNS Provider: Cloudflare (and Porkbun)

- Best CDN Provider: Cloudflare (and Bunny)

- Best PaaS Provider: Cloudron (and Coolify)

- Best S3 Compatible Storage Provider: Backblaze B2 (and Cloudflare R2, iDrive E2)

- Best non-S3 Storage Provider: Hetzner Storage Box

-

FlowiseAI Deployment Guide (Tested)

Update: The Easiest Approach to Deploy Flowise

I just figured out an approach that is much better than using a Dockerfile (as described above).

If you are using Hugging Face, then you must use a Dockerfile.

However, using a Dockerfile means the server will build the image from scratch.

This requires at least 2 vCPU and 16GB of RAM.

Hugging Face offers 2 vCPU and 16GB of RAM for free.

But if you are using Coolify, then you have to scale up your server (at least for the build process).

A better way to deploy Flowise is to use a prebuilt image.

Easypanel is actually using this way to deploy Flowise.

The image it is using: flowiseai/flowise:latest

But if you are using Coolify, this image does not work very well. I tried many, many times.

I recommend using another image built by Elestio: elestio/flowiseai:latest

Just copy "elestio/flowiseai:latest", paste it into Coolify, expose a PORT (such as 3000 or 8000), and hit Deploy!

-

PocketBase youtube tutorial

I just watched a YouTube tutorial on PocketBase.

This is awesome. Thank you! -

FlowiseAI Deployment Guide (Tested)

Update

If you want to connect FlowiseAI with Supabase, which supports PostgreSQL, here is what you need to do:

Step 1: Create a project on Supabase.

Step 2: Click "connect" on the top right, and copy the URL, which looks like this:

postgres://postgres.tmunbfajkfjakfdjqlaaepjv:[YOUR-PASSWORD]@aws-0-eu-east-1.pooler.supabase.com:5432/postgres

Step 3: Take this URL apart:

DATABASE_TYPE=postgres

DATABASE_USER=postgres.tmunbfajkfjakfdjqlaaepjv

DATABASE_PASSWORD=Your Password

DATABASE_HOST=aws-0-eu-east-1.pooler.supabase.com

DATABASE_PORT=5432

DATABASE_NAME=postgres

Variables below depend on your cloud provider. See last update.

DATABASE_SSL=false

PGSSLMODE=disable

I struggled with connecting FlowiseAI with PostgreSQL and Supabase for two days.

I succeeded with PostgreSQL yesterday on my own after hours of trial and error.

And today GPT-4o just launched. I asked her for help with Supabase.

And I got it working in 1 min. This is insane!!!!

For non-technical people like me, this is a wonderful era!!!!! -
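Taking the URL apart (Step 3 above) can also be scripted. This is only a sketch using Python's standard `urllib.parse`. The function name and the example project reference and password are made up. Replace the `[YOUR-PASSWORD]` placeholder with your real password before parsing, because square brackets in the URL can confuse the parser.

```python
from urllib.parse import unquote, urlsplit


def database_url_to_env(url: str) -> dict[str, str]:
    """Split a postgres:// connection URL into Flowise DATABASE_* variables."""
    parts = urlsplit(url)
    return {
        "DATABASE_TYPE": "postgres",
        "DATABASE_USER": unquote(parts.username or ""),
        # Passwords may be percent-encoded inside a URL, so decode them.
        "DATABASE_PASSWORD": unquote(parts.password or ""),
        "DATABASE_HOST": parts.hostname or "",
        "DATABASE_PORT": str(parts.port or 5432),
        "DATABASE_NAME": parts.path.lstrip("/"),
    }


# Made-up project reference and password, for illustration only.
example_url = (
    "postgres://postgres.exampleref:example-password"
    "@aws-0-eu-east-1.pooler.supabase.com:5432/postgres"
)
for name, value in database_url_to_env(example_url).items():
    print(f"{name}={value}")
```

The SSL variables are not part of the URL, so they still have to be set by hand.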

FlowiseAI Deployment Guide (Tested)

Update

Some cloud providers might not support internal SSL connections between FlowiseAI and PostgreSQL.

For example, Hetzner. I cannot follow the official documentation and set:

- DATABASE_SSL=true

- PGSSLMODE=require

Instead, I have to set:

- DATABASE_SSL=false

- PGSSLMODE=disable

Your cloud provider may be different, but this update may save you some painful trial and error.
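One way to cut down the trial and error is to ask the PostgreSQL server directly whether it accepts SSL. The sketch below sends the protocol's standard `SSLRequest` message and reads the one-byte answer (`S` for yes, `N` for no). The function names are my own, and the result is only a hint: a server that accepts SSL does not guarantee that every Flowise setup will connect with it.

```python
import socket
import struct

# Fixed request code defined by the PostgreSQL wire protocol for SSLRequest.
SSL_REQUEST_CODE = 80877103


def build_ssl_request() -> bytes:
    """SSLRequest: int32 message length (8) followed by the int32 request code."""
    return struct.pack("!ii", 8, SSL_REQUEST_CODE)


def server_accepts_ssl(host: str, port: int = 5432, timeout: float = 5.0) -> bool:
    """Ask a PostgreSQL server whether it is willing to switch to SSL."""
    with socket.create_connection((host, port), timeout=timeout) as conn:
        conn.sendall(build_ssl_request())
        reply = conn.recv(1)
    if reply == b"S":
        return True
    if reply == b"N":
        return False
    raise RuntimeError(f"unexpected reply from server: {reply!r}")


def suggested_settings(accepts_ssl: bool) -> dict[str, str]:
    """Map the probe result to the two variables discussed above."""
    if accepts_ssl:
        return {"DATABASE_SSL": "true", "PGSSLMODE": "require"}
    return {"DATABASE_SSL": "false", "PGSSLMODE": "disable"}


# Usage (the host name is a placeholder for your own database host):
# print(suggested_settings(server_accepts_ssl("my-postgres-host")))
```

Run it from a machine or container that can reach the database over the same internal network that Flowise uses.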

-

FlowiseAI Deployment Guide (Tested)

Update

The SFTP backup approach is not perfect, because it is not designed with version control.

I figured out a much better solution for the deployment, update, backup and restore process.

- Install PostgreSQL on Coolify.

- Install FlowiseAI on Coolify with the Dockerfile from the official documentation.

- Set Persistent Storage (destination path aka volume mount) as /app/storage.

- Environment Variables (tricky part):

Give these variables the same path as your persistent storage (volume):

APIKEY_PATH=/app/storage

LOG_PATH=/app/storage

SECRETKEY_PATH=/app/storage

BLOB_STORAGE_PATH=/app/storage

Add these variables:

DATABASE_TYPE=postgres

DATABASE_PORT=5432

DATABASE_HOST=

DATABASE_NAME=

DATABASE_USER=

DATABASE_PASSWORD=

Note: You do not need DATABASE_PATH any more.

You can now back up your PostgreSQL database to your S3 storage.
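Since the environment variables are the tricky part, here is a small sketch that checks a set of variables against the rules above before you paste them into Coolify. The function name and the example values (host, database name, user, password) are placeholders I made up.

```python
STORAGE_PATH_VARS = ["APIKEY_PATH", "LOG_PATH", "SECRETKEY_PATH", "BLOB_STORAGE_PATH"]
REQUIRED_DATABASE_VARS = [
    "DATABASE_TYPE", "DATABASE_PORT", "DATABASE_HOST",
    "DATABASE_NAME", "DATABASE_USER", "DATABASE_PASSWORD",
]


def check_flowise_env(env: dict[str, str], mount: str = "/app/storage") -> list[str]:
    """Return a list of problems; an empty list means the variables look consistent."""
    problems = []
    for name in STORAGE_PATH_VARS:
        if env.get(name) != mount:
            problems.append(f"{name} should be {mount}")
    for name in REQUIRED_DATABASE_VARS:
        if not env.get(name):
            problems.append(f"{name} is missing or empty")
    if "DATABASE_PATH" in env:
        problems.append("DATABASE_PATH is not needed with PostgreSQL")
    return problems


# Placeholder values, for illustration only.
example_env = {
    "APIKEY_PATH": "/app/storage",
    "LOG_PATH": "/app/storage",
    "SECRETKEY_PATH": "/app/storage",
    "BLOB_STORAGE_PATH": "/app/storage",
    "DATABASE_TYPE": "postgres",
    "DATABASE_PORT": "5432",
    "DATABASE_HOST": "my-postgres-host",
    "DATABASE_NAME": "flowise",
    "DATABASE_USER": "flowise",
    "DATABASE_PASSWORD": "change-me",
}
print(check_flowise_env(example_env) or "looks consistent")
```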

-

FlowiseAI Deployment Guide (Tested)

I really hope FlowiseAI will be available on Cloudron, as Cloudron is by far the best PaaS I have tried.

And there are other AI-related apps that could be added to Cloudron. For example, vector stores for building RAG apps.

Here is a list of them with a detailed comparison:

PGvector is supported by Supabase, which is another great open-source app. -

FlowiseAI Deployment Guide (Tested)

For those of you who wish to deploy FlowiseAI, here are 3 cost-effective approaches I have tested:

Approach 1: Hugging Face

Just follow this tutorial: link text

Hugging Face is much cheaper than Render, Railway and Replit.

It is free to deploy the app on Hugging Face. You get 2 vCPU and 16GB of RAM for free!!

But you need to pay for the persistent storage ($5 per month for 20GB).

Note: This approach does not have a backup and restore feature.

Approach 2: Easypanel

Flowise is available on Easypanel as a one-click app.

The free version of Easypanel does not have a backup and restore feature.

But I figured out a workaround (see the end).

Approach 3: Coolify

Flowise is not available as a one-click app on Coolify, but you can install it with a Dockerfile.

It is very similar to deploying on Hugging Face: link text

Step 1: Copy the Dockerfile (from the link above) to Coolify.

Step 2: Add Environment Variables. I compiled a list of environment variables, so you can just copy and edit it based on your use case.

FLOWISE_USERNAME=

FLOWISE_PASSWORD=

CORS_ORIGINS=

IFRAME_ORIGINS=

LANGCHAIN_TRACING_V2=true

LANGCHAIN_ENDPOINT=

LANGCHAIN_API_KEY=

LANGCHAIN_PROJECT=

TOOL_FUNCTION_BUILTIN_DEP=*

TOOL_FUNCTION_EXTERNAL_DEP=axios,moment

STORAGE_TYPE=s3

S3_STORAGE_BUCKET_NAME=

S3_STORAGE_ACCESS_KEY_ID=

S3_STORAGE_SECRET_ACCESS_KEY=

S3_STORAGE_REGION=

OVERRIDE_DATABASE=false

NUMBER_OF_PROXIES=1

FLOWISE_SECRETKEY_OVERWRITE=

DATABASE_PATH=/app/storage

APIKEY_PATH=/app/storage

LOG_PATH=/app/storage

SECRETKEY_PATH=/app/storage

BLOB_STORAGE_PATH=/app/storage

Step 3: Set the Persistent Storage (destination path) as /app/storage

Step 4: Enable health checks

FlowiseAI uses SQLite as its database by default, but Coolify currently does not have a backup method for SQLite.

Backup and restore workaround for Coolify and Easypanel

Step 1: Install an SFTP tool (Cyberduck or FileZilla) on your PC.

Step 2: Use SFTP to log into your server.

Step 3: Create a folder under the root folder, and name it flowise.

Step 4: Go to your Coolify or Easypanel dashboard, and create a bind mount (do not use a volume mount).

Step 5: Create a folder (named flowise) on your local PC.

Step 6: Use Cyberduck or FileZilla to sync the bind mount database (.sqlite file) to your local folder.

Step 7: If you want to back up to S3 compatible storage, use Cyberduck to connect to your bucket. -
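The sync in Step 6 overwrites the local copy each time, so you only ever have the latest state. A possible addition is to take a timestamped snapshot of the synced file after each sync. The sketch below uses the backup function of Python's built-in `sqlite3` module. The function name, the file name `database.sqlite` and the folder names are assumptions, so adjust them to whatever your local flowise folder actually contains.

```python
import sqlite3
from datetime import datetime
from pathlib import Path


def snapshot_sqlite(source: Path, backup_dir: Path) -> Path:
    """Copy a SQLite database into backup_dir under a timestamped file name."""
    if not source.is_file():
        raise FileNotFoundError(source)
    backup_dir.mkdir(parents=True, exist_ok=True)
    stamp = datetime.now().strftime("%Y%m%d-%H%M%S")
    target = backup_dir / f"{source.stem}-{stamp}{source.suffix}"
    src = sqlite3.connect(source)
    dst = sqlite3.connect(target)
    try:
        # Connection.backup copies the whole database in a consistent state.
        src.backup(dst)
    finally:
        dst.close()
        src.close()
    return target


# Usage (file and folder names are assumptions):
# snapshot_sqlite(Path("flowise/database.sqlite"), Path("flowise/backups"))
```

Each run leaves one more dated copy in the backups folder, which you can then upload to your bucket as in Step 7.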

Flowise - UI for LangChain

This is the Dockerfile: https://docs.flowiseai.com/configuration/deployment/hugging-face

-

Flowise - UI for LangChain

When can we have Flowise? This tool is great!

-

n8n Content Security Policy not working

I built a chatbot with n8n. I want to embed it on my own website and prevent other websites from embedding it.

I set up CORS both inside n8n and on Cloudron, but I can still embed it on W3Schools.

Did I make any mistake?

-

apt update and apt upgrade

Hi, just wondering if we should run apt update and upgrade manually?

I ran these commands right before installing Cloudron.

Should I run these commands to update and upgrade manually in the future?

Or will Cloudron do this automatically?

I searched the documentation, but this information is not mentioned. -

Cost of Hosting Videos

What is Bunny's video privacy like?

I am learning the privacy settings of these video hosting platforms, since the videos are paid courses.

With Vimeo, there is a setting called "Hide from Vimeo" that allows you to embed videos on your own site. Your users cannot share your video link because the Vimeo source link will not play it (it is hidden).

But PeerTube does not have this feature. The only way to protect your video is to set the privacy to "password protected". Your users must fill in the password in order to watch it. But they can also easily leak the link and the password, so you have to change the password frequently. More dangerously, users can right-click on the embedded video and download it. This is ridiculous for paid courses.

-

minio and Hetzner Storage Box

That works with object storage that meets 2 requirements: https://docs.joinpeertube.org/maintain/remote-storage

- S3 compatible

- Supports virtual hosting of buckets

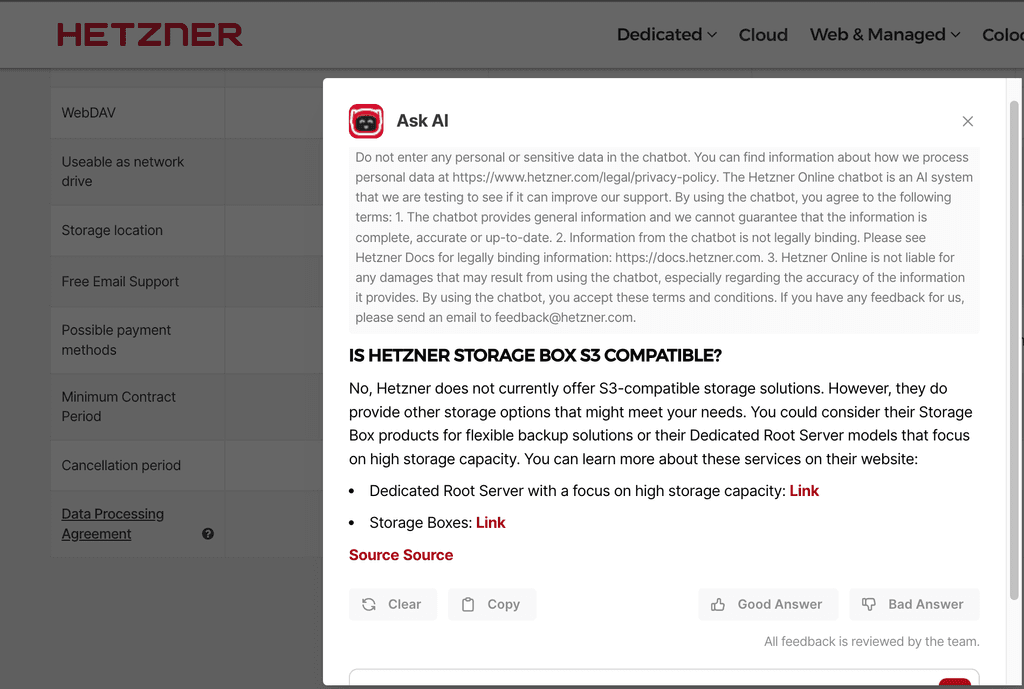
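For anyone unsure what "virtual hosting of buckets" means: it is about where the bucket name sits in the URL. Here is a small illustration, with a made-up endpoint, bucket and file name. In path-style addressing the bucket is part of the path; in virtual-hosted-style addressing the bucket becomes a subdomain of the endpoint.

```python
def path_style_url(endpoint: str, bucket: str, key: str) -> str:
    """Bucket name appears as the first path segment."""
    return f"https://{endpoint}/{bucket}/{key}"


def virtual_hosted_url(endpoint: str, bucket: str, key: str) -> str:
    """Bucket name appears as a subdomain of the endpoint."""
    return f"https://{bucket}.{endpoint}/{key}"


# Made-up endpoint, bucket and object key, for illustration only.
endpoint, bucket, key = "s3.example.com", "peertube-videos", "videos/intro.mp4"
print(path_style_url(endpoint, bucket, key))
print(virtual_hosted_url(endpoint, bucket, key))
```

A provider that only answers on the first form does not meet the second requirement.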

I thought Hetzner Storage Box was not S3 compatible. Is it?

-

Cost of Hosting Videos

Cloudflare R2 and Backblaze B2 do not charge egress fees.

I am considering PeerTube + Backblaze B2 + Cloudflare CDN.

But I am not sure if the free tier of Cloudflare CDN supports video streaming.

I read some Reddit and Hacker News posts that say video streaming is not supported by Cloudflare CDN.

-

Cost of Hosting Videos

PeerTube + Hetzner Storage Box may not work according to this thread: https://forum.cloudron.io/topic/11521/minio-and-hetzner-storage-box/14

I am wondering if Emby supports video embedding, since I need to embed videos on Moodle.

-

minio and Hetzner Storage Box

Here is my understanding for now (correct me if I am wrong):

- In order to stream videos, I need to use "Data Directory" rather than "Mounts".

- Only volumes with Mount Type EXT4 and NFS can be used as Data Directory.

- Hetzner Storage Box does not support either EXT4 or NFS.

I was confusing Data Directory with Mounts.

I thought I could use Mounts for video streaming purposes.