A pity that this does not show up in the /srv/status endpoint as well.

mononym

Posts

-

Discourse 2026.4.0 does not start Sidekiq

The admin dashboard on our instance shows "Translation missing: en.dashboard.problem.sidekiq_check", which may corroborate the findings.

-

What's coming in 9.2

As this is about email, I wonder if there's a way to have a chatmail relay, a kind of "batteries included" option for the Cloudron email addresses. But I guess that's out of scope. I just came across that project again because it received a larger grant: https://www.sovereign.tech/tech/chatmail

cf. https://forum.cloudron.io/topic/13952/chatmail

-

LAUTI - Open Source Community Calendar for events, groups and places

I found a Docker compose.yaml that might be helpful.

-

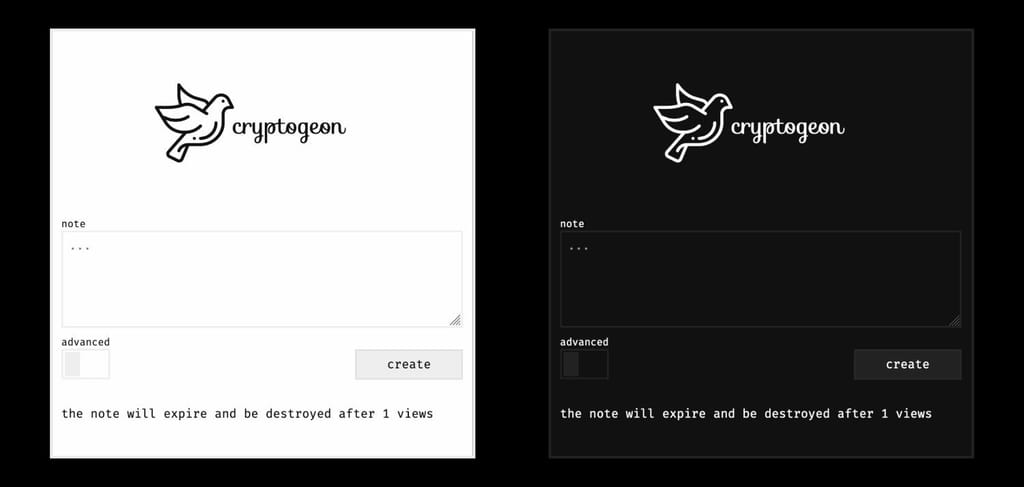

Cryptgeon - note / file sharing service written in rust & svelte.

- Main Page: https://cryptgeon.org/

- Git: https://github.com/cupcakearmy/cryptgeon

- Licence: MIT

- Dockerfile: Yes

- Demo: https://cryptgeon.org/

- Summary: Easily send fully encrypted, secure notes or files with one click. Just create a note and share the link.

- Alternative to: jirafeau, microbin, privatebin

-

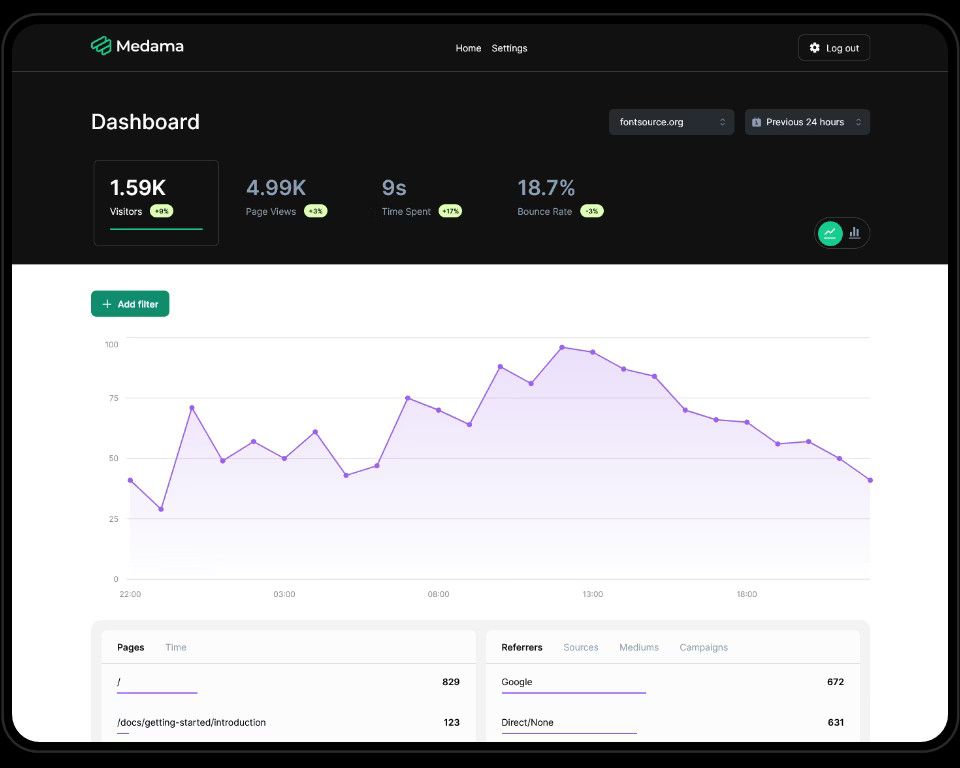

Medama - Self-hostable, privacy-focused website analytics

- Main Page: https://oss.medama.io/

- Git: https://github.com/medama-io/medama

- Licence: Apache License 2.0 + MIT

- Dockerfile: Yes

- Demo: https://demo.medama.io/

- Summary: Medama Analytics is an open-source project dedicated to providing self-hostable, cookie-free website analytics. With a lightweight tracker of less than 1KB, it aims to offer useful analytics while prioritising user privacy.

- Alternative to link: https://alternativeto.net/software/medama/

-

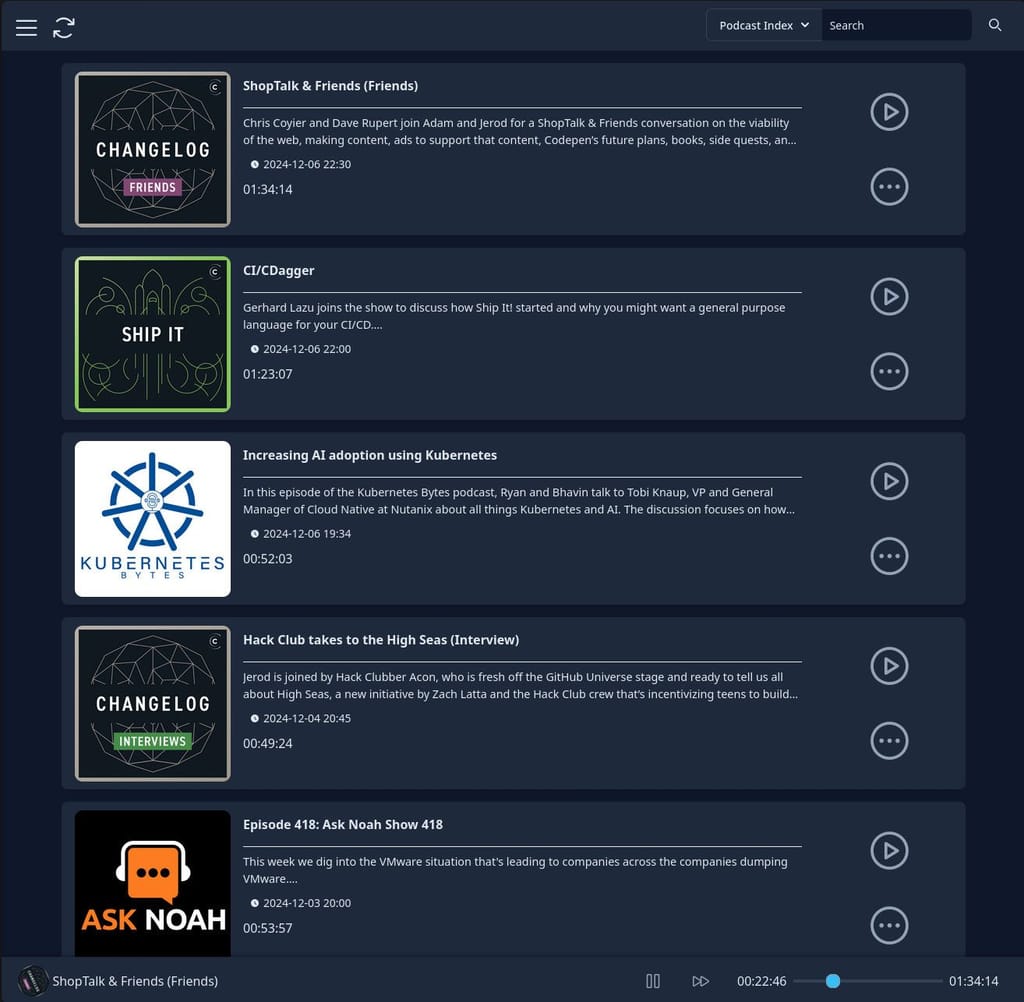

Pinepods - Your Complete Podcast Ecosystem

- Main Page: https://www.pinepods.online/

- Git: https://github.com/madeofpendletonwool/PinePods

- Licence: GPL-3.0

- Dockerfile: Yes

- Demo: https://try.pinepods.online/

- Summary: Lightning-fast Rust-powered podcast server with seamless sync across all you and your family's devices. Everything you need for podcasts, nothing you don't.

- Alternative to link: https://alternativeto.net/software/pinepods/about/

- Screenshots: more here

-

Registration not disabled

The oPodSync documentation states that registration is disabled after the first account is created, but that does not seem to be the case for the Cloudron package.

The env.sh content

    export TITLE="My oPodSync Server"
    #Enable below to open up registration after initial account creation
    #export ENABLE_SUBSCRIPTIONS=1

reflects the settings, but I was still able to register accounts.

-

App documentation link

Hello. The link to the documentation page in the app dropdown menu is broken: it is currently https://docs.cloudron.io/apps/opodsync but should be https://docs.cloudron.io/packages/opodsync instead.

-

Holos Relay Server - A Mobile-First Federated Social Network

- Main Page: https://holos.social/

- Git: https://codeberg.org/tom79/Holos-Relay-Server

- Licence: AGPL-3.0

- Dockerfile: Yes

- Summary: Connect to the fediverse through a relay server designed specifically for mobile devices. Join a decentralized network where you control your data, your privacy, and your social experience.

-

Wikibase - collaborative knowledge bases

- Main Page: https://wikiba.se/

- Git: https://github.com/wmde/wikibase-release-pipeline

- Licence: BSD-3-Clause

- Dockerfile: Yes (?)

- Demo: https://wikiba.se/showcase/

- Summary: Wikibase is an open-source software suite for creating collaborative knowledge bases, opening the door to the Linked Open Data web.

- Notes: currently looking into SKOS and other research software.

- Alternative to: Omeka S

- Screenshots: cf. Demo

-

Element Server Suite

Today's blog post by Element: "Introducing the ESS Community migration tool"

https://element.io/blog/introducing-the-ess-community-migration-tool/

-

Looking for an App?

Hello. That's very kind of you. As a start, maybe an easy one. I was recently in touch with the developer of ControlR. He was very optimistic about the feasibility of getting it on Cloudron via GitHub Actions from his repo. The project is taking off right now, so he has a lot on his plate. Maybe you could have a look?

https://forum.cloudron.io/topic/14995/controlr-remote-control-and-remote-access

-

How to create Sender from outbound mail ?

@james said in How to create Sender from outbound mail ?:

Currently, there is an issue with the Keila shared mailer admin ui.

I saw that error after I started this thread. My guess was that it wasn't implemented yet as the app is marked as unstable.

@james said in How to create Sender from outbound mail ?:

This will give you all the details.

That works perfectly, thanks !

-

git-pages - The final word in static site hosting

- Main Page: https://git-pages.org/

- Git: https://codeberg.org/git-pages/git-pages

- Licence: 0-clause BSD

- Dockerfile: Yes -> https://git-pages.org/running-a-server/#via-docker

- Demo: https://codeberg.page/

- Summary: Deploy your website with a single HTTP request or a git push. git-pages is an application that serves static websites from either filesystem or an S3 compatible object store and updates them when directed by the site author through an HTTP request. The server scales linearly from a personal instance running on a Raspberry Pi to a highly available, geographically distributed cluster powering Grebedoc. It is written in Go and does not depend on any other services, although most installations will use a reverse proxy like Caddy or Nginx to serve sites using the https:// protocol.

- Notes: Stable and able to scale, as the Codeberg Pages backend switches to git-pages (https://docs.codeberg.org/codeberg-pages/).

- Alternative to: GitHub Pages, Netlify

-

How to create Sender from outbound mail ?

Hello.

I think setting up Keila is straightforward, but I can't figure out how to add the outbound mail as a Sender. The main question is: can I simply use the outbound email provided by Cloudron for the app, or do I need to set up a Cloudron mailbox for the app?

In the Cloudron app dashboard of Keila, the email tab shows an outbound email. I set it to keila@domain.net and saved. In Keila, I need to set up a Sender: https://www.keila.io/docs/senders. I attempted to follow the answer here: https://forum.cloudron.io/topic/3247/need-help-with-the-smtp-settings/3?_=1771073978666

- Server = my.domain.net

- Username = keila@domain.net

- Password = my domain cloudron password

- Connection security = STARTTLS

- Port = 587

The above settings fail to send the email:

Feb 14 14:19:28 2026-02-14T13:19:28.248 [warning] Failed sending email to valid_email@mailbox.org for campaign nmc_xogqjL2z: {:no_more_hosts, {:permanent_failure, ~c"109.77.148.140", :auth_failed}}

109.77.148.140 = IP of the Cloudron server

Do you think I need to create a distinct mailbox in Cloudron and connect it to Keila instead?
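To tell whether the `:auth_failed` comes from the credentials themselves rather than from Keila, the same STARTTLS login on port 587 can be tried from a small script. This is only a minimal sketch using Python's standard `smtplib`; the function name `check_smtp_login` and the `smtp_factory` parameter are hypothetical helpers introduced here for illustration, and the host, username and password are the placeholders from this post, not known-good values.

```python
import smtplib
import ssl


def check_smtp_login(host, port, username, password,
                     smtp_factory=smtplib.SMTP, timeout=10):
    """Attempt STARTTLS followed by AUTH and report the outcome as a short string.

    smtp_factory is a hypothetical hook so the check can be exercised
    without a real server; by default the real smtplib.SMTP client is used.
    """
    try:
        with smtp_factory(host, port, timeout=timeout) as client:
            client.ehlo()
            # Upgrade the plain connection to TLS before sending credentials.
            client.starttls(context=ssl.create_default_context())
            client.ehlo()
            client.login(username, password)
        return "ok"
    except smtplib.SMTPAuthenticationError:
        # The server rejected the username/password combination.
        return "auth_failed"
    except (smtplib.SMTPException, OSError) as exc:
        # Anything else: connection refused, TLS problems, timeouts, ...
        return f"error: {exc.__class__.__name__}"


# Example call with the placeholder values from the post (assumptions):
# print(check_smtp_login("my.domain.net", 587, "keila@domain.net", "password"))
```

If this also returns `auth_failed`, the username/password pair is being rejected by the mail server itself, independent of the Keila Sender configuration.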

Thanks

-

Best practices for email security?

The following app is exactly what I was looking for to get old emails off the mail server (and reduce the number of possibly sensitive attachments just lying around): Bichon Email Archiving System https://forum.cloudron.io/topic/15066/bichon-lightweight-rust-based-email-archiving-system-with-full-text-search

P.S.: Keeping a tidy inbox can be rewarding as well: https://posteo.de/en/blog/new-posteo-doubles-storage-space-to-4gb

-

Element Server Suite

Mention and live install + demo of ESS during a FOSDEM talk: https://fosdem.org/2026/schedule/event/BRRQYU-sustainable-matrix-at-element/

-

Element Server Suite

That's great! Thank you very much. I would like to see if my wish from https://forum.cloudron.io/post/119520 could already be implemented in the package? No pressure.

-

OIDC customization settings not persistent

Yes, this makes perfect sense to me. That's also why I only want to change two specific parameters (localpart_template and display_name_template) and not the whole OIDC setup, which should be immutable, so to speak. In my case, I also wanted to ensure that email_template is kept in sync with the Cloudron account email, only giving freedom to set a desired handle and display name (although the latter can be modified afterwards by the user).

P.S.: I have not yet tested whether other settings are persistent, as I intend to set a retention policy for Synapse as well.