Sorry, I didn't answer your original question directly...

Real-world server specs for OpenWebUI itself are very low. My Cloudron OpenWebUI app instance fits into a few GB of storage, barely uses any CPU on its own, and runs in well under 1 GB of RAM.

But if you want to use the embedded Ollama system to interact with locally hosted LLMs, your server needs to support the actual LLMs as well as OpenWebUI. So you need all of this:

- Enough disk storage for all the models you want to use.

  - Individual models you can typically run locally for a reasonable cost range from 2-3 GB (e.g. for a 3B model) up to 40-50 GB (e.g. for a 70B model). You might want to store multiple models.

- Enough RAM (or VRAM) to fully load the model you want to use into memory, separately for each concurrent chat.

  - As a rough calculation, you need the size of the model file plus some room for chat context, depending on how much you want it to know/remember during chats, e.g. 3-6 GB per chat for 3-8B models, more for the bigger ones.

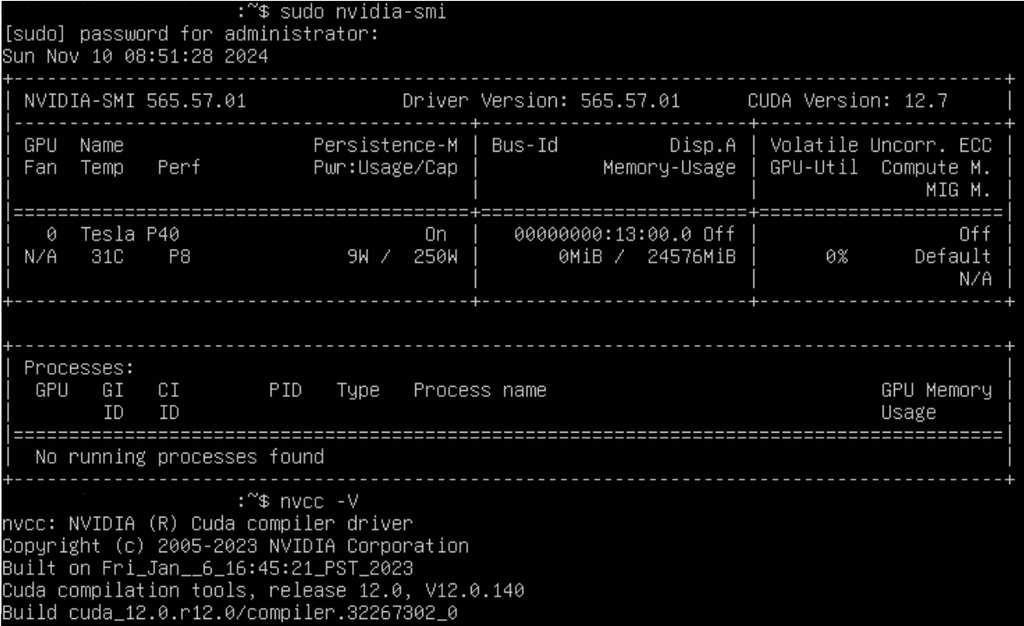

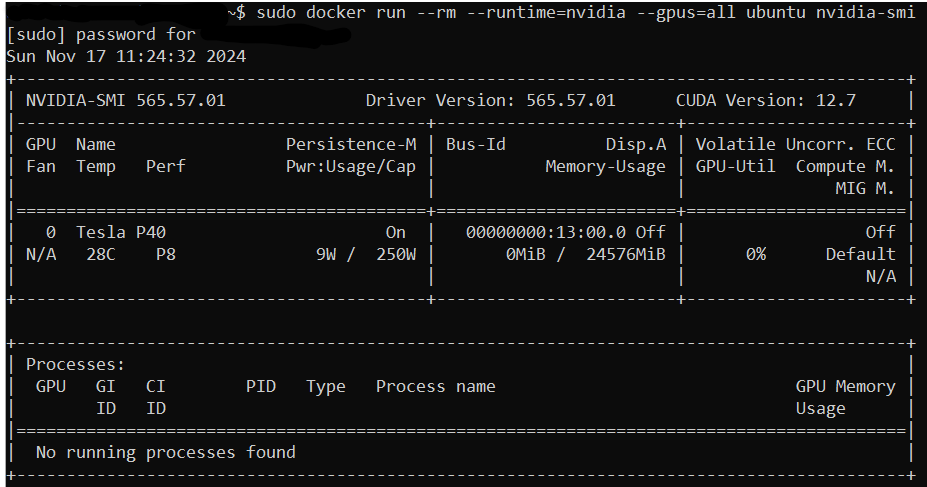

- Enough CPU (or GPU) compute power to run the model fast.

  - For tiny (3-8B) models, expect 1-2 minutes per chat response on a typical CPU+RAM system (and don't imagine you can use bigger models at all), or seconds per chat response using GPU+VRAM. (Note: You might do better than that on the very latest CPUs, but GPU+VRAM is still going to be hundreds of times faster.)

- If you're using CPU+RAM (as opposed to GPU+VRAM):

  - You'll find that your disk I/O will be hammered too (particularly during model loading), so you'll want very fast SSDs.

  - Expect your CPU and your RAM to be fully consumed during inference (chats), so don't expect to be running other apps on your server at the same time.
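To put rough numbers on the storage and memory points above, here's a minimal back-of-the-envelope sketch. It just encodes the rule of thumb from this post (model file size plus some room for context, multiplied per concurrent chat), so treat the result as a pessimistic estimate, not a measurement. The function names and the default context figure are my own assumptions for illustration.

```python
def estimate_disk_gb(model_file_sizes_gb):
    """Total disk needed to store a set of model files (sizes in GB)."""
    return sum(model_file_sizes_gb)


def estimate_memory_gb(model_file_gb, context_overhead_gb=1.0, concurrent_chats=1):
    """Rough RAM/VRAM estimate following the rule of thumb in this post.

    model_file_gb:       size of the model file on disk
    context_overhead_gb: extra room for chat context (assumed figure, tune it)
    concurrent_chats:    number of chats running at the same time
    """
    if model_file_gb <= 0 or context_overhead_gb < 0 or concurrent_chats < 1:
        raise ValueError("sizes must be positive and at least one chat is required")
    # Per-chat footprint is model plus context; multiply by concurrent chats.
    return (model_file_gb + context_overhead_gb) * concurrent_chats


if __name__ == "__main__":
    # Hypothetical example: a ~2 GB 3B model, 1 GB of context room, one chat.
    print(estimate_memory_gb(2.0, 1.0, 1), "GB of RAM/VRAM")
    # Hypothetical example: storing a small, a medium and a large model.
    print(estimate_disk_gb([2.0, 4.5, 40.0]), "GB of disk")
```

Plug in the actual file sizes of the models you plan to pull, and compare the result against what your VPS plan really gives you before you commit to it.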

In short, I'm not sure that a VPS-hosted OpenWebUI instance running only on CPU+RAM is ever going to be useful for self-hosted LLMs.

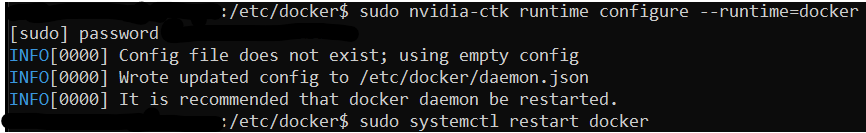

Unfortunately, even if you have a GPU on your virtual server, even if you get under the hood and install your GPU drivers on the Ubuntu operating system, currently Cloudron's OpenWebUI app installation won't use your GPU. So on Cloudron you're stuck with CPU+RAM.

But that is not as gloomy as it sounds... To answer your next question...

@timconsidine said in Real-world minimum server specs for OpenWebUI:

> Using “out the box” with local default model.
>
> Is there any point to the app to use with publicly hosted model ?

Yes, there is a point. Your use cases for handling data privately are more limited, certainly, but there are some outstanding advantages to doing this, particularly on Cloudron.

- You're storing your data (including chats and RAG data) on a system you control.

  - Although you're still sending your chats and data within them to the public model, you at least control what you can do with the storage of your chats and data.

  - You can download, backup, and always access your chats, or move them to a different OpenWebUI server, even if your connection to the public model is severed.

- You can interact with multiple public and private models via a single interface, even within each chat. None of the public platforms let you talk to the others.

  - E.g. OpenWebUI has some pretty cool features to let you split chat threads among different models, and let models "compete" with each other using "arena" chats. We've found this to be invaluable in our business because a lot of optimizing AI usage is about experimentation and finding the best tool for the task at hand.

- You can install and manage your own prompt libraries, system prompts, workspaces (like "GPTs" in ChatGPT), coded tools and functions (OpenWebUI has some cool integrated Python coding capabilities in this area), in a standard way across every LLM that you interact with, and without storing your code and extended data in the public cloud.

- You can brand your chat UI according to your company or client, and modify/integrate it in other ways. OpenWebUI is flexible and open source.

- You can centrally connect to other apps that you self-host for various workloads including data access and agent/workflow automation without needing to upload and manage all that stuff in public systems.

  - E.g. some apps running on Cloudron that can give your AI interactions super powers include:

    - N8N for workflow automation

    - Nextcloud for data storage and management

    - Chat and notification apps

    - BI apps like Baserow and Grafana

- You can manage and segregate branded multi-user access to different chats and different AIs, either in a single OpenWebUI instance, or (since app management on Cloudron is so bloody easy), different instances on different URLs.

- In the future, when you switch to self-hosted LLMs or other integrations, there's little to no migration. You just switch off the public API connectors and redirect them to your own models and tools, because you managed your data and chats and code integrations locally from the outset.

- And more. I'm sure I didn't think of everything.
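To illustrate the "little to no migration" point: in OpenWebUI you change connections in the admin settings, not in code, but the underlying idea can be sketched in a few lines. Public providers and Ollama both expose OpenAI-style chat endpoints, so the same request works against either one and only the base URL (and API key) changes. This is a rough sketch under assumptions: the base URLs assume a default local Ollama install and the public OpenAI API, the model names and key are placeholders, and `build_chat_request` is a hypothetical helper of mine, not part of OpenWebUI.

```python
import json
from urllib.request import Request


def build_chat_request(base_url, model, user_message, api_key=None):
    """Build (but do not send) an OpenAI-style chat completion request."""
    url = base_url.rstrip("/") + "/chat/completions"
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Public providers need a key; a local Ollama instance typically does not.
        headers["Authorization"] = f"Bearer {api_key}"
    return Request(url, data=json.dumps(payload).encode("utf-8"),
                   headers=headers, method="POST")


# Today: a public model (placeholder key and model name).
public_req = build_chat_request("https://api.openai.com/v1", "gpt-4o-mini",
                                "Hello", api_key="sk-example")

# Later: the same call pointed at a self-hosted Ollama (assumed default port).
local_req = build_chat_request("http://localhost:11434/v1", "llama3.2:3b", "Hello")

print(public_req.full_url)
print(local_req.full_url)
```

Everything above the connector (your chats, prompts, tools and RAG data) stays where it is, which is the whole point of managing it locally from the start.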

By the way, plenty of these advantages are either because of or enhanced by running on Cloudron. Cloudron is great.

> I haven’t tried DeepSeek locally but might be worth a shot for privacy. I wouldn’t use it otherwise.

I agree with that decision wholeheartedly. Well, unless you're talking with DeepSeek about stuff that you want the whole world to know and learn from. Then, go nuts.

But I don't need to sell Cloudron's benefits to this group, I'm sure, just noting they're great in relation to OpenWebUI. We are big fans of Cloudron, by the way!