"BrowserType.connect_over_cdp: Timeout 60000ms exceeded" error

-

Hi everyone,

I’m encountering an issue with changedetection.io.

When attempting to perform a website check, I receive the following error:

Exception: BrowserType.connect_over_cdp: Timeout 60000ms exceeded. Call log:

- ws://0.0.0.0:3000/

- ws://0.0.0.0:3000/

Troubleshooting Attempts:

- Restarted the app.

- Restarted Cloudron.

Is this a known issue?

Thanks in advance for any insights!

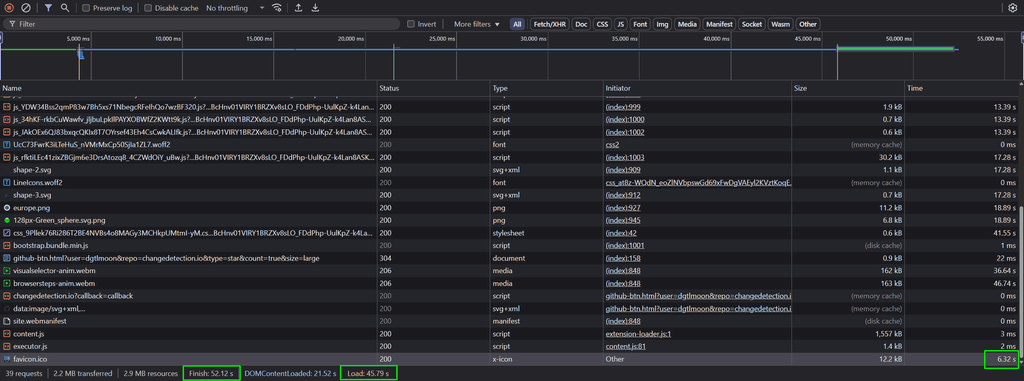

Edit: this is part of the log:

    Jun 18 13:51:50 xxx.xxx.xxx.xxx - - [18/Jun/2025 11:51:50] "GET / HTTP/1.1" 302 -
    Jun 18 13:51:51 2025-06-18 11:51:51.067 | DEBUG | __main__:_request_retry:422 - WebSocket ID: xxxxxxxxx-xxxx-xxxx-xxxx-xxxxxxxxxxxx - _request_retry attempt 14/20 for http://localhost:10280/json/version (timeout=11.5s)
    Jun 18 13:51:51 2025-06-18 11:51:51.069 | INFO | __main__:stats_thread_func:704 - Connections: Active count 1 of max 10, Total processed: 282.
    Jun 18 13:51:51 2025-06-18 11:51:51.071 | WARNING | __main__:_request_retry:441 - WebSocket ID: xxxxxxxxx-xxxxxxxxx-xxxx-xxxx-xxxxxxxxxxxx - Network error connecting to Chrome at http://localhost:10280/json/version, retrying (attempt 14/20)...
    Jun 18 13:51:51 2025-06-18 11:51:51.073 | INFO | __main__:stats_thread_func:727 - Process info: 6 child processes
    Jun 18 13:51:54 2025-06-18 11:51:54.075 | DEBUG | __main__:_request_retry:422 - WebSocket ID: xxxxxxxxx-xxxxxxxxx-xxxx-xxxx-xxxxxxxxxxxx - _request_retry attempt 15/20 for http://localhost:10280/json/version (timeout=12.0s)
    Jun 18 13:51:54 2025-06-18 11:51:54.075 | INFO | __main__:stats_thread_func:704 - Connections: Active count 1 of max 10, Total processed: 282.
    Jun 18 13:51:54 2025-06-18 11:51:54.080 | WARNING | __main__:_request_retry:441 - WebSocket ID: xxxxxxxxx-xxxxxxxxx-xxxx-xxxx-xxxxxxxxxxxx - Network error connecting to Chrome at http://localhost:10280/json/version, retrying (attempt 15/20)...
    Jun 18 13:51:54 2025-06-18 11:51:54.081 | INFO | __main__:stats_thread_func:727 - Process info: 6 child processes
    Jun 18 13:51:57 2025-06-18 11:51:57.083 | DEBUG | __main__:_request_retry:422 - WebSocket ID: xxxxxxxxx-xxxxxxxxx-xxxx-xxxx-xxxxxxxxxxxx - _request_retry attempt 16/20 for http://localhost:10280/json/version (timeout=12.5s)
    Jun 18 13:51:57 2025-06-18 11:51:57.083 | INFO | __main__:stats_thread_func:704 - Connections: Active count 1 of max 10, Total processed: 282.
    Jun 18 13:51:57 2025-06-18 11:51:57.087 | WARNING | __main__:_request_retry:441 - WebSocket ID: xxxxxxxxx-xxxxxxxxx-xxxx-xxxx-xxxxxxxxxxxx - Network error connecting to Chrome at http://localhost:10280/json/version, retrying (attempt 16/20)...
    Jun 18 13:51:57 2025-06-18 11:51:57.089 | INFO | __main__:stats_thread_func:727 - Process info: 6 child processes
    Jun 18 13:52:00 xxx.xxx.xxx.xxx - - [18/Jun/2025 11:52:00] "GET / HTTP/1.1" 302 -

-
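For anyone seeing the same log: it shows repeated requests to Chrome's `/json/version` endpoint, with the timeout growing by 0.5 s per attempt. The same probe can be run by hand to check whether Chrome is reachable at all. This is only a minimal sketch; the function names, the 4.5 s base, and the 3 s pause are assumptions fitted to the log lines, not taken from the browser proxy's source.

```python
import json
import time
import urllib.error
import urllib.request


def timeout_for_attempt(attempt, base=4.5, step=0.5):
    """Timeout in seconds for a 1-based attempt number.

    base and step are guesses that reproduce the log
    (attempt 14 -> 11.5s, 15 -> 12.0s, 16 -> 12.5s).
    """
    return base + step * attempt


def probe_chrome(url="http://localhost:10280/json/version",
                 max_attempts=20, pause=3.0):
    """Poll the DevTools version endpoint; return its JSON or None."""
    for attempt in range(1, max_attempts + 1):
        timeout = timeout_for_attempt(attempt)
        try:
            with urllib.request.urlopen(url, timeout=timeout) as resp:
                return json.load(resp)
        except (urllib.error.URLError, OSError, ValueError) as exc:
            # Connection refused, timeout, or a non-JSON reply all land here.
            print(f"attempt {attempt}/{max_attempts} failed: {exc}")
            time.sleep(pause)
    return None
```

Run inside the app container, `probe_chrome()` returning `None` would suggest that Chrome itself never came up, rather than a problem with one particular watched site.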

Hello @p44

Could be, but it could also be that some sites start throttling this specific agent after some time.

-

@james Another user opened an issue about this: https://github.com/dgtlmoon/changedetection.io/discussions/2593 Please have a look. Thanks.

-

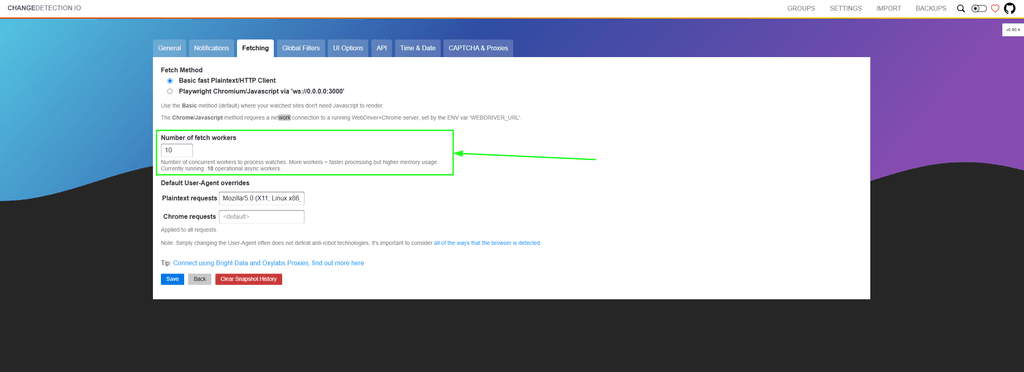

It seems Cloudron sticks to the default:

| `FETCH_WORKERS` | `10` | Number of async workers for watch processing |

Also there is this line:

https://github.com/dgtlmoon/changedetection.io/blob/58e2a41c9558a242e732320af4ab0b0ae6efe326/changedetectionio/flask_app.py#L596

    # Start the async workers during app initialization
    # Can be overridden by ENV or use the default settings
    n_workers = int(os.getenv("FETCH_WORKERS", datastore.data['settings']['requests']['workers']))

Which is also confirmed by the web UI.
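The precedence in the quoted line can be sketched in isolation. This is a simplified stand-in, not the project's code: `resolve_worker_count` and the plain `settings` dict are hypothetical replacements for the real `datastore`.

```python
import os


def resolve_worker_count(settings, env=None):
    """Return FETCH_WORKERS from the environment, else the stored setting."""
    env = os.environ if env is None else env
    stored = settings["requests"]["workers"]
    # Environment values are strings when set, hence the int() conversion.
    return int(env.get("FETCH_WORKERS", stored))


settings = {"requests": {"workers": 10}}  # hypothetical stored value

# No environment variable: the stored setting is used.
print(resolve_worker_count(settings, env={}))
# Environment variable set: it takes precedence over the stored setting.
print(resolve_worker_count(settings, env={"FETCH_WORKERS": "2"}))
```

Under this reading, if the Cloudron package does not set `FETCH_WORKERS`, the value saved in the web UI is the one that takes effect.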

-

@james Thanks. I changed the value to "2" in the web UI; let's see what happens.

Thanks a lot for your patience and your support, James
