How to configure Ollama in OpenWebUI

-

Hi guys,

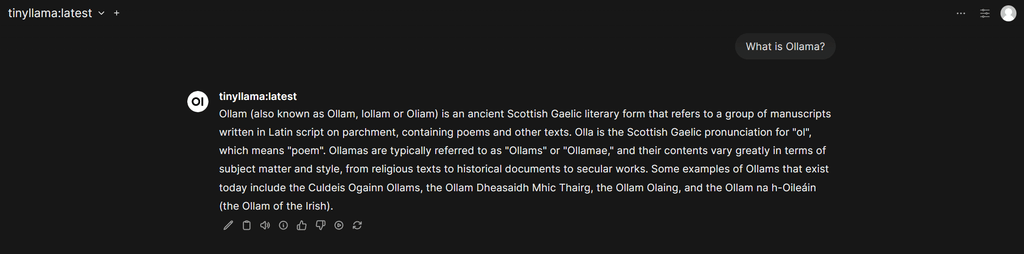

Could you please guide me on how to configure Ollama in OpenWebUI so that I can download a model to test?

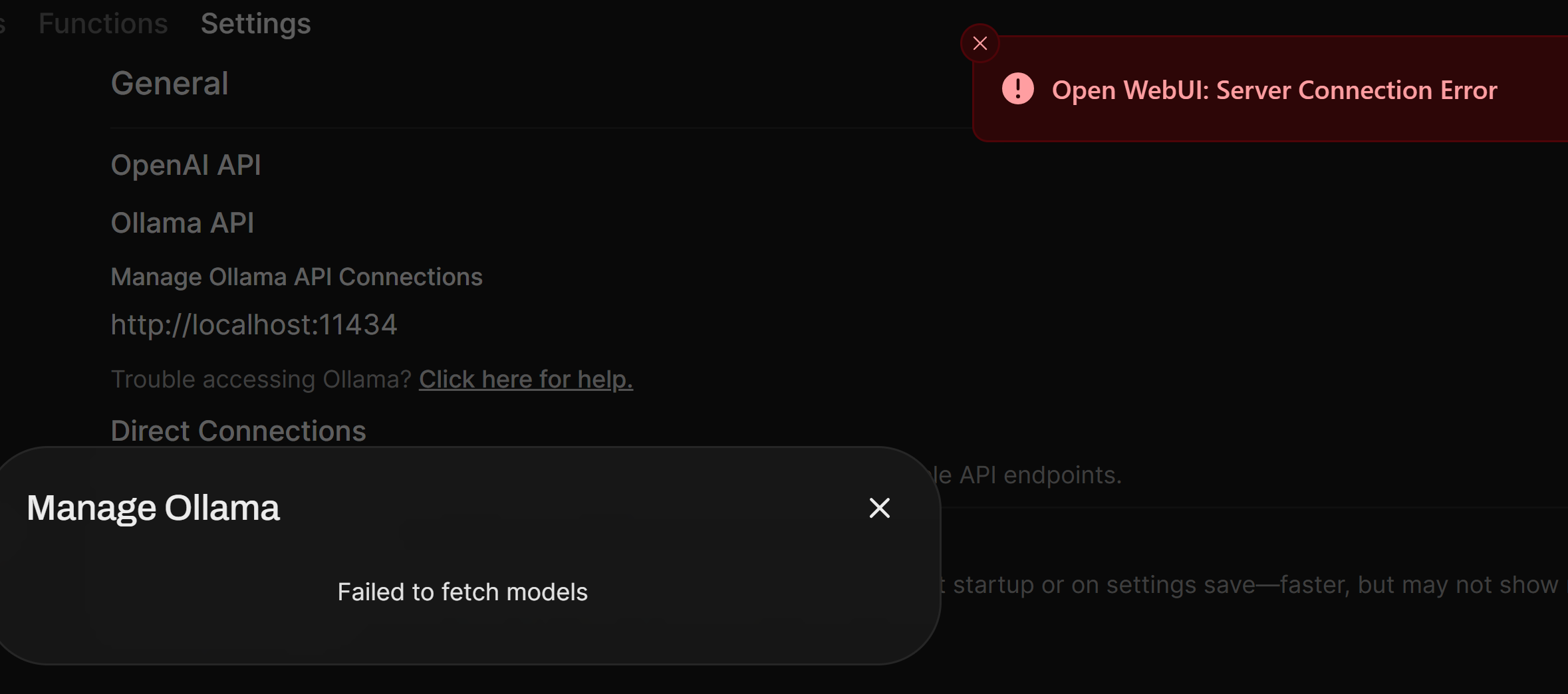

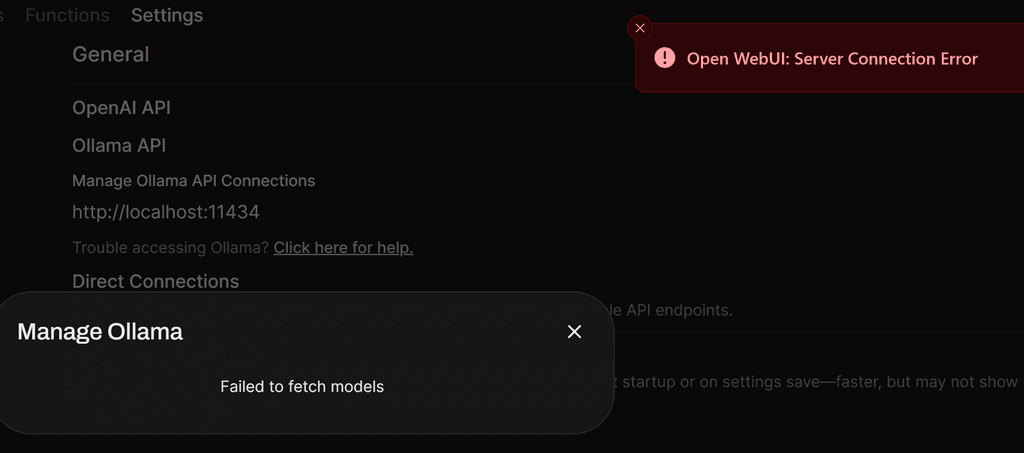

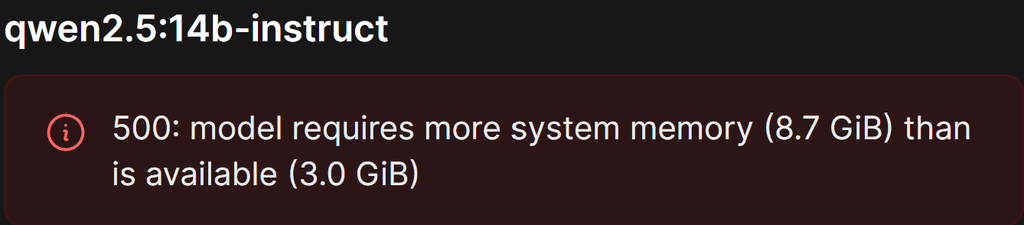

I am getting the error below after installing OpenWebUI.

I tried changing localhost to my OpenWebUI domain, but I still get the same error. My OpenWebUI domain is behind a Cloudflare proxy.

-

Hello @harryz

The OpenWebUI Cloudron app no longer comes bundled with an internal Ollama server.

Sorry, the documentation is outdated and I will update it accordingly.

Please install the Ollama app.

Inside the Ollama app web terminal, you can run this command to pull a model: `ollama pull tinyllama`

In the Ollama app web terminal, also run `cat /app/data/.api_key` and note down this key. You will need it.

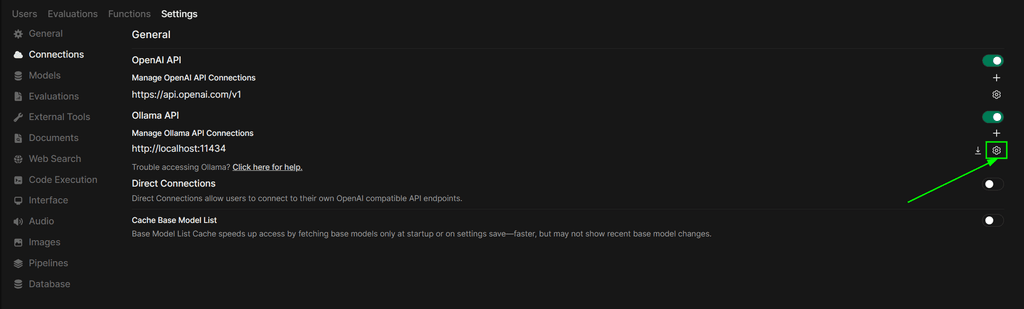
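To confirm the pull worked before moving on, `ollama list` in the same web terminal shows the models the server has. Here is a small sketch that checks that output for one model. The helper name `model_listed` is hypothetical, and it assumes the first column of each row of `ollama list` is the model name with its tag (e.g. `tinyllama:latest`).

```shell
#!/bin/sh
# Sketch: exit 0 if model NAME appears in `ollama list` output read from stdin.
# Assumption: the first line is a header and the first column of every other
# row is "name:tag"; a bare name such as "tinyllama" matches any tag.
model_listed() {
  awk -v want="$1" '
    NR > 1 {
      split($1, parts, ":")                  # parts[1] is the name without its tag
      if ($1 == want || parts[1] == want) found = 1
    }
    END { exit (found ? 0 : 1) }
  '
}
```

Usage in the Ollama web terminal: `ollama list | model_listed tinyllama && echo "tinyllama is ready"`.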

Then you need to configure the OpenWebUI app.

Log in as an admin and navigate to `https://YOUR.DOMAIN.TLD/admin/settings/connections`:

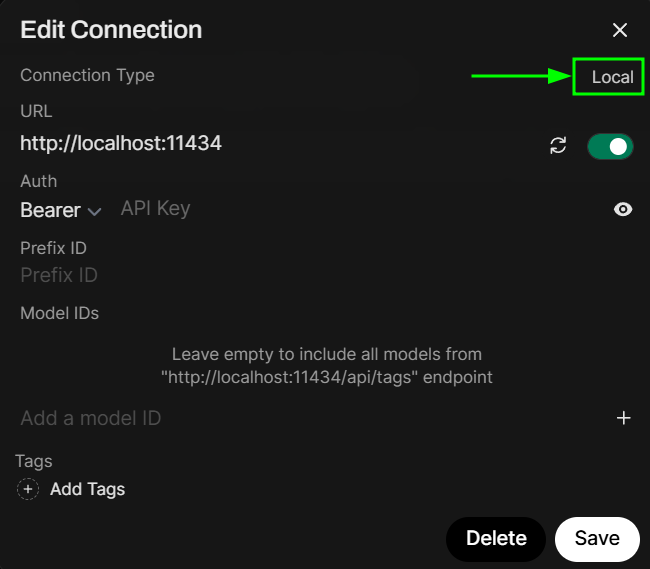

Press the configuration button for Ollama, then click on 'local' to change it to 'external':

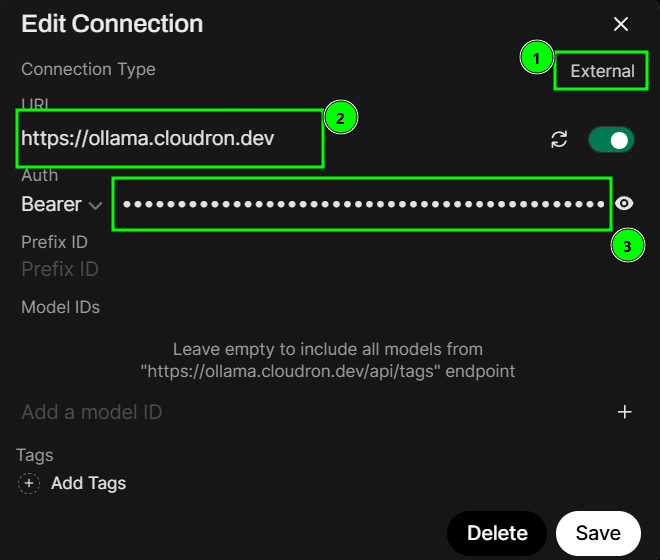

Once it shows 'external' (1), set the 'URL' to your Ollama app URL and paste the key from before into 'Auth':

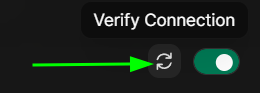

You can press the 'reload' icon to verify the connection:

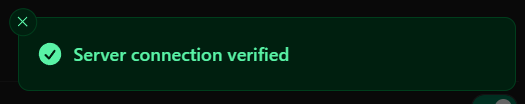

If you see the success message in the top right, you should be good to go and can click the 'Save' button.
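If the reload icon reports an error, the same URL and key can also be checked from a shell. This is a sketch under two assumptions: that the Cloudron Ollama app accepts the key from `/app/data/.api_key` as an `Authorization: Bearer` header, and that the URL is reachable from where you run it. `/api/tags` is Ollama's endpoint for listing local models; the function names and the example URL are hypothetical.

```shell
#!/bin/sh
# Hypothetical placeholders: set these to your Ollama app URL and the key
# printed by `cat /app/data/.api_key`.
OLLAMA_URL="${OLLAMA_URL:-https://ollama.example.com}"
OLLAMA_API_KEY="${OLLAMA_API_KEY:-}"

# Build the model-list endpoint, dropping one trailing slash from the base URL.
tags_url() {
  printf '%s/api/tags\n' "${1%/}"
}

# Print the server's model list as JSON; -f makes curl exit non-zero on HTTP errors.
# Assumption: the Cloudron Ollama app checks the key as a Bearer token.
list_models() {
  curl -fsS -H "Authorization: Bearer $2" "$(tags_url "$1")"
}
```

Usage: `list_models "$OLLAMA_URL" "$OLLAMA_API_KEY"`. An HTTP 401 or 403 most likely points at the key, while a connection error points at the URL.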

Now you can use your own Ollama server and models in OpenWebUI:

-

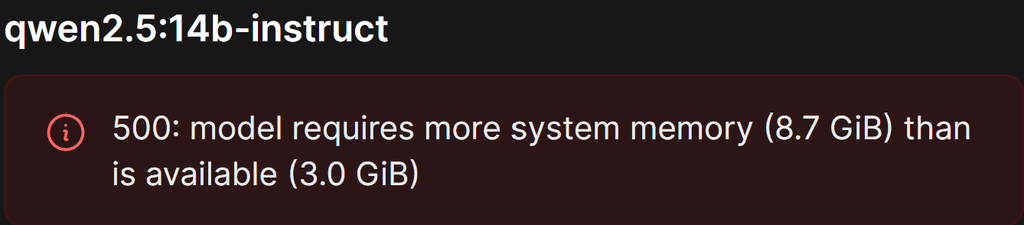

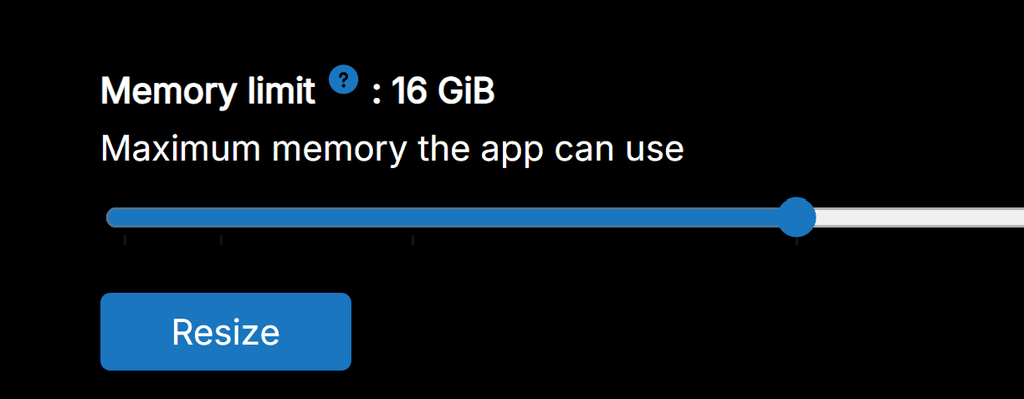

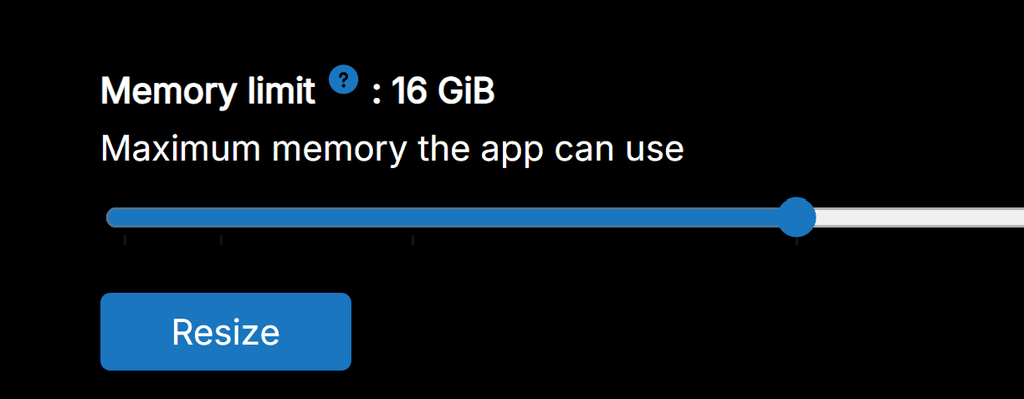

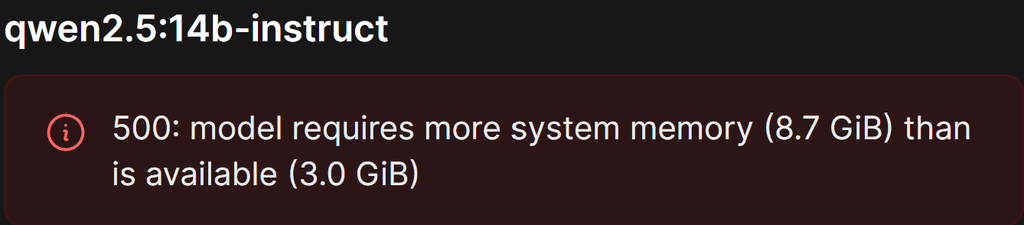

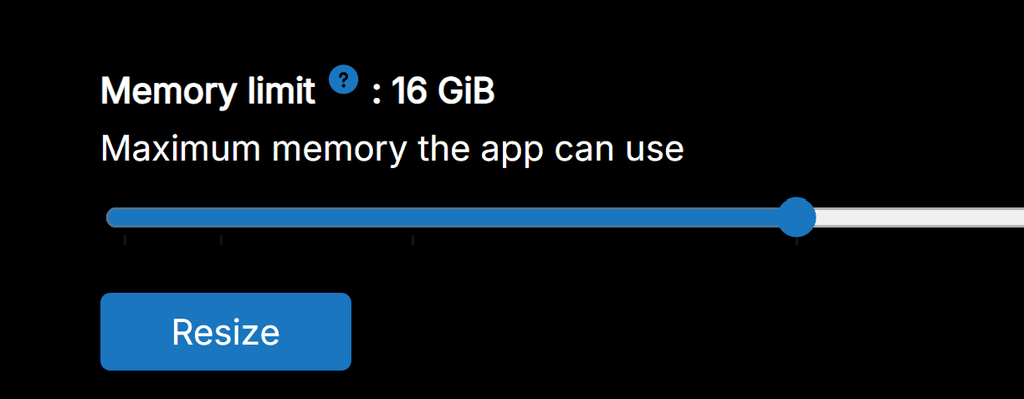

Thank you, James. You saved my day. However, how can I increase the system memory for OpenWebUI? I already resized the Memory Limit for the OpenWebUI app to 16GB, but is system memory a different setting in this case?

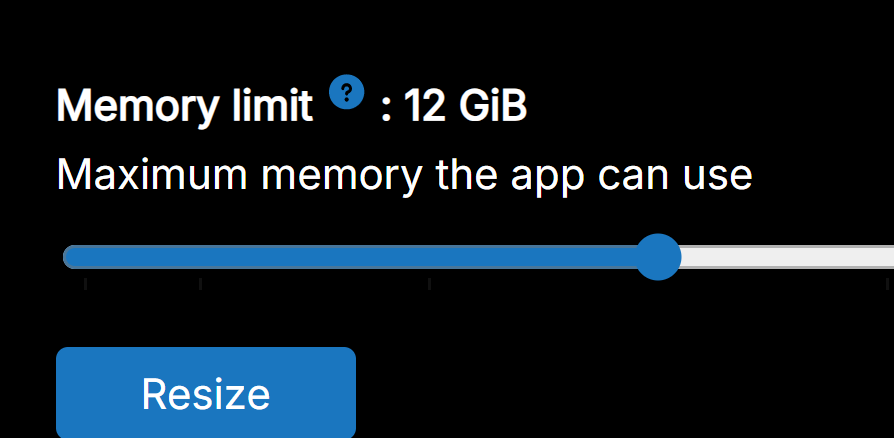

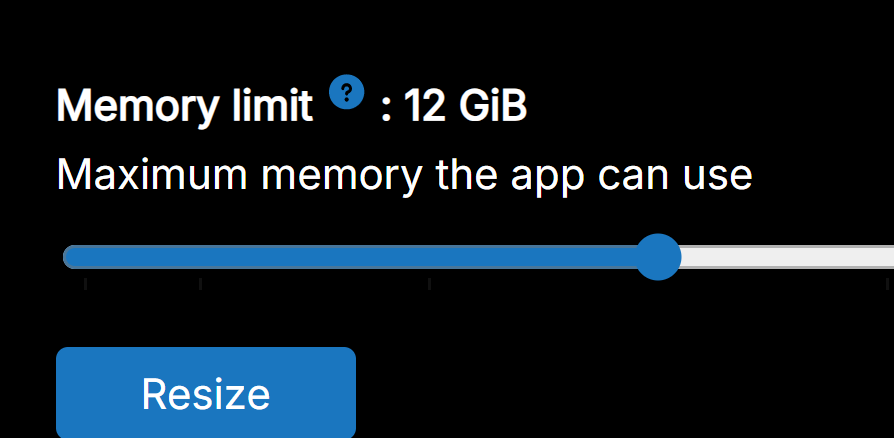

My Ollama app has 12GB of memory.

-

Hello @harryz

How much RAM does your server have?

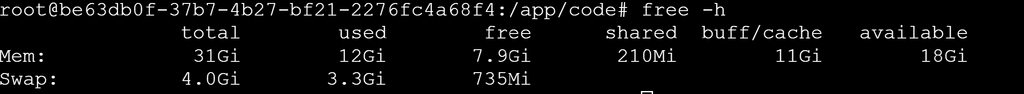

How much RAM is already in use? In the Ollama web terminal, run `free -h` and post the output, please.
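When reading that output, note that `free` takes its figures from `/proc/meminfo`, which inside a Docker container usually reflects the whole host rather than the app's memory limit. A small sketch for reading the container's own limit, assuming cgroup v2, where the limit is in `/sys/fs/cgroup/memory.max` (on cgroup v1 it is `/sys/fs/cgroup/memory/memory.limit_in_bytes`, which this sketch does not handle). The function name `mem_limit_gib` is hypothetical.

```shell
#!/bin/sh
# Sketch: print a cgroup v2 memory limit in whole GiB (rounded down).
# Assumption: the file holds either a byte count or the literal "max" (no limit).
mem_limit_gib() {
  limit_file="${1:-/sys/fs/cgroup/memory.max}"
  value=$(cat "$limit_file") || return 1
  if [ "$value" = "max" ]; then
    echo "unlimited"
  else
    # Integer division: 1 GiB = 1073741824 bytes
    echo "$(( value / 1073741824 )) GiB"
  fi
}
```

In the web terminal, run `free -h` and then `mem_limit_gib`. If the two differ, the second is likely the limit the app actually runs under.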
