Backups frequently crashing lately - "reason":"Internal Error"

-

Can you check how much memory you are giving the backup task? This is in the advanced settings of the backup configuration.

The default is 400 MB, and it will use that amount, since there is a fair amount of data piping happening, especially if disk and network I/O are fast.

-

@nebulon said in Backups frequently crashing lately - "reason":"Internal Error":

> The default would be 400Mb and it will use that amount, since there a fair amount of data piping happening, especially if disk and network I/O are good.

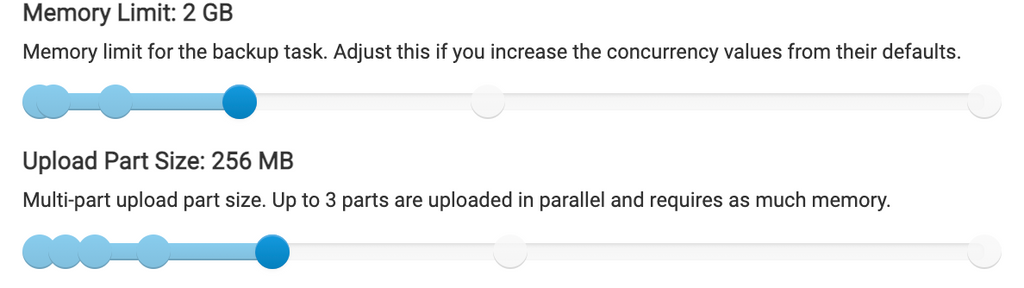

Well, since I initially thought it was the backup task's memory, which has been an issue for many users in the past, I had increased it to 6 GB (admittedly a lot on an 8 GB server). It was at 4 GB when I first saw the issue, though, and I've had it at 4 GB for many months. I've lowered it to 2 GB for now to test whether that helps at all, but I'm worried the trigger was the `mysqldump` commands, and I'm not sure whether the backup task memory would affect those at all.

This is the current configuration as of this morning for troubleshooting purposes:

-

Just encountered the issue again.

Here are the latest logs:

    Jun 17 03:13:17 my kernel: redis-server invoked oom-killer: gfp_mask=0x100cca(GFP_HIGHUSER_MOVABLE), order=0, oom_score_adj=0
    Jun 17 03:13:17 my kernel: CPU: 1 PID: 6436 Comm: redis-server Not tainted 5.4.0-74-generic #83-Ubuntu
    Jun 17 03:13:17 my kernel: Hardware name: Vultr HFC, BIOS
    Jun 17 03:13:17 my kernel: Call Trace:
    Jun 17 03:13:17 my kernel: dump_stack+0x6d/0x8b
    Jun 17 03:13:17 my kernel: dump_header+0x4f/0x1eb
    Jun 17 03:13:17 my kernel: oom_kill_process.cold+0xb/0x10
    Jun 17 03:13:17 my kernel: out_of_memory.part.0+0x1df/0x3d0
    Jun 17 03:13:17 my kernel: out_of_memory+0x6d/0xd0
    Jun 17 03:13:17 my kernel: __alloc_pages_slowpath+0xd5e/0xe50
    Jun 17 03:13:17 my kernel: __alloc_pages_nodemask+0x2d0/0x320
    Jun 17 03:13:17 my kernel: alloc_pages_current+0x87/0xe0
    Jun 17 03:13:17 my kernel: __page_cache_alloc+0x72/0x90
    Jun 17 03:13:17 my kernel: pagecache_get_page+0xbf/0x300
    Jun 17 03:13:17 my kernel: filemap_fault+0x6b2/0xa50
    Jun 17 03:13:17 my kernel: ? unlock_page_memcg+0x12/0x20
    Jun 17 03:13:17 my kernel: ? page_add_file_rmap+0xff/0x1a0
    Jun 17 03:13:17 my kernel: ? xas_load+0xd/0x80
    Jun 17 03:13:17 my kernel: ? xas_find+0x17f/0x1c0
    Jun 17 03:13:17 my kernel: ? filemap_map_pages+0x24c/0x380
    Jun 17 03:13:17 my kernel: ext4_filemap_fault+0x32/0x50
    Jun 17 03:13:17 my kernel: __do_fault+0x3c/0x130
    Jun 17 03:13:17 my kernel: do_fault+0x24b/0x640
    Jun 17 03:13:17 my kernel: __handle_mm_fault+0x4c5/0x7a0
    Jun 17 03:13:17 my kernel: handle_mm_fault+0xca/0x200
    Jun 17 03:13:17 my kernel: do_user_addr_fault+0x1f9/0x450
    Jun 17 03:13:17 my kernel: __do_page_fault+0x58/0x90
    Jun 17 03:13:17 my kernel: do_page_fault+0x2c/0xe0
    Jun 17 03:13:17 my kernel: do_async_page_fault+0x39/0x70
    Jun 17 03:13:17 my kernel: async_page_fault+0x34/0x40
    Jun 17 03:13:17 my kernel: RIP: 0033:0x5642ded5def4
    Jun 17 03:13:17 my kernel: Code: Bad RIP value.
    Jun 17 03:13:17 my kernel: RSP: 002b:00007ffe39d1f480 EFLAGS: 00010293
    Jun 17 03:13:17 my kernel: RAX: 0000000000000001 RBX: 0000000000000000 RCX: 00005642dedb5a14
    Jun 17 03:13:17 my kernel: RDX: 0000000000000001 RSI: 00007f6c13408823 RDI: 0000000000000002
    Jun 17 03:13:17 my kernel: RBP: 0000000000000000 R08: 0000000000000000 R09: 00007ffe39d1f3d8
    Jun 17 03:13:17 my kernel: R10: 00007ffe39d1f3d0 R11: 0000000000000000 R12: 00007ffe39d1f648
    Jun 17 03:13:17 my kernel: R13: 0000000000000002 R14: 00007f6c1341f020 R15: 0000000000000000
    Jun 17 03:13:17 my kernel: Mem-Info:
    Jun 17 03:13:17 my kernel: active_anon:961079 inactive_anon:871527 isolated_anon:0
    Jun 17 03:13:17 my kernel: Node 0 active_anon:3844316kB inactive_anon:3486108kB active_file:624kB inactive_file:464kB unevictable:18540kB isolated(anon):0kB isolated(file):20kB mapped:900656kB dirty:0kB writeback:0kB shmem:1207700kB shmem_thp: 0kB shmem_pmdmapped: 0kB anon_thp: 0kB writeback_tmp:0kB unstable:0kB all_unreclaimable? no
    Jun 17 03:13:17 my kernel: Node 0 DMA free:15900kB min:132kB low:164kB high:196kB active_anon:0kB inactive_anon:0kB active_file:0kB inactive_file:0kB unevictable:0kB writepending:0kB present:15992kB managed:15908kB mlocked:0kB kernel_stack:0kB pagetables:0kB bounce:0kB free_pcp:0kB local_pcp:0kB free_cma:0kB
    Jun 17 03:13:17 my kernel: lowmem_reserve[]: 0 2911 7858 7858 7858
    Jun 17 03:13:17 my kernel: Node 0 DMA32 free:44284kB min:24988kB low:31232kB high:37476kB active_anon:1420788kB inactive_anon:1398328kB active_file:0kB inactive_file:360kB unevictable:0kB writepending:0kB present:3129200kB managed:3063664kB mlocked:0kB kernel_stack:9504kB pagetables:29712kB bounce:0kB free_pcp:1560kB local_pcp:540kB free_cma:0kB
    Jun 17 03:13:17 my kernel: lowmem_reserve[]: 0 0 4946 4946 4946
    Jun 17 03:13:17 my kernel: Node 0 Normal free:42004kB min:42460kB low:53072kB high:63684kB active_anon:2423528kB inactive_anon:2087780kB active_file:588kB inactive_file:808kB unevictable:18540kB writepending:0kB present:5242880kB managed:5073392kB mlocked:18540kB kernel_stack:29040kB pagetables:53096kB bounce:0kB free_pcp:3512kB local_pcp:676kB free_cma:0kB
    Jun 17 03:13:17 my kernel: lowmem_reserve[]: 0 0 0 0 0
    Jun 17 03:13:17 my kernel: Node 0 DMA: 1*4kB (U) 1*8kB (U) 1*16kB (U) 0*32kB 2*64kB (U) 1*128kB (U) 1*256kB (U) 0*512kB 1*1024kB (U) 1*2048kB (M) 3*4096kB (M) = 15900kB
    Jun 17 03:13:17 my kernel: Node 0 DMA32: 3172*4kB (UME) 1384*8kB (UE) 744*16kB (UME) 242*32kB (UME) 15*64kB (UME) 0*128kB 0*256kB 0*512kB 0*1024kB 0*2048kB 0*4096kB = 44368kB
    Jun 17 03:13:17 my kernel: Node 0 Normal: 157*4kB (UME) 2012*8kB (UMEH) 976*16kB (UMEH) 291*32kB (UME) 4*64kB (M) 2*128kB (M) 1*256kB (M) 0*512kB 0*1024kB 0*2048kB 0*4096kB = 42420kB
    Jun 17 03:13:17 my kernel: Node 0 hugepages_total=0 hugepages_free=0 hugepages_surp=0 hugepages_size=2048kB
    Jun 17 03:13:17 my kernel: 337832 total pagecache pages
    Jun 17 03:13:17 my kernel: 33532 pages in swap cache
    Jun 17 03:13:17 my kernel: Swap cache stats: add 413059, delete 379528, find 3909879/3976154
    Jun 17 03:13:17 my kernel: Free swap = 0kB
    Jun 17 03:13:17 my kernel: Total swap = 1004540kB
    Jun 17 03:13:17 my kernel: 2097018 pages RAM
    Jun 17 03:13:17 my kernel: 0 pages HighMem/MovableOnly
    Jun 17 03:13:17 my kernel: 58777 pages reserved
    Jun 17 03:13:17 my kernel: 0 pages cma reserved
    Jun 17 03:13:17 my kernel: 0 pages hwpoisoned
    Jun 17 03:13:17 my kernel: oom-kill:constraint=CONSTRAINT_NONE,nodemask=(null),cpuset=ac3d3b89315a33f079b879cebf2fa7d68e0512640f9a7ab16e1b9c79b192fa1b,mems_allowed=0,global_oom,task_memcg=/system.slice/box-task-10731.service,task=node,pid=1757378,uid=0
    Jun 17 03:13:17 my kernel: Out of memory: Killed process 1757378 (node) total-vm:2615204kB, anon-rss:1725444kB, file-rss:0kB, shmem-rss:0kB, UID:0 pgtables:8244kB oom_score_adj:0
    Jun 17 03:13:17 my kernel: oom_reaper: reaped process 1757378 (node), now anon-rss:0kB, file-rss:0kB, shmem-rss:0kB
    Jun 17 03:13:17 my sudo[1757377]: pam_unix(sudo:session): session closed for user root
    Jun 17 03:13:17 my systemd[1]: box-task-10731.service: Main process exited, code=exited, status=50/n/a
    Jun 17 03:13:17 my systemd[1]: box-task-10731.service: Failed with result 'exit-code'.

This time it wasn't MySQL but Redis, apparently?
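
One detail in the log above is worth separating out: the first line names the process whose memory request happened to trigger the OOM killer (`redis-server`), while the `Killed process` line names the victim the kernel actually chose, a `node` process in `box-task-10731.service`, the same unit that then failed with status 50. A minimal Python sketch that pulls both out of a pasted log, assuming the message wording of the 5.4 kernel shown here (other kernel versions may phrase these lines differently); `summarize_oom` is a hypothetical helper, not part of Cloudron or any existing tool:

```python
import re

# Patterns for the OOM messages as worded in the 5.4 kernel log above (assumption:
# other kernel versions may format these lines differently).
INVOKER_RE = re.compile(r"kernel: (?P<comm>\S+) invoked oom-killer:")
VICTIM_RE = re.compile(
    r"Out of memory: Killed process (?P<pid>\d+) \((?P<comm>[^)]+)\)"
    r".*?anon-rss:(?P<anon_rss_kb>\d+)kB"
)
MEMCG_RE = re.compile(r"task_memcg=(?P<memcg>[^,]+)")


def summarize_oom(log_text: str) -> dict:
    """Return which process triggered the OOM killer and which one was killed."""
    summary = {}
    if m := INVOKER_RE.search(log_text):
        summary["invoked_by"] = m.group("comm")
    if m := VICTIM_RE.search(log_text):
        summary["killed_comm"] = m.group("comm")
        summary["killed_pid"] = int(m.group("pid"))
        summary["killed_anon_rss_mb"] = int(m.group("anon_rss_kb")) // 1024
    if m := MEMCG_RE.search(log_text):
        # The cgroup tells you which service the victim belonged to.
        summary["killed_cgroup"] = m.group("memcg")
    return summary


# Three lines copied from the log above.
sample = """\
Jun 17 03:13:17 my kernel: redis-server invoked oom-killer: gfp_mask=0x100cca(GFP_HIGHUSER_MOVABLE), order=0, oom_score_adj=0
Jun 17 03:13:17 my kernel: oom-kill:constraint=CONSTRAINT_NONE,nodemask=(null),mems_allowed=0,global_oom,task_memcg=/system.slice/box-task-10731.service,task=node,pid=1757378,uid=0
Jun 17 03:13:17 my kernel: Out of memory: Killed process 1757378 (node) total-vm:2615204kB, anon-rss:1725444kB, file-rss:0kB, shmem-rss:0kB, UID:0 pgtables:8244kB oom_score_adj:0
"""

print(summarize_oom(sample))
```

The invoker is simply whoever asked for a page at the wrong moment; the victim is picked by the kernel's badness score, which is why the two usually differ.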

The system memory output is below too:

    free -h
                  total        used        free      shared  buff/cache   available
    Mem:          7.8Gi       4.5Gi       237Mi       1.2Gi       3.1Gi       1.8Gi
    Swap:         980Mi       969Mi        11Mi

-

@d19dotca as mentioned, I think the only options for now are to either increase the "physical" memory on the server or decrease the memory limit for the backup task. Also, judging from your screenshot, you might want to decrease the part size: going by your memory paste and a part size of 256 MB, a single part loaded into memory would force the server to kill something to free up memory.

Of course, decreasing the part size usually means slower backups, and if you have huge files, some object storages will then hit their part-number limits...
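
To put rough numbers on the part-size tradeoff, the sketch below counts multipart-upload parts for a given part size. It assumes AWS S3's published multipart limits (at most 10,000 parts per upload, 5 MiB to 5 GiB per part); other S3-compatible storages may use different limits, and the 50 GiB object is a hypothetical size, not one from this thread. `parts_needed` and `largest_object` are illustrative helpers only.

```python
# Assumed limits: AWS S3 multipart upload. Other S3-compatible storages may differ.
MAX_PARTS = 10_000
MiB = 1024**2
GiB = 1024**3
MIN_PART = 5 * MiB  # every part except the last must be at least this big
MAX_PART = 5 * GiB


def parts_needed(object_bytes: int, part_bytes: int) -> int:
    """How many parts a multipart upload of this object would use."""
    if not MIN_PART <= part_bytes <= MAX_PART:
        raise ValueError("part size outside the assumed S3 limits")
    # Integer ceiling division; an empty object still needs one part.
    return max(1, (object_bytes + part_bytes - 1) // part_bytes)


def largest_object(part_bytes: int) -> int:
    """Biggest object that still fits within MAX_PARTS parts of this size."""
    return part_bytes * MAX_PARTS


for part_mib in (256, 64, 16):
    part = part_mib * MiB
    parts = parts_needed(50 * GiB, part)  # hypothetical 50 GiB backup object
    limit_gib = largest_object(part) / GiB
    print(f"{part_mib:>3} MiB parts: {parts:>5} parts for 50 GiB, limit ~{limit_gib:.0f} GiB")
```

Under these assumptions, going from 256 MiB to 16 MiB parts cuts the size of each part held in memory by 16x, but lowers the largest uploadable object from about 2,500 GiB to about 156 GiB.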

-

@nebulon Okay, I've lowered the backup config further and hope that works for now. I just don't quite get why memory is an issue when my server never seems to go much beyond 5 GB used out of 8 GB. Nothing really changed apart from moving to Vultr, and that was about 1.5 months ago, whereas this issue only started in the past week or so.

-

@d19dotca but your screen pastes do show the server getting low on memory.

237Mi `free` is quite low given your part-size setting. Whatever shared/buff/available shows there, if the kernel decides to kill a task, it means those other bits are deemed more important and can't be freed up.

Especially during a backup, system I/O will use quite a bit of memory for buffering. I acknowledge that it is not ideal to keep such a large amount of memory around just for the backup task, so I think the alternative is to restrict the backup task heavily and keep backup objects small (which may mean not using the tarball strategy, if you are). The tradeoff will be slower backups, though.

-

@nebulon Okay, I haven't had a backup crash in roughly the last two days, since severely limiting the backup memory compared to what it used to be. I still don't understand why it suddenly started crashing when it has been configured this way for so long, with no new apps or memory changes (that I'm aware of, anyway), but I'll keep an eye on it. Thanks for the help, Nebulon; limiting the backup memory seems to be working for now.

-

Hi @nebulon, I've recently noticed a few `puma: cluster worker` processes that seem to be taking up a decent chunk of memory. Any idea whether this is Cloudron-related? I haven't noticed `puma` before, and I'm wondering whether it's related to the memory issues I've been having recently.

    ubuntu@my:~$ ps aux --sort -rss | head
    USER       PID %CPU %MEM      VSZ    RSS TTY    STAT START   TIME COMMAND
    uuidd    10044  4.4  2.0  2617076 300100 ?      Sl   09:13   0:22 /usr/sbin/mysqld
    ubuntu   13987  0.3  1.8  1129480 279584 pts/0  Sl   09:14   0:01 puma: cluster worker 1: 17 [code]
    ubuntu   13982  0.2  1.6  1129480 253660 pts/0  Sl   09:14   0:00 puma: cluster worker 0: 17 [code]
    ubuntu   13717  2.6  1.6   406624 251220 pts/0  Sl   09:14   0:11 sidekiq 6.2.1 code [0 of 2 busy]
    ubuntu   13716  2.2  1.6   453504 243064 pts/0  Sl   09:14   0:09 puma 5.3.2 (tcp://127.0.0.1:3000) [code]
    yellowt+ 11728  1.9  1.1  1592140 177712 pts/0  Sl   09:13   0:09 /home/git/gitea/gitea web -c /run/gitea/app.ini -p 3000
    mysql      834  1.0  1.0  2482488 161512 ?      Ssl  09:12   0:05 /usr/sbin/mysqld
    root       845  2.5  0.8  3316204 132860 ?      Ssl  09:12   0:13 /usr/bin/dockerd -H fd:// --log-driver=journald --exec-opt native.cgroupdriver=cgroupfs --storage-driver=overlay2
    ubuntu   14525  1.4  0.8 11457536 122692 pts/0  Sl+  09:14   0:05 /usr/local/node-14.15.4/bin/node /app/code/app.js

Edit: I believe I've now confirmed that the `puma` and `sidekiq` processes are related to Mastodon: when I stop Mastodon, they go away too. I realized this issue started right around the time of the latest Mastodon update, about 17 days ago, so now I'm wondering whether the latest 1.6.2 release is related... https://forum.cloudron.io/post/32335

-

@d19dotca if it aligns time-wise, this is quite possible. Maybe Mastodon also has memory spikes, which could then coincide with the memory requirements of the backup process. I'm not sure what could be done, though, if the memory really is needed by both the backup and the app components.

-

@nebulon So I stopped the Mastodon app, and things seem a bit better, but the memory still runs low: within maybe 12 hours, `free` memory is under 300 MB. I don't think that used to be the case. Your comment about free memory being low made me curious about what was taking it up. With all apps and services running except Mastodon, and soon after a reboot, this is the memory usage by service:

    ubuntu@my:~$ ps axo rss,comm,pid | awk '{ proc_list[$2] += $1; } END \
    { for (proc in proc_list) { printf("%d\t%s\n", proc_list[proc],proc); }}' | sort -n | tail -n 20 | sort -rn | awk '{$1/=1024;printf "%.0fMB\t",$1}{print $2}'
    3942MB  /usr/sbin/apach
    1769MB  node
    1030MB  ruby2.7
    717MB   supervisord
    511MB   mysqld
    468MB   containerd-shim
    263MB   spamd
    220MB   redis-server
    207MB   postmaster
    164MB   nginx
    132MB   dockerd
    114MB   mongod
    94MB    uwsgi
    59MB    containerd
    57MB    systemd-udevd
    38MB    carbon-cache
    35MB    snapd
    35MB    imap
    34MB    pidproxy
    33MB    radicale

Does the memory usage for `node` sound about right at under 2 GB? Do you have any concerns about the top 20 memory-consuming tasks above?

For context, this is my server's memory after a reboot (I'll update tomorrow morning/afternoon when it's low again):

    ubuntu@my:~$ free -h
                  total        used        free      shared  buff/cache   available
    Mem:           14Gi       4.0Gi       4.8Gi       449Mi       5.5Gi       9.6Gi
    Swap:         4.0Gi          0B       4.0Gi

-

@d19dotca my shell-fu is not that great, but is it possible that your listing combines all processes with the same name? If so, maybe this is OK; otherwise I wonder which single `node` process that is, and a single `supervisord` should hardly take up that much.

-

@nebulon Oh sorry, yes, that was a command I found online and thought was neat, haha. It combines processes with the same name, which I find useful at times, so it's basically a summary of all MySQL processes, all Node processes, etc.
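
For anyone who finds the awk pipeline hard to follow, here is a rough Python equivalent: it sums the RSS of every process sharing a `comm` name, so `node` is the total across all Node processes rather than a single one. `rss_by_command` and `live_ps_output` are hypothetical helpers, and the sample rows reuse RSS/PID values from the `ps aux` output posted earlier. One caveat: RSS counts shared pages in every process that maps them, so summing it across forked workers (Apache, for example) can overstate the real total.

```python
import subprocess
from collections import defaultdict


def rss_by_command(ps_output: str, top: int = 20) -> list[tuple[str, int]]:
    """Sum RSS per command name from `ps axo rss,comm,pid` output.

    ps reports RSS in KiB; the result is (command, MiB) pairs, largest first,
    similar to what the awk pipeline prints.
    """
    totals_kib: dict[str, int] = defaultdict(int)
    for line in ps_output.splitlines()[1:]:  # skip the "RSS COMMAND PID" header
        fields = line.split()
        if len(fields) < 3:
            continue
        # First column is RSS, last is PID; anything in between is the name.
        totals_kib[" ".join(fields[1:-1])] += int(fields[0])
    ranked = sorted(totals_kib.items(), key=lambda kv: kv[1], reverse=True)
    return [(comm, round(kib / 1024)) for comm, kib in ranked[:top]]


def live_ps_output() -> str:
    """Capture `ps axo rss,comm,pid` on the current host (needs procps)."""
    return subprocess.run(["ps", "axo", "rss,comm,pid"],
                          capture_output=True, text=True, check=True).stdout


# Sample rows reusing RSS/PID values from the `ps aux` output in this thread.
sample = """\
   RSS COMMAND             PID
161512 mysqld              834
300100 mysqld            10044
122692 node              14525
"""

for comm, mib in rss_by_command(sample):
    print(f"{mib}MB\t{comm}")
```

On a live server, `rss_by_command(live_ps_output())` gives the same kind of per-name summary as the original one-liner.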

Thanks for the assistance, by the way. I know I'm deviating a bit from the original concern, so we can probably mark this resolved now, since limiting the backup task memory was sufficient for now. It just has me wondering about overall memory usage on my system now, haha. I may file a new post if I need more insight into memory later. Thanks again for the help!

For reference, here is the current status of those commands:

    ubuntu@my:~$ free -h
                  total        used        free      shared  buff/cache   available
    Mem:           14Gi       5.3Gi       797Mi       925Mi       8.2Gi       7.8Gi
    Swap:         4.0Gi       3.0Mi       4.0Gi

There was 2.2 GB free when I woke up this morning, but little is left now because a backup just completed. I'm hoping that memory gets released, but I wonder whether this is why "free" memory gets so low (even though plenty is left in "available" memory).

    ubuntu@my:~$ ps axo rss,comm,pid | awk '{ proc_list[$2] += $1; } END \
    > { for (proc in proc_list) { printf("%d\t%s\n", proc_list[proc],proc); }}' | sort -n | tail -n 20 | sort -rn | awk '{$1/=1024;printf "%.0fMB\t",$1}{print $2}'
    5793MB  /usr/sbin/apach
    1958MB  node
    1070MB  ruby2.7
    903MB   mysqld
    716MB   supervisord
    476MB   containerd-shim
    327MB   spamd
    236MB   redis-server
    179MB   postmaster
    170MB   nginx
    139MB   mongod
    133MB   dockerd
    121MB   uwsgi
    81MB    imap
    65MB    systemd-udevd
    61MB    containerd
    59MB    imap-login
    44MB    radicale
    39MB    carbon-cache
    36MB    snapd

Definitely a lot more Apache-related memory usage, which I guess is just all the containers running Apache (all the WordPress sites, for example), and that makes sense since most of the apps I run in Cloudron are WordPress apps. MySQL memory also almost doubled, but since so many are WordPress sites, if they're getting busier I'd expect more MySQL usage and therefore more MySQL memory, so I think it all still checks out.

One thing I'm not sure I fully understand yet (though maybe this is a bit outside the scope of Cloudron) is the memory accounting. Last night I had 4.0 GB used and 4.8 GB free; now it's 5.3 GB used, but free memory dropped by about 4 GB, from 4.8 GB to 797 MB. That's where I get a bit confused: why does free memory go so low when used memory only went up a bit? I believe the memory basically becomes "reserved" and is used for "buff/cache", and when it's no longer needed it stays reserved but counts as "available". Is that right, do you think?
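
A small Python sketch of where those columns come from, assuming a Linux host and a recent procps-ng `free`, which reads `/proc/meminfo`: `free` is `MemFree` (pages not used for anything), `buff/cache` is `Buffers + Cached + SReclaimable`, and `available` is the kernel's `MemAvailable` estimate, which counts much of that cache as reclaimable. The helper names are hypothetical, and the sample values are made up, chosen only to roughly match the `free -h` output above.

```python
KIB_PER_MIB = 1024


def parse_meminfo(text: str) -> dict[str, int]:
    """Parse /proc/meminfo-style text into {field: value}; values are in kB."""
    info: dict[str, int] = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        fields = rest.split()
        if sep and fields:
            info[key.strip()] = int(fields[0])
    return info


def free_columns(info: dict[str, int]) -> dict[str, int]:
    """Map meminfo fields onto the columns printed by procps-ng `free` (kB)."""
    return {
        "total": info["MemTotal"],
        "free": info["MemFree"],  # pages not used for anything at all
        "buff/cache": info["Buffers"] + info["Cached"] + info.get("SReclaimable", 0),
        "available": info["MemAvailable"],  # kernel estimate, includes reclaimable cache
    }


def read_live_meminfo() -> dict[str, int]:
    """Read the current host's counters (Linux only)."""
    with open("/proc/meminfo") as f:
        return parse_meminfo(f.read())


# Made-up sample, roughly matching the `free -h` output in this thread.
sample = """\
MemTotal:       14680064 kB
MemFree:          816128 kB
MemAvailable:    8178892 kB
Buffers:          262144 kB
Cached:          7864320 kB
SReclaimable:     471040 kB
"""

cols = free_columns(parse_meminfo(sample))
for name, kib in cols.items():
    print(f"{name:>10}: {kib / KIB_PER_MIB:8.0f} MiB")
print(f"available minus free: {(cols['available'] - cols['free']) / KIB_PER_MIB:.0f} MiB")
```

With these sample numbers, `available` exceeds `free` by roughly 7 GiB, most of which is cache the kernel expects it can reclaim when something else needs the memory.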
