After Ubuntu 22/24 Upgrade syslog getting spammed and grows way to much clogging up the diskspace

-

@SansGuidon the issue arises only with the logs of some specific apps it seems. Did you notice which app specifically is growing in log size? Or is it all the app logs? But you are right, this problem is solved only in Cloudron 9.

Based on early investigation, some apps such as Syncthing, LAMP, or even Wallos generate more logs than the rest. But that is only from looking at the data of the past few hours, after applying the diff and the logrotate tuning. I'll keep you posted if I find more interesting evidence. If someone has a script to quickly generate relevant stats, I'm interested.
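One possible starting point for such stats is a rough sketch that sums the approximate bytes per log tag in a syslog-style file. The function name `syslog_bytes_by_tag` is hypothetical, and the field positions are assumptions taken from the log excerpts in this thread: the tag is the third field, and for lines tagged `syslog.js` the app or service id is the seventh field.

```shell
# Print the largest log producers in a syslog-style file as "<bytes> <tag>".
# $1: path to the file, $2: number of rows to print (default 10).
# Field positions are assumptions based on the excerpts in this thread.
syslog_bytes_by_tag() {
    awk '
        {
            tag = $3
            # Lines tagged "syslog.js[pid]:" carry the app or service id
            # in the 7th field, so group those by that id instead.
            if (tag ~ /^syslog\.js/ && $4 ~ /^<[0-9]+>1$/) tag = $7
            sub(/\[[0-9]+\]:$/, "", tag)   # strip a trailing "[pid]:"
            sub(/:$/, "", tag)             # strip a bare trailing ":"
            bytes[tag] += length($0) + 1   # +1 for the newline
        }
        END { for (t in bytes) printf "%.0f %s\n", bytes[t], t }
    ' "$1" | sort -rn | head -n "${2:-10}"
}
```

Something like `syslog_bytes_by_tag /var/log/syslog 5` would then list the five heaviest tags. Container tags show up as short container ids, so they still need to be mapped back to apps by hand.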

-

@girish I don't think I've hit this issue myself, but why not just push out an 8.3.3 with this fix?

@jdaviescoates Yes, that could help: in its current state, the syslog implementation generates errors in my logs, which could explain why the logs keep growing. So I had to apply the diff to avoid this repeated pattern:

    2025-08-31T20:42:40.149390+00:00 ubuntu-cloudron-16gb-nbg1-3 syslog.js[970341]: <30>1 2025-08-31T20:42:40Z ubuntu-cloudron-16gb-nbg1-3 b5b418fc-0f16-4cde-81a1-1213880c9a10 1123 b5b418fc-0f16-4cde-81a1-1213880c9a10 - IndexError: list index out of range
    2025-08-31T20:42:40.240033+00:00 ubuntu-cloudron-16gb-nbg1-3 syslog.js[970341]: <30>1 2025-08-31T20:42:40Z ubuntu-cloudron-16gb-nbg1-3 cd4a6fed-6fd7-4616-ba0d-d0c38972774b 1123 cd4a6fed-6fd7-4616-ba0d-d0c38972774b - 172.18.0.1 - - [31/Aug/2025:20:42:40 +0000] "GET / HTTP/1.1" 200 45257 "-" "Mozilla (CloudronHealth)"
    2025-08-31T20:42:41.676806+00:00 ubuntu-cloudron-16gb-nbg1-3 syslog.js[970341]: <30>1 2025-08-31T20:42:41Z ubuntu-cloudron-16gb-nbg1-3 mongodb 1123 mongodb - {"t":{"$date":"2025-08-31T20:42:41.675+00:00"},"s":"D1", "c":"REPL", "id":21223, "ctx":"NoopWriter","msg":"Set last known op time","attr":{"lastKnownOpTime":{"ts":{"$timestamp":{"t":1756672961,"i":1}},"t":42}}}
    2025-08-31T20:42:43.067695+00:00 ubuntu-cloudron-16gb-nbg1-3 syslog.js[970341]: <30>1 2025-08-31T20:42:43Z ubuntu-cloudron-16gb-nbg1-3 mongodb 1123 mongodb - {"t":{"$date":"2025-08-31T20:42:43.066+00:00"},"s":"D1", "c":"NETWORK", "id":4668132, "ctx":"ReplicaSetMonitor-TaskExecutor","msg":"ReplicaSetMonitor ping success","attr":{"host":"mongodb:27017","replicaSet":"rs0","durationMicros":606}}
    2025-08-31T20:42:44.061046+00:00 ubuntu-cloudron-16gb-nbg1-3 syslog.js[970341]: <30>1 2025-08-31T20:42:44Z ubuntu-cloudron-16gb-nbg1-3 b5b418fc-0f16-4cde-81a1-1213880c9a10 1123 b5b418fc-0f16-4cde-81a1-1213880c9a10 - url = link.split(" : ")[0].split(" ")[1].strip("[]")
    2025-08-31T20:42:44.061077+00:00 ubuntu-cloudron-16gb-nbg1-3 syslog.js[970341]: <30>1 2025-08-31T20:42:44Z ubuntu-cloudron-16gb-nbg1-3 b5b418fc-0f16-4cde-81a1-1213880c9a10 1123 b5b418fc-0f16-4cde-81a1-1213880c9a10 - ~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~^^^
    2025-08-31T20:42:44.061100+00:00 ubuntu-cloudron-16gb-nbg1-3 syslog.js[970341]: <30>1 2025-08-31T20:42:44Z ubuntu-cloudron-16gb-nbg1-3 b5b418fc-0f16-4cde-81a1-1213880c9a10 1123 b5b418fc-0f16-4cde-81a1-1213880c9a10 - IndexError: list index out of range

-

@SansGuidon I'm using Syncthing. I've not hit this issue in that my disk space isn't running out, but perhaps that's just because I've got quite a big disk and I recently cleaned up a load of Nextcloud stuff to give me lots more space because my disk was running out!

Where do I look to check if this issue is indeed affecting me after all? Thanks

-

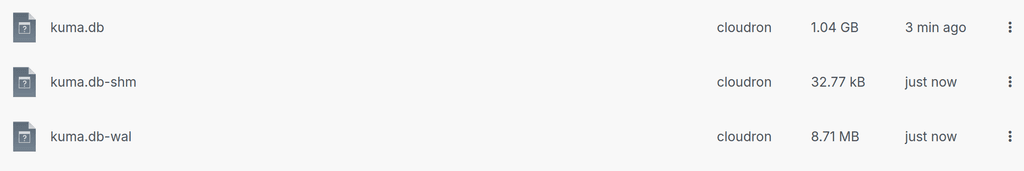

From a deeper investigation, syslog is exploding (GBs/day) because Cloudron’s backup job dumps full SQLite DBs (e.g. Kuma’s heartbeat table) to stdout, which gets swallowed by journald/rsyslog. One backup run = ~500MB of SQL spam in syslog in my case. Four runs/day = 2GB+/day at least, but it could be more depending on the setup. I just triggered a backup now and it grew by almost 2GB.

    root@ubuntu-cloudron-16gb-nbg1-3:~# grep -nE "CREATE TABLE \[heartbeat\]|INSERT INTO heartbeat|BEGIN TRANSACTION" /var/log/syslog | head -10
    1152:2025-08-31T21:00:37.705303+00:00 ubuntu-cloudron-16gb-nbg1-3 d6750120460b[1123]: BEGIN TRANSACTION;
    1153:2025-08-31T21:00:37.705386+00:00 ubuntu-cloudron-16gb-nbg1-3 d6750120460b[1123]: CREATE TABLE [heartbeat](#015
    1162:2025-08-31T21:00:37.705789+00:00 ubuntu-cloudron-16gb-nbg1-3 d6750120460b[1123]: INSERT INTO heartbeat VALUES(1,1,1,1,'200 - OK','2025-03-27 23:26:53.602',566,0,0);
    1163:2025-08-31T21:00:37.705828+00:00 ubuntu-cloudron-16gb-nbg1-3 d6750120460b[1123]: INSERT INTO heartbeat VALUES(2,0,1,1,'200 - OK','2025-03-27 23:27:54.295',167,60,0);
    1164:2025-08-31T21:00:37.705864+00:00 ubuntu-cloudron-16gb-nbg1-3 d6750120460b[1123]: INSERT INTO heartbeat VALUES(3,0,1,1,'200 - OK','2025-03-27 23:28:54.506',247,60,0);
    1165:2025-08-31T21:00:37.705930+00:00 ubuntu-cloudron-16gb-nbg1-3 d6750120460b[1123]: INSERT INTO heartbeat VALUES(4,0,1,1,'200 - OK','2025-03-27 23:29:54.801',441,60,0);
    1166:2025-08-31T21:00:37.705973+00:00 ubuntu-cloudron-16gb-nbg1-3 d6750120460b[1123]: INSERT INTO heartbeat VALUES(5,0,1,1,'200 - OK','2025-03-27 23:30:55.259',200,60,0);
    1167:2025-08-31T21:00:37.706010+00:00 ubuntu-cloudron-16gb-nbg1-3 d6750120460b[1123]: INSERT INTO heartbeat VALUES(6,0,1,1,'200 - OK','2025-03-27 23:31:55.486',162,60,0);
    1168:2025-08-31T21:00:37.706033+00:00 ubuntu-cloudron-16gb-nbg1-3 d6750120460b[1123]: INSERT INTO heartbeat VALUES(7,0,1,1,'200 - OK','2025-03-27 23:32:55.691',161,60,0);
    1169:2025-08-31T21:00:37.706057+00:00 ubuntu-cloudron-16gb-nbg1-3 d6750120460b[1123]: INSERT INTO heartbeat VALUES(8,0,1,1,'200 - OK','2025-03-27 23:33:55.899',129,60,0);

I'm interested to know if someone can validate this observation on another Cloudron instance, ideally with an existing and long-running Kuma instance:

Reproduction path

- Install Uptime Kuma on Cloudron

- Trigger a backup

- Watch /var/log/syslog: you’ll see CREATE TABLE heartbeat + endless INSERT lines

Root Cause

Backup script calls sqlite3 .dump → stdout → journald → rsyslog → syslog file. Logging pipelines aren’t designed for multi-hundred MB database dumps.

Impact

- /var/log/syslog bloats to multi-GB

- Disk space wasted, logrotate churn

- Actual logs are drowned in noise

Fix?

- Don’t stream .dump to stdout. Redirect to file, or use .backup. Silence the dump in logs?
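To check how much of a given syslog file is SQL dump output, a small sketch along these lines may help. The function name `sql_dump_share` is hypothetical, and the patterns are a generalisation of the grep used above (they match any `BEGIN TRANSACTION`, `CREATE TABLE` or `INSERT INTO` line, not only the heartbeat table), so other apps that log SQL would be counted too.

```shell
# Report how many lines and bytes of a log file look like SQL dump statements.
# $1: path to the log file. Byte counts are approximate (characters + newline).
sql_dump_share() {
    awk '
        { total += length($0) + 1 }
        /BEGIN TRANSACTION|CREATE TABLE|INSERT INTO/ {
            sql += length($0) + 1
            lines++
        }
        END {
            pct = (total > 0) ? 100 * sql / total : 0
            printf "%.0f SQL lines, %.0f of %.0f bytes (%.1f%%)\n", lines, sql, total, pct
        }
    ' "$1"
}
```

Running `sql_dump_share /var/log/syslog` before and after a backup should show whether the backup window is what fills the file.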

-

@SansGuidon good sleuthing. I don't currently have an instance of Uptime Kuma running so can't assist but hopefully others can.

-

That is some good investigation indeed. I tried to reproduce this, but given that Cloudron isn't using syslog as such at all, I am not sure how to reproduce this and what makes it log to syslog in your case. But maybe I am missing something obvious or have you somehow adjusted the docker configs around logging on that instance?

-

I've no idea. My setup seems to use journald, which could be a default and the root cause of such issues:

    root@ubuntu-cloudron-16gb-nbg1-3:~# docker info | grep 'Logging Driver'
    Logging Driver: journald

Am I alone with this setup? I have no memory of configuring this behavior for the logging driver.

-

@SansGuidon said in After Ubuntu 22/24 Upgrade syslog getting spammed and grows way to much clogging up the diskspace:

I alone with this setup?

Nope. I seem to have the same:

    root@Ubuntu-2204-jammy-amd64-base ~ # docker info | grep 'Logging Driver'
    Logging Driver: journald

-

Ah no, that is correct. Sorry, what I meant is that Cloudron task or app related logs should not show up in the default syslog as such, like when you would run

    journalctl -f

However, you should have a cloudron-syslog daemon running. Check with

    systemctl status cloudron-syslog

That one would dump the corresponding logs into the correct places in

    /home/yellowtent/platformdata/logs/...

So I am still curious how it ends up in

    /var/log/syslog

and then why it would log db dump data there.

-

Thanks for your feedback, @nebulon

I'm not sure why, but Cloudron created my app containers with Docker’s syslog log driver. Those containers write their stdout/stderr straight into the host’s rsyslog, which in turn writes to /var/log/syslog.

So when an app (Uptime Kuma in my case) runs a huge sqlite3 .dump during a Cloudron task/backup, that dump goes to stdout → syslog → /var/log/syslog, ballooning the file by GBs. This is not journald forwarding (it’s disabled). Cloudron’s own cloudron-syslog also logs per-app to /home/yellowtent/platformdata/logs/…, so right now there’s duplication.

I’m not looking for a local workaround; I’d like Cloudron to confirm the intent here and provide a platform fix.

Below, the findings and some questions/proposals to pursue

Dockerd default vs. container reality

    systemctl show docker -p ExecStart
    # ... --log-driver=journald ...

    docker ps -a -q | xargs -r -I{} docker inspect {} \
      --format '{{.Name}} {{.HostConfig.LogConfig.Type}}' | sort -u
    # ~80 containers → all: syslog

The daemon default is journald, but all existing containers are syslog (likely from when they were created).

Not journald → syslog; it’s Docker → rsyslog

    grep -n 'ForwardToSyslog' /etc/systemd/journald.conf
    # ForwardToSyslog=no

journald isn’t forwarding.

Rsyslog is writing everything to /var/log/syslog

    grep -nH . /etc/rsyslog.d/50-default.conf | sed -n '8,12p'
    # *.*;auth,authpriv.none -/var/log/syslog

Cloudron syslog collector is active (so we have duplicate paths)

    systemctl status cloudron-syslog
    # active (running)
    ls /home/yellowtent/platformdata/logs/
    # per-app log dirs + syslog.sock present

The big spill: SQL dump text in logs exactly at backup window

    root@ubuntu-cloudron-16gb-nbg1-3:~# grep -nE 'BEGIN TRANSACTION|CREATE TABLE \[heartbeat\]|INSERT INTO heartbeat' /var/log/syslog | head -3
    1152:2025-08-31T21:00:37.705303+00:00 ubuntu-cloudron-16gb-nbg1-3 d6750120460b[1123]: BEGIN TRANSACTION;
    1153:2025-08-31T21:00:37.705386+00:00 ubuntu-cloudron-16gb-nbg1-3 d6750120460b[1123]: CREATE TABLE [heartbeat](#015
    1162:2025-08-31T21:00:37.705789+00:00 ubuntu-cloudron-16gb-nbg1-3 d6750120460b[1123]: INSERT INTO heartbeat VALUES(1,1,1,1,'200 - OK','2025-03-27 23:26:53.602',566,0,0);

And Cloudron task timeline around the same minute:

    root@ubuntu-cloudron-16gb-nbg1-3:~# grep -n '2025-08-31T21:0' /home/yellowtent/platformdata/logs/box.log | sed -n '1,40p'
    9200:2025-08-31T21:00:00.014Z box:janitor Cleaning up expired tokens
    9201:2025-08-31T21:00:00.016Z box:eventlog cleanup: pruning events. creationTime: Mon Jun 02 2025 21:00:00 GMT+0000 (Coordinated Universal Time)
    9202:2025-08-31T21:00:00.054Z box:locks write: current locks: {"backup_task":null}
    9203:2025-08-31T21:00:00.054Z box:locks acquire: backup_task
    9204:2025-08-31T21:00:00.054Z box:janitor Cleaned up 0 expired tokens
    9205:2025-08-31T21:00:00.166Z box:tasks startTask - starting task 7053 with options {"timeout":86400000,"nice":15,"memoryLimit":1024,"oomScoreAdjust":-999}. logs at /home/yellowtent/platformdata/logs/tasks/7053.log
    9206:2025-08-31T21:00:00.168Z box:shell tasks /usr/bin/sudo -S -E /home/yellowtent/box/src/scripts/starttask.sh 7053 /home/yellowtent/platformdata/logs/tasks/7053.log 15 1024 -999
    9207:2025-08-31T21:00:00.249Z box:shell Running as unit: box-task-7053.service; invocation ID: fa4cf334a41b43fc9e06d6612bf5a9c1
    9209:2025-08-31T21:00:00.395Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9210:2025-08-31T21:00:10.288Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9211:2025-08-31T21:00:20.321Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9212:2025-08-31T21:00:30.367Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9213:2025-08-31T21:00:40.579Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9214:2025-08-31T21:00:50.457Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9215:2025-08-31T21:01:00.455Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9216:2025-08-31T21:01:10.350Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9217:2025-08-31T21:01:20.413Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9218:2025-08-31T21:01:30.407Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9219:2025-08-31T21:01:40.367Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9220:2025-08-31T21:01:50.352Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9221:2025-08-31T21:02:00.390Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9222:2025-08-31T21:02:10.709Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9223:2025-08-31T21:02:11.024Z box:shell system: swapon --noheadings --raw --bytes --show=type,size,used,name
    9224:2025-08-31T21:02:20.338Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9225:2025-08-31T21:02:30.311Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9226:2025-08-31T21:02:40.300Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9227:2025-08-31T21:02:50.308Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9228:2025-08-31T21:03:00.406Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9229:2025-08-31T21:03:10.269Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9230:2025-08-31T21:03:20.363Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9231:2025-08-31T21:03:30.265Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9232:2025-08-31T21:03:40.281Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9233:2025-08-31T21:03:50.312Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9234:2025-08-31T21:04:00.321Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9235:2025-08-31T21:04:10.284Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9236:2025-08-31T21:04:20.357Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9237:2025-08-31T21:04:30.242Z box:apphealthmonitor app health: 31 running / 0 stopped / 0 unresponsive
    9238:2025-08-31T21:04:30.281Z box:shell Finished with result: success
    9245:2025-08-31T21:04:30.288Z box:shell Service box-task-7053 finished with exit code 0
    9247:2025-08-31T21:04:30.289Z box:tasks startTask: 7053 completed with code 0

Questions / Suggestions

- Is syslog the intended log driver for app containers?

  Dockerd on my host now runs with --log-driver=journald, but all app containers remain on syslog unless re-created.

- Platform-level fix proposals (any/all):

  - Migrate app containers to journald on updates/repairs so they inherit the daemon default (no /var/log/syslog involvement).

  - Ensure task/backup helpers don’t emit large dumps to stdout (redirect to files/pipes consumed by cloudron-syslog, not rsyslog).

  - Ship an rsyslog drop-in that stops Docker-originated container stdout from landing in /var/log/syslog, since Cloudron already captures per-app logs under /home/yellowtent/platformdata/logs/.

This would prevent another GB-scale blow-up when an app emits a lot to stdout during backups or maintenance.

What do you think, @nebulon?

Thanks in advance

-

So the docker daemon itself using journald via

    --log-driver=journald

is correct. It is also correct that the containers which are managed and started by Cloudron will have syslog in the LogConfig of the HostConfig. It should also mention the syslog-address being

    unix://home/yellowtent/platformdata/logs/syslog.sock

From what I can see in your post this all looks correct and as intended.

Thus, none of the docker containers should log to journald or rsyslogd. At least, that is the case if they were created by Cloudron itself, which sets those options.

Given that this is Uptime Kuma, which in turn is just using sqlite, this led me to https://git.cloudron.io/platform/box/-/blob/master/src/services.js?ref_type=heads#L933 which indeed starts a container without specifying the Cloudron log driver configs. So that is probably one thing we should fix.

This, however, would still mean the GBs of SQL dump logs just end up in another place. So the main issue to fix is that

    sqlite3 app.db .dump

which is run to create the SQL dump, also somehow logs to stdout/stderr despite redirecting stdout to the dump file, and that ends up in the logs somehow. I haven't found a fix yet, but I wanted to share the investigation here.

-

In the meantime, the problem still persists, it seems:

    root@ubuntu-cloudron-16gb-nbg1-3:~# du -sh /var/log/syslog*
    15G /var/log/syslog
    26G /var/log/syslog.1
    0 /var/log/syslog.1.gz-2025083120.backup
    52K /var/log/syslog.2.gz
    4.0K /var/log/syslog.3.gz
    4.0K /var/log/syslog.4.gz

Disk graph shows

    docker 25.9 GB
    docker-volumes 7.79 GB
    /apps.swap 4.29 GB
    platformdata 3.77 GB
    boxdata 58.34 MB
    maildata 233.47 kB
    Everything else (Ubuntu, etc) 48.67 GB

    root@ubuntu-cloudron-16gb-nbg1-3:~# truncate -s 0 /var/log/syslog
    root@ubuntu-cloudron-16gb-nbg1-3:~# truncate -s 0 /var/log/syslog.1

After truncating the logs (see above), I reclaim the disk space, but I really need to work on a more effective patch / housekeeping job to prevent this.

    This disk contains:
    docker 25.9 GB
    docker-volumes 8.02 GB
    /apps.swap 4.29 GB
    platformdata 3.8 GB
    boxdata 57.93 MB
    maildata 233.47 kB
    Everything else (Ubuntu, etc) 7.62 GB

I would also love it if the Cloudron disk usage view were a graph, like for CPU and Memory. Maybe it's already planned for Cloudron 9; otherwise, should I mention that idea in a new thread, @nebulon?
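As a stopgap for the housekeeping job mentioned above, here is a minimal sketch that truncates a log file only once it exceeds a size limit, using the same `truncate -s 0` as in the manual cleanup. The function name `truncate_if_over` and any threshold passed to it are hypothetical, and truncating discards the log content, so this is a safety net rather than a fix.

```shell
# Truncate a file in place when it is larger than a byte limit.
# $1: path to the file, $2: maximum size in bytes.
# Prints what it did; returns non-zero if $1 is not a regular file.
truncate_if_over() {
    tio_file="$1"
    tio_max="$2"
    if [ ! -f "$tio_file" ]; then
        echo "not a regular file: $tio_file" >&2
        return 1
    fi
    # Arithmetic expansion strips any padding that wc may add.
    tio_size=$(( $(wc -c < "$tio_file") ))
    if [ "$tio_size" -gt "$tio_max" ]; then
        truncate -s 0 "$tio_file"
        echo "truncated $tio_file (was $tio_size bytes)"
    else
        echo "kept $tio_file ($tio_size bytes)"
    fi
}
```

It could be called from a root cron entry, for example `truncate_if_over /var/log/syslog 5368709120` for a 5 GiB limit. Size-based rotation in logrotate is probably the better long-term home for such a limit.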

-

Hello @SansGuidon

You mean the disk usage as a historical statistic and not only a singular point when checking?

If this is what you mean, no that is not part of Cloudron 9 at the moment.

But in my opinion, a very welcome feature request after Cloudron 9 is released!

-

@james Exactly, the idea is to be able to notice if something weird is happening (like disk usage growing constantly at a rapid rate).

I'll make a proposal in a separate thread -> Follow up in https://forum.cloudron.io/topic/14292/add-historical-disk-usage-in-system-info-graphs-section

-

@SansGuidon afaik, Cloudron does not log anything to syslog. Did you happen to check what was inside that massive syslog file? In one of our production cloudrons (running for almost a decade):

    $ du -sh /var/log/syslog*
    5.1M /var/log/syslog
    6.6M /var/log/syslog.1
    800K /var/log/syslog.2.gz
    796K /var/log/syslog.3.gz
    812K /var/log/syslog.4.gz

-

Hi @joseph

    root@ubuntu-cloudron-16gb-nbg1-3:~# du -sh /var/log/syslog*
    8.2G /var/log/syslog
    0 /var/log/syslog.1
    0 /var/log/syslog.1.gz-2025083120.backup
    52K /var/log/syslog.2.gz
    4.0K /var/log/syslog.3.gz
    4.0K /var/log/syslog.4.gz

As mentioned earlier in the discussion, it's due to SQLite backup dumps of Uptime Kuma which end up in the wrong place.

    root@ubuntu-cloudron-16gb-nbg1-3:~# grep 'INSERT INTO' /var/log/syslog | wc -l
    47237303

And I think @nebulon had started investigating this.

This generates a few GBs worth of waste per day on my Cloudron instance, which causes regular outages (every few weeks).

-

For now, as a workaround, I'm applying this patch. Please advise if you have any concerns with it:

    diff --git a/box/src/services.js b/box/src/services.js
    --- a/box/src/services.js
    +++ b/box/src/services.js
    @@ -1,7 +1,7 @@
     'use strict';
     exports = module.exports = {
         getServiceConfig,
         listServices,
         getServiceStatus,
    @@ -308,7 +308,7 @@ async function backupSqlite(app, options) {
         // we use .dump instead of .backup because it's more portable across sqlite versions
         for (const p of options.paths) {
             const outputFile = path.join(paths.APPS_DATA_DIR, app.id, path.basename(p, path.extname(p)) + '.sqlite');
             // we could use docker exec but it may not work if app is restarting
             const cmd = `sqlite3 ${p} ".dump"`;
             const runCmd = `docker run --rm --name=sqlite-${app.id} \
                 --net cloudron \
                 -v ${volumeDataDir}:/app/data \
                 --label isCloudronManaged=true \
    -            --read-only -v /tmp -v /run ${app.manifest.dockerImage} ${cmd} > ${outputFile}`;
    +            --log-driver=none \
    +            --read-only -v /tmp -v /run ${app.manifest.dockerImage} ${cmd} > ${outputFile} 2>/dev/null`;
             await shell.bash(runCmd, { encoding: 'utf8' });
         }
     }

-

@SansGuidon I think @nebulon investigated and could not reproduce. We also run Uptime Kuma. Our logs are fine. Have you enabled backups inside Uptime Kuma or something else by any chance?

    root@my:~# docker ps | grep uptime
    cb00714073cb cloudron/louislam.uptimekuma.app:202508221422060000 "/app/pkg/start.sh" 2 weeks ago Up 2 weeks ee6e4628-c370-4713-9cb6-f1888c32f8fb
    root@my:~# du -sh /var/log/syslog*
    352K /var/log/syslog
    904K /var/log/syslog.1
    116K /var/log/syslog.2.gz
    112K /var/log/syslog.3.gz
    112K /var/log/syslog.4.gz
    108K /var/log/syslog.5.gz
    112K /var/log/syslog.6.gz
    108K /var/log/syslog.7.gz
    root@my:~# grep 'INSERT INTO' /var/log/syslog | wc -l
    0

-

FWIW, our db is pretty big too.

@SansGuidon the command is just

    sqlite3 ${p} ".dump"

and it is redirected to a file. Do you have any idea why this would log SQL commands to syslog? I can't reproduce this by running the command manually.

-

@joseph I don't see any special setting in UptimeKuma being applied in my instance. Can you try to reproduce with those instructions below? Hope that makes sense

Ensure your default logdriver is journald:

    systemctl show docker -p ExecStart

Should show something like

    ExecStart={ path=/usr/bin/dockerd ; argv[]=/usr/bin/dockerd -H fd:// --log-driver=journald --exec-opt native.cgroupdriver=cgroupfs --storage-driver=overlay2 --experimental --ip6tables --use>

Then try to mimic what backupSqlite() does (no log driver; redirect only outside docker run):

    docker run --rm alpine sh -lc 'for i in $(seq 1 3); do echo "INSERT INTO t VALUES($i);"; done' > /tmp/out.sql

Observe duplicates got logged to syslog anyway:

    grep 'INSERT INTO t VALUES' /var/log/syslog | wc -l   # > 0
    cat /tmp/out.sql | wc -l                              # same 3 lines

Now repeat with logging disabled (what the fix does):

    docker run --rm --log-driver=none alpine sh -lc 'for i in $(seq 1 3); do echo "INSERT INTO t VALUES($i);"; done' > /tmp/out2.sql
    grep 'INSERT INTO t VALUES' /var/log/syslog | wc -l   # unchanged
