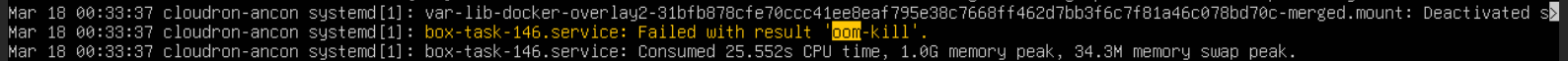

Task 'mvvolume.sh' dies from oom-kill during move of data-directory from local drive to NFS share

-

Additional output from

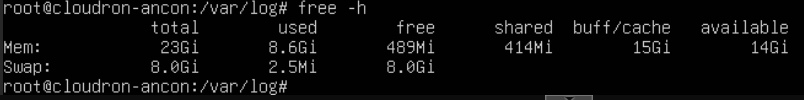

dmesgaround the oom-kill below. It looks like the process is getting up to ~11Gb before being killed, but even that should fit on the box with no issues based on the free memory. The system is running in a VM, so I could assign it more overall RAM to see if that helps, but would prefer not to restart everything in the middle of the week to increase this.[106869.999957] cp invoked oom-killer: gfp_mask=0x100cca(GFP_HIGHUSER_MOVABLE), order=0, oom_score_adj=0 [106869.999964] CPU: 7 PID: 1054475 Comm: cp Not tainted 6.8.0-55-generic #57-Ubuntu [106869.999967] Hardware name: QEMU Standard PC (i440FX + PIIX, 1996), BIOS rel-1.16.3-0-ga6ed6b701f0a-prebuilt.qemu.org 04/01/2014 [106869.999968] Call Trace: [106869.999969] <TASK> [106869.999972] dump_stack_lvl+0x76/0xa0 [106869.999976] dump_stack+0x10/0x20 [106869.999978] dump_header+0x47/0x1f0 [106869.999981] oom_kill_process+0x118/0x280 [106869.999983] out_of_memory+0x103/0x350 [106869.999984] mem_cgroup_out_of_memory+0x145/0x170 [106869.999987] try_charge_memcg+0x6d6/0x7d0 [106870.000021] mem_cgroup_swapin_charge_folio+0x7d/0x160 [106870.000023] __read_swap_cache_async+0x215/0x2b0 [106870.000025] swap_cluster_readahead+0x192/0x330 [106870.000027] swapin_readahead+0x7f/0x110 [106870.000028] do_swap_page+0x281/0xad0 [106870.000030] ? __pte_offset_map+0x1c/0x1b0 [106870.000033] handle_pte_fault+0x17b/0x1d0 [106870.000034] __handle_mm_fault+0x654/0x800 [106870.000036] handle_mm_fault+0x18a/0x380 [106870.000037] do_user_addr_fault+0x1f4/0x670 [106870.000039] ? virtscsi_queuecommand+0x149/0x3c0 [106870.000042] exc_page_fault+0x83/0x1b0 [106870.000044] asm_exc_page_fault+0x27/0x30 [106870.000046] RIP: 0010:_copy_to_iter+0x98/0x590 [106870.000049] Code: 48 89 d9 31 f6 48 01 d7 48 01 f9 40 0f 92 c6 48 85 c9 0f 88 0a 01 00 00 48 85 f6 0f 85 01 01 00 00 0f 01 cb 48 89 d9 4c 89 ee <f3> a4 0f 1f 00 0f 01 ca 49 8b 54 24 08 49 89 de 49 8b 44 24 18 49 [106870.000050] RSP: 0018:ffffb5cf4fdaba50 EFLAGS: 00050246 [106870.000052] RAX: 0000000000071000 RBX: 0000000000001000 RCX: 0000000000001000 [106870.000053] RDX: 000000000008f000 RSI: ffff8aaa4d46b000 RDI: 00007367cc58e000 [106870.000054] RBP: ffffb5cf4fdabac8 R08: 0000000000000000 R09: 0000000000000000 [106870.000055] R10: 000000004948f000 R11: ffff8aaefce4f968 R12: ffffb5cf4fdabcb0 [106870.000055] R13: ffff8aaa4d46b000 R14: 0000000000000000 R15: 0000000000001000 [106870.000058] ? filemap_get_pages+0xa9/0x3b0 [106870.000061] copy_page_to_iter+0x9f/0x170 [106870.000063] filemap_read+0x227/0x470 [106870.000065] generic_file_read_iter+0xbb/0x110 [106870.000067] ext4_file_read_iter+0x63/0x210 [106870.000070] vfs_read+0x25c/0x390 [106870.000073] ksys_read+0x73/0x100 [106870.000075] __x64_sys_read+0x19/0x30 [106870.000077] x64_sys_call+0x1bf0/0x25a0 [106870.000079] do_syscall_64+0x7f/0x180 [106870.000081] ? handle_pte_fault+0x17b/0x1d0 [106870.000082] ? __handle_mm_fault+0x654/0x800 [106870.000084] ? rseq_get_rseq_cs+0x22/0x280 [106870.000086] ? rseq_ip_fixup+0x90/0x1f0 [106870.000088] ? count_memcg_events.constprop.0+0x2a/0x50 [106870.000090] ? irqentry_exit_to_user_mode+0x7b/0x260 [106870.000091] ? irqentry_exit+0x43/0x50 [106870.000092] ? clear_bhb_loop+0x15/0x70 [106870.000094] ? clear_bhb_loop+0x15/0x70 [106870.000095] ? clear_bhb_loop+0x15/0x70 [106870.000097] entry_SYSCALL_64_after_hwframe+0x78/0x80 [106870.000098] RIP: 0033:0x7367cc71ba61 [106870.000110] Code: 00 48 8b 15 b9 73 0e 00 f7 d8 64 89 02 b8 ff ff ff ff eb bd e8 40 c4 01 00 f3 0f 1e fa 80 3d e5 f5 0e 00 00 74 13 31 c0 0f 05 <48> 3d 00 f0 ff ff 77 4f c3 66 0f 1f 44 00 00 55 48 89 e5 48 83 ec [106870.000111] RSP: 002b:00007ffd2da52e78 EFLAGS: 00000246 ORIG_RAX: 0000000000000000 [106870.000112] RAX: ffffffffffffffda RBX: 0000000000100000 RCX: 00007367cc71ba61 [106870.000113] RDX: 0000000000100000 RSI: 00007367cc4ff000 RDI: 0000000000000004 [106870.000119] RBP: 00007ffd2da52f60 R08: 00000000ffffffff R09: 00007367cc5ff000 [106870.000119] R10: 0000000000000001 R11: 0000000000000246 R12: 0000000000000000 [106870.000120] R13: 0000000000000000 R14: 0000000000000000 R15: 0000000000000000 [106870.000121] </TASK> [106870.000123] memory: usage 1048576kB, limit 1048576kB, failcnt 786574 [106870.000124] swap: usage 35080kB, limit 9007199254740988kB, failcnt 0 [106870.000125] Memory cgroup stats for /system.slice/box-task-146.service: [106870.000145] anon 0 [106870.000146] file 1065398272 [106870.000146] kernel 8138752 [106870.000147] kernel_stack 229376 [106870.000147] pagetables 1585152 [106870.000148] sec_pagetables 0 [106870.000148] percpu 960 [106870.000148] sock 0 [106870.000149] vmalloc 0 [106870.000149] shmem 0 [106870.000150] zswap 0 [106870.000150] zswapped 0 [106870.000150] file_mapped 0 [106870.000151] file_dirty 1065353216 [106870.000151] file_writeback 200704 [106870.000152] swapcached 204800 [106870.000152] anon_thp 0 [106870.000152] file_thp 0 [106870.000153] shmem_thp 0 [106870.000153] inactive_anon 204800 [106870.000154] active_anon 0 [106870.000154] inactive_file 1065324544 [106870.000154] active_file 40960 [106870.000155] unevictable 0 [106870.000155] slab_reclaimable 5885024 [106870.000155] slab_unreclaimable 379336 [106870.000156] slab 6264360 [106870.000156] workingset_refault_anon 2653 [106870.000157] workingset_refault_file 70006 [106870.000157] workingset_activate_anon 272 [106870.000157] workingset_activate_file 30798 [106870.000170] workingset_restore_anon 272 [106870.000171] workingset_restore_file 20 [106870.000171] workingset_nodereclaim 0 [106870.000172] pgscan 935071 [106870.000172] pgsteal 894602 [106870.000173] pgscan_kswapd 0 [106870.000173] pgscan_direct 935071 [106870.000174] pgscan_khugepaged 0 [106870.000180] pgsteal_kswapd 0 [106870.000180] pgsteal_direct 894602 [106870.000181] pgsteal_khugepaged 0 [106870.000181] pgfault 28424 [106870.000182] pgmajfault 622 [106870.000183] pgrefill 466537803 [106870.000183] pgactivate 28389 [106870.000184] pgdeactivate 0 [106870.000184] pglazyfree 0 [106870.000185] pglazyfreed 0 [106870.000185] zswpin 0 [106870.000185] zswpout 0 [106870.000186] zswpwb 0 [106870.000186] thp_fault_alloc 0 [106870.000187] thp_collapse_alloc 0 [106870.000187] thp_swpout 0 [106870.000187] thp_swpout_fallback 0 [106870.000188] Tasks state (memory values in pages): [106870.000188] [ pid ] uid tgid total_vm rss rss_anon rss_file rss_shmem pgtables_bytes swapents oom_score_adj name [106870.000190] [1054414] 808 1054414 2882105 10976 0 10976 0 1343488 7840 0 node [106870.000192] [1054470] 808 1054470 4189 1472 0 1472 0 77824 288 0 sudo [106870.000195] [1054471] 0 1054471 1835 832 0 832 0 57344 64 0 mvvolume.sh [106870.000197] [1054474] 0 1054474 1734 640 0 640 0 53248 32 0 find [106870.000198] [1054475] 0 1054475 1924 512 0 512 0 53248 320 0 cp [106870.000199] oom-kill:constraint=CONSTRAINT_MEMCG,nodemask=(null),cpuset=box-task-146.service,mems_allowed=0,oom_memcg=/system.slice/box-task-146.service,task_memcg=/system.slice/box-task-146.service,task=node,pid=1054414,uid=808 [106870.000240] Memory cgroup out of memory: Killed process 1054414 (node) total-vm:11528420kB, anon-rss:0kB, file-rss:43904kB, shmem-rss:0kB, UID:808 pgtables:1312kB oom_score_adj:0 -

Looking at the source of the

.shfile here -https://git.cloudron.io/platform/box/-/blob/master/src/scripts/mvvolume.sh?ref_type=headsI'd guess that replacing these lines:find "${source_dir}" -maxdepth 1 -mindepth 1 -not -wholename "${target_dir}" -exec cp -ar '{}' "${target_dir}" \; find "${source_dir}" -maxdepth 1 -mindepth 1 -not -wholename "${target_dir}" -exec rm -rf '{}' \;with something along the lines of:

rsync -a --remove-source-files --exclude "${target_dir}" "${source_dir}/" "${target_dir}/"may end up being less of a resource hog.

I'll schedule an increase of RAM for the VM this weekend and reboot it all - see if that help.

-

G girish marked this topic as a question on

G girish marked this topic as a question on

-

I upped the VM memory allocation from 24Gb to 30Gb (the whole box has 32Gb, I wanted to leave a little for the hypervisor).

Same problem. Partway though the file copy the oom-kill steps in and spoils the party. The total -vm size reported by the oom-killer was a little larger - so maybe something is using a lot of RAM during that process - but I cannot for the life of me think what it would be.

Have ordered more physical RAM for the server, will add that in the next service window and re-try this to see if it helps.

What's odd is outside the VM, there is no evidence of that amount of memory usage. The Proxmox host itself tracks the memory usage and it's not changing significantly during that time, and the looking at the system monitoring inside the Ubuntu VM shows a peak memory usage of less than 10Gb overall.

-

J joseph has marked this topic as solved on

-

-

Perhaps it was in another context than just RAM, like a name space, or temp I/O log that is usually short lived, except where it isn't.

This reminds me of the old days where copying floppies was inordinately slow since it copied only a few blocks at a time which had a lot of context switches, until someone introduced the xcopy algorythm where as much of the source that could be was read into memory at once then written to the destination at once, minimizing block copies and the back and forth context switching. Later it was improved further to not use so much memory and do nice large chunks, optimizing reads, writes of the drives (heat, errors) and time.

If the code could copy in predefined chunks, then the memory will be bound and not fill up for larger runs.

-

Perhaps it was in another context than just RAM, like a name space, or temp I/O log that is usually short lived, except where it isn't.

This reminds me of the old days where copying floppies was inordinately slow since it copied only a few blocks at a time which had a lot of context switches, until someone introduced the xcopy algorythm where as much of the source that could be was read into memory at once then written to the destination at once, minimizing block copies and the back and forth context switching. Later it was improved further to not use so much memory and do nice large chunks, optimizing reads, writes of the drives (heat, errors) and time.

If the code could copy in predefined chunks, then the memory will be bound and not fill up for larger runs.

@robi said in Task 'mvvolume.sh' dies from oom-kill during move of data-directory from local drive to NFS share:

If the code could copy in predefined chunks, then the memory will be bound and not fill up for larger runs.

in this situation atleast, the code is executing the

cptool Strange behavior really.

Strange behavior really. -

@robi said in Task 'mvvolume.sh' dies from oom-kill during move of data-directory from local drive to NFS share:

If the code could copy in predefined chunks, then the memory will be bound and not fill up for larger runs.

in this situation atleast, the code is executing the

cptool Strange behavior really.

Strange behavior really.@girish said in Task 'mvvolume.sh' dies from oom-kill during move of data-directory from local drive to NFS share:

in this situation atleast, the code is executing the cp tool Strange behavior really.

That's the issue: See here https://serverfault.com/questions/156431/copying-large-directory-with-cp-fills-memory

-

@girish said in Task 'mvvolume.sh' dies from oom-kill during move of data-directory from local drive to NFS share:

in this situation atleast, the code is executing the cp tool Strange behavior really.

That's the issue: See here https://serverfault.com/questions/156431/copying-large-directory-with-cp-fills-memory

@robi interesting link. I haven't found why it takes so much memory though, do you happen to have any information on this?

Do you think it just loads all the files into memory and works of a massive list one by one? If that's the case, yeah... this is a problem . No easy fix other than rolling our own cp it seems.

-

@robi interesting link. I haven't found why it takes so much memory though, do you happen to have any information on this?

Do you think it just loads all the files into memory and works of a massive list one by one? If that's the case, yeah... this is a problem . No easy fix other than rolling our own cp it seems.

@girish Here's what I found:

https://www.qwant.com/?q=long+run+copies+via+cp+causing+oom+memory

Various OOM workarounds:

https://github.com/rfjakob/earlyoom

https://github.com/hakavlad/nohang

https://github.com/facebookincubator/oomd

https://gitlab.freedesktop.org/hadess/low-memory-monitor/

https://github.com/endlessm/eos-boot-helper/tree/master/psi-monitorUnderstanding OOM Score adjustment:

https://last9.io/blog/understanding-the-linux-oom-killer/Possible LowFree issue:

https://bugzilla.redhat.com/show_bug.cgi?id=536734Parallel vs sequential copy:

https://askubuntu.com/questions/1471139/fuse-zip-using-cp-reported-running-out-of-virtual-memoryAnd lastly, since you use stdio as output, perhaps it fills that up somehow. If it were redirected to disk it might be different.

That might also enable resuming a failed restore.

-

I got data lost due to a weird find commands in the shell script; wonder why not to use rsync

-

@joseph said in Task 'mvvolume.sh' dies from oom-kill during move of data-directory from local drive to NFS share:

I think rsync requires a server, no?

?

No, not even over SSH. There’s a daemon mode and it can work as a server but it’s not required -

@necrevistonnezr I guess you mean the rsync binary. Sure that will work "locally" but the real benefit of rsync (protocol) comes from having a server component. This server component will not be used with nfs mounts (the topic of this thread). If you want to use rsync just locally, there are also other tools you can use.

I don't have any context more than that one sentence though

I was just replying in passing.

I was just replying in passing.

Hello! It looks like you're interested in this conversation, but you don't have an account yet.

Getting fed up of having to scroll through the same posts each visit? When you register for an account, you'll always come back to exactly where you were before, and choose to be notified of new replies (either via email, or push notification). You'll also be able to save bookmarks and upvote posts to show your appreciation to other community members.

With your input, this post could be even better 💗

Register Login