@nebulon It would be awesome if Cloudron could integrate the 'Suite Numérique' apps! In France, this suite is gaining a lot of traction and is being rolled out everywhere. Being able to deploy it easily with Cloudron would be a massive advantage. I’m not sure how feasible it is, or whether all these apps are suitable for Cloudron packaging, but it would definitely be a game-changer.

Benoit

Posts

-

Docs - Alternative to Notion / Outline with OIDC, GDPR compliant, PDF Export (with template) etc...

-

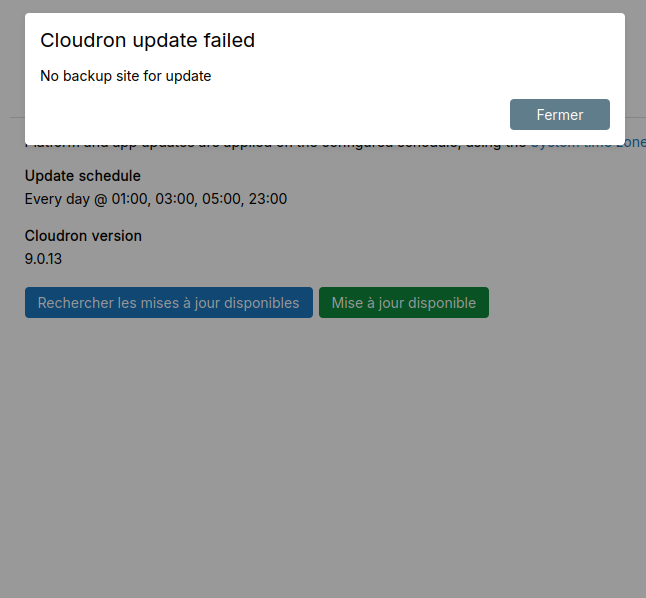

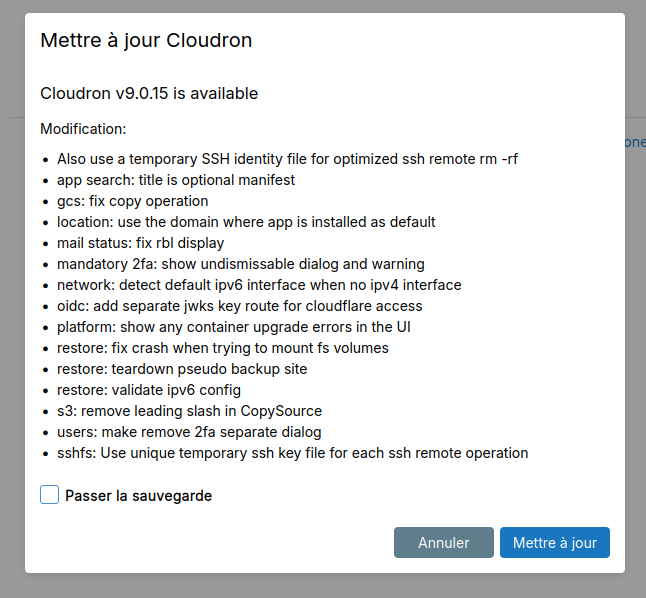

Issue with app updates when using Box Storage backups

I solved my problem by updating Cloudron to version 9.0.17. There’s no longer any need to run a backup before a manual app update.

-

Issue with app updates when using Box Storage backups

Hi @jdaviescoates, I tried re-pasting the private key, but it still doesn’t work on my side. I also rebooted Cloudron, and nothing changed.

-

Issue with app updates when using Box Storage backups

-

Issue with app updates when using Box Storage backups

Hi everyone,

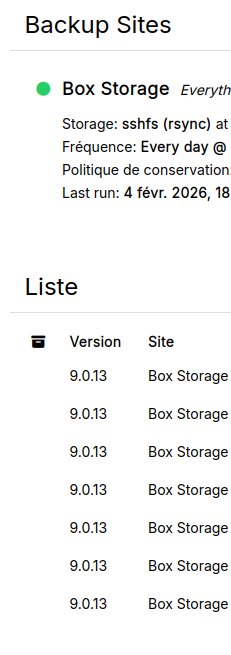

I’d like to report a strange issue that seems to be related to using Box Storage as the backup provider.

On multiple Cloudron instances where Box Storage is configured, I’ve noticed that manual app updates don’t complete properly. The update process appears to start, but the app only restarts and the update is not applied.

However, if I select “Skip backup” before launching the update, it works as expected. This behavior has been consistent across every Cloudron installation I’ve tested that uses Box Storage for backups.

Has anyone else encountered this issue, or could someone from the team try to reproduce it? It really feels like the update process is getting stuck or aborted during the backup step when Box Storage is involved.

Thanks in advance for your help!

Cloudron version: 9.0.15

Ubuntu version: Ubuntu 24.04.1 LTS, Linux 6.8.0-90-generic

-

Extremely slow backups to Hetzner Storage Box (rsync & tar.gz) – replacing MinIO used on a dedicated Cloudron

Many thanks for your support! I’m restarting my backups right away with the correct configuration. I’ll keep you posted on the backup speed, which should be significantly improved now that this configuration issue is fixed.

-

Extremely slow backups to Hetzner Storage Box (rsync & tar.gz) – replacing MinIO used on a dedicated Cloudron

Thanks a lot for your replies. I'm letting the backups finish and I'm contacting Cloudron support in the meantime.

@nebulon I don't use subaccounts because I know it's risky. I have subfolders in /home/backups and no prefix.

Do you have a lot of these lines too?

    Jan 07 11:05:38 at ChildProcess.<anonymous> (/home/yellowtent/box/src/shell.js:82:23)
    Jan 07 11:05:38 at ChildProcess.emit (node:events:519:28)
    Jan 07 11:05:38 at maybeClose (node:internal/child_process:1101:16)
    Jan 07 11:05:38 at ChildProcess._handle.onexit (node:internal/child_process:304:5) {
    Jan 07 11:05:38 reason: 'Shell Error',
    Jan 07 11:05:38 details: {},
    Jan 07 11:05:38 stdout: <Buffer >,
    Jan 07 11:05:38 stdoutLineCount: 0,
    Jan 07 11:05:38 stderr: <Buffer 2f 75 73 72 2f 62 69 6e 2f 63 70 3a 20 63 61 6e 6e 6f 74 20 73 74 61 74 20 27 73 6e 61 70 73 68 6f 74 2f 61 70 70 5f 66 63 31 64 33 36 33 38 2d 62 66 ... 54 more bytes>,
    Jan 07 11:05:38 stderrLineCount: 1,
    Jan 07 11:05:38 code: 1,
    Jan 07 11:05:38 signal: null,
    Jan 07 11:05:38 timedOut: false,
    Jan 07 11:05:38 terminated: false
    Jan 07 11:05:38 }
    Jan 07 11:05:38 box:storage/filesystem SSH remote copy failed, trying sshfs copy

-
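The `stderr: <Buffer ...>` field in these log lines is Node.js printing raw bytes in hex, cut off after the first 50 bytes (hence `... 54 more bytes`). A small Python sketch can turn such a dump back into readable text; `decode_buffer_dump` is a hypothetical helper, and the truncated tail cannot be recovered from the log.

```python
import re


def decode_buffer_dump(log_fragment: str) -> str:
    """Decode the first non-empty '<Buffer xx xx ...>' dump found in a log fragment.

    Only the bytes actually printed are decoded; anything Node.js elided
    as '... N more bytes' is lost.
    """
    # Match one or more lowercase hex byte pairs right after '<Buffer '.
    match = re.search(r"<Buffer ((?:[0-9a-f]{2} ?)+)", log_fragment)
    if match is None:
        raise ValueError("no non-empty <Buffer ...> dump found")
    # bytes.fromhex ignores the spaces between pairs.
    return bytes.fromhex(match.group(1)).decode("utf-8", errors="replace")
```

Applied to the dump above, it returns `/usr/bin/cp: cannot stat 'snapshot/app_fc1d3638-bf`, the same kind of `cp` error quoted in the original report. The 09:48 dump decodes to `/usr/bin/cp: cannot stat 'numsol-/snapshot/app_740` and the 15:12 one to `/usr/bin/cp: cannot stat 'snapshot/app_5ed6495a-86`.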

Extremely slow backups to Hetzner Storage Box (rsync & tar.gz) – replacing MinIO used on a dedicated Cloudron

I've attempted multiple backups, but the results are still terrible. In my opinion, the Storage Box isn't production-ready for Cloudron. Even the delta sync with rsync takes forever. Case in point: a backup has been running for 6 hours and is only at 57% of 77 GB. I'm moving away from this solution to look for alternatives.

In all my logs I find these lines, which mark the point where the backup starts taking forever:

    Jan 07 09:48:36 at ChildProcess.<anonymous> (/home/yellowtent/box/src/shell.js:82:23)
    Jan 07 09:48:36 at ChildProcess.emit (node:events:519:28)
    Jan 07 09:48:36 at maybeClose (node:internal/child_process:1101:16)
    Jan 07 09:48:36 at ChildProcess._handle.onexit (node:internal/child_process:304:5) {
    Jan 07 09:48:36 reason: 'Shell Error',
    Jan 07 09:48:36 details: {},
    Jan 07 09:48:36 stdout: <Buffer >,
    Jan 07 09:48:36 stdoutLineCount: 0,
    Jan 07 09:48:36 stderr: <Buffer 2f 75 73 72 2f 62 69 6e 2f 63 70 3a 20 63 61 6e 6e 6f 74 20 73 74 61 74 20 27 6e 75 6d 73 6f 6c 2d 2f 73 6e 61 70 73 68 6f 74 2f 61 70 70 5f 37 34 30 ... 62 more bytes>,
    Jan 07 09:48:36 stderrLineCount: 1,
    Jan 07 09:48:36 code: 1,
    Jan 07 09:48:36 signal: null,
    Jan 07 09:48:36 timedOut: false,
    Jan 07 09:48:36 terminated: false
    Jan 07 09:48:36 }
    Jan 07 09:48:36 box:storage/filesystem SSH remote copy failed, trying sshfs copy

-

Extremely slow backups to Hetzner Storage Box (rsync & tar.gz) – replacing MinIO used on a dedicated Cloudron

@nebulon I just stopped the backup at 55%! I checked the hardlinks box. I have a VM backup scheduled in an hour, so I've rescheduled the Storage Box backup for tonight; we'll see tomorrow if it goes through. I'll keep you posted.

-

Extremely slow backups to Hetzner Storage Box (rsync & tar.gz) – replacing MinIO used on a dedicated Cloudron

@jdaviescoates No progress since my last message; it's still stuck at 32%, and this is what I see in the logs:

    Jan 06 15:12:30 box:syncer finalize: patching in integrity information into /home/yellowtent/platformdata/backup/03513981-96d3-4130-832d-ff15d8a124fc/5ed6495a-866e-4899-9d1d-437bafe6473a.sync.cache
    Jan 06 15:12:30 box:backuptask upload: path snapshot/app_5ed6495a-866e-4899-9d1d-437bafe6473a site 03513981-96d3-4130-832d-ff15d8a124fc uploaded: {"transferred":117746128,"size":117746128,"fileCount":8678}
    Jan 06 15:12:30 box:tasks updating task 21170 with: {"percent":31.76923076923078,"message":"Uploading integrity information to snapshot/app_5ed6495a-866e-4899-9d1d-437bafe6473a.backupinfo (matomo.monapp.fr)"}
    Jan 06 15:12:30 box:backupupload upload completed. error: null
    Jan 06 15:12:30 box:backuptask runBackupUpload: result - {"result":{"stats":{"transferred":117746128,"size":117746128,"fileCount":8678},"integrity":{"signature":"a7be7fd4df5bf668148d531a015dbbc37f4ade8da8d130800050b9e563f2694b20f1923c2f76c7467ad42fe8a0c9431e0ac747a906fe9b855058414ec2d3f70b"}}}
    Jan 06 15:12:30 box:backuptask uploadAppSnapshot: matomo.monapp.fr uploaded to snapshot/app_5ed6495a-866e-4899-9d1d-437bafe6473a. 690.868 seconds
    Jan 06 15:12:30 box:backuptask backupAppWithTag: rotating matomo.monapp.fr snapshot of 03513981-96d3-4130-832d-ff15d8a124fc to path 2026-01-06-114408-065/app_matomo.monapp.fr_v1.53.2
    Jan 06 15:12:30 box:tasks updating task 21170 with: {"percent":31.76923076923078,"message":"Copying /mnt/managedbackups/03513981-96d3-4130-832d-ff15d8a124fc/snapshot/app_5ed6495a-866e-4899-9d1d-437bafe6473a to /mnt/managedbackups/03513981-96d3-4130-832d-ff15d8a124fc/2026-01-06-114408-065/app_matomo.monapp.fr_v1.53.2"}
    Jan 06 15:12:30 box:shell filesystem: ssh -o "StrictHostKeyChecking no" -i /tmp/identity_file_-mnt-managedbackups-03513981-96d3-4130-832d-ff15d8a124fc -p 23 user@user.your-storagebox.de cp -aR snapshot/app_5ed6495a-866e-4899-9d1d-437bafe6473a 2026-01-06-114408-065/app_matomo.monapp.fr_v1.53.2
    Jan 06 15:12:31 box:shell filesystem: ssh -o "StrictHostKeyChecking no" -i /tmp/identity_file_-mnt-managedbackups-03513981-96d3-4130-832d-ff15d8a124fc -p 23 user@user.your-storagebox.de cp -aR snapshot/app_5ed6495a-866e-4899-9d1d-437bafe6473a 2026-01-06-114408-065/app_matomo.monapp.fr_v1.53.2 errored BoxError: ssh exited with code 1 signal null
    Jan 06 15:12:31 at ChildProcess.<anonymous> (/home/yellowtent/box/src/shell.js:82:23)
    Jan 06 15:12:31 at ChildProcess.emit (node:events:519:28)
    Jan 06 15:12:31 at maybeClose (node:internal/child_process:1101:16)
    Jan 06 15:12:31 at ChildProcess._handle.onexit (node:internal/child_process:304:5) {
    Jan 06 15:12:31 reason: 'Shell Error',
    Jan 06 15:12:31 details: {},
    Jan 06 15:12:31 stdout: <Buffer >,
    Jan 06 15:12:31 stdoutLineCount: 0,
    Jan 06 15:12:31 stderr: <Buffer 2f 75 73 72 2f 62 69 6e 2f 63 70 3a 20 63 61 6e 6e 6f 74 20 73 74 61 74 20 27 73 6e 61 70 73 68 6f 74 2f 61 70 70 5f 35 65 64 36 34 39 35 61 2d 38 36 ... 54 more bytes>,
    Jan 06 15:12:31 stderrLineCount: 1,
    Jan 06 15:12:31 code: 1,
    Jan 06 15:12:31 signal: null,
    Jan 06 15:12:31 timedOut: false,
    Jan 06 15:12:31 terminated: false
    Jan 06 15:12:31 }
    Jan 06 15:12:31 box:storage/filesystem SSH remote copy failed, trying sshfs copy
    Jan 06 15:12:31 box:shell filesystem: cp -aR /mnt/managedbackups/03513981-96d3-4130-832d-ff15d8a124fc/snapshot/app_5ed6495a-866e-4899-9d1d-437bafe6473a /mnt/managedbackups/03513981-96d3-4130-832d-ff15d8a124fc/2026-01-06-114408-065/app_matomo.monapp.fr_v1.53.2

-
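The log above gives enough numbers to estimate how fast the matomo.monapp.fr upload actually went. A worked sketch in Python, assuming the `690.868 seconds` printed by `uploadAppSnapshot` is the duration of that upload; `rate_mb_per_s` is a hypothetical helper.

```python
def rate_mb_per_s(transferred_bytes: int, seconds: float) -> float:
    """Average transfer rate in megabytes (10**6 bytes) per second."""
    return transferred_bytes / seconds / 1_000_000


# Figures copied from the matomo.monapp.fr lines of the log above.
stats = {"transferred": 117746128, "size": 117746128, "fileCount": 8678}
elapsed_s = 690.868  # assumed: upload duration reported by uploadAppSnapshot

rate = rate_mb_per_s(stats["transferred"], elapsed_s)
files_per_s = stats["fileCount"] / elapsed_s
avg_file_kb = stats["size"] / stats["fileCount"] / 1000

print(f"{rate:.2f} MB/s, {files_per_s:.1f} files/s, {avg_file_kb:.1f} kB per file")
# prints: 0.17 MB/s, 12.6 files/s, 13.6 kB per file
```

Roughly 0.17 MB/s for about 8,700 files averaging 13.6 kB suggests that per-file overhead, rather than raw bandwidth, may dominate this upload; that is an inference from the averages, not something the log states.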

Extremely slow backups to Hetzner Storage Box (rsync & tar.gz) – replacing MinIO used on a dedicated Cloudron

@jdaviescoates I started the backup 2.5 hours ago, and it is currently at 32% of a little over 200 GB to back up with rsync (first full backup).

I did not enable hardlinks.

Since this Storage Box will be used to back up multiple Cloudron instances, I left the setting at 10 simultaneous uploads.

-
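The "simultaneous uploads" setting mentioned above caps how many transfers run in parallel. A minimal Python sketch of such a cap, using a thread pool whose `max_workers` is the limit; `run_uploads` and the sleep standing in for a transfer are hypothetical, not Cloudron's code.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor
from typing import List, Tuple


def run_uploads(paths: List[str], limit: int = 10) -> Tuple[List[str], int]:
    """Upload every path with at most `limit` uploads in flight.

    Returns the uploaded paths (in input order) and the highest number of
    uploads observed running at the same time.
    """
    in_flight = 0
    peak = 0
    lock = threading.Lock()

    def upload_one(path: str) -> str:
        nonlocal in_flight, peak
        with lock:
            in_flight += 1
            peak = max(peak, in_flight)
        time.sleep(0.01)  # hypothetical stand-in for the network transfer
        with lock:
            in_flight -= 1
        return path

    # The pool never runs more than `limit` workers at once.
    with ThreadPoolExecutor(max_workers=limit) as pool:
        done = list(pool.map(upload_one, paths))
    return done, peak
```

The sketch models a single instance. Assuming each Cloudron applies its own cap, several instances backing up to the same Storage Box at the same time could open a multiple of that number of connections.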

Extremely slow backups to Hetzner Storage Box (rsync & tar.gz) – replacing MinIO used on a dedicated Cloudron

Hi,

I’m experiencing extremely slow Cloudron backups to a Hetzner Storage Box, regardless of the backup format (rsync or tar.gz, encryption disabled).

Context

- Backup target: Hetzner Storage Box (SSH/rsync)
- Tested formats: rsync and tar.gz
- Encryption: disabled
- Nextcloud apps excluded
- Goal: move away from MinIO, which I previously ran on a dedicated Cloudron, and use Storage Box instead

Observed behavior

The upload phase completes, but backups then stall for a long time during the rotation / copy phase. I consistently see SSH copy errors followed by a fallback to sshfs:

    box:backuptask uploadAppSnapshot: <APP_DOMAIN> uploaded to snapshot/app_<APP_ID>
    box:backuptask backupAppWithTag: rotating snapshot to timestamped backup
    box:shell filesystem: ssh ... cp -aR snapshot/app_<APP_ID> <BACKUP_PATH>
    /usr/bin/cp: cannot stat 'snapshot/app_<APP_ID>'
    box:storage/filesystem SSH remote copy failed, trying sshfs copy
    box:shell filesystem: cp -aR /mnt/managedbackups/<CLOUDRON_ID>/snapshot/app_<APP_ID> \
      /mnt/managedbackups/<CLOUDRON_ID>/<TIMESTAMP>/app_<APP_NAME>_v<APP_VERSION>

After this, backups often stay at the same percentage for 20–30+ minutes, sometimes much longer, even though CPU and RAM usage are low.
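The log lines above show a two-step strategy for rotating a snapshot: first a `cp -aR` run on the storage server over SSH, then, if that exits non-zero, a `cp -aR` through the local sshfs mount. In the quoted output the first step fails with `cannot stat 'snapshot/app_<APP_ID>'`, meaning `cp` found no such path relative to the directory the SSH command runs in. The Python sketch below models only that control flow; it is not Cloudron's implementation, and `rotate_snapshot`, `default_run` and the `run` hook are hypothetical names. It shows why the fallback is expensive: a remote `cp` copies data on the server itself, while `cp` through an sshfs mount reads every file over the network and writes it back.

```python
import subprocess
from typing import Callable, List

# A runner takes an argv list and returns the process exit code.
Runner = Callable[[List[str]], int]


def default_run(cmd: List[str]) -> int:
    """Run a command without a shell and return its exit code."""
    return subprocess.run(cmd).returncode


def rotate_snapshot(host: str, port: int, identity: str, mount: str,
                    src: str, dst: str, run: Runner = default_run) -> str:
    """Copy src to dst on the backup target and return which method worked.

    src and dst are relative paths: the remote copy resolves them against
    the SSH session's starting directory, the fallback against `mount`.
    """
    remote_cmd = ["ssh", "-o", "StrictHostKeyChecking no", "-i", identity,
                  "-p", str(port), host, "cp", "-aR", src, dst]
    if run(remote_cmd) == 0:
        return "remote"  # data never left the storage server

    # Fallback: cp reads each byte over SFTP and writes it back over SFTP.
    local_cmd = ["cp", "-aR", f"{mount}/{src}", f"{mount}/{dst}"]
    if run(local_cmd) != 0:
        raise RuntimeError(f"both remote and sshfs copy of {src} failed")
    return "sshfs"
```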

System

- Cloudron: 9.0.13
- OS: Ubuntu 24.04.1 LTS (kernel 6.8)
- VM: 4 vCPU, ~21 GB RAM
- Hypervisor: QEMU

Questions

- Is Hetzner Storage Box a recommended/supported backend for Cloudron backups?
- Is this SSH `cp -aR` failure, followed by the sshfs fallback, expected?
- Could this explain the very long backup times?
- Are there recommended settings (hardlinks, concurrency, format) when using a Storage Box?

Thanks for any guidance.
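On the hardlinks question above: with rsync-format backups, each snapshot is rotated into a timestamped directory (the `cp -aR` step in the log). A hardlink-based copy instead gives every file a second name pointing at the same data, so no file contents are rewritten. The Python sketch below shows that technique with `shutil.copytree(..., copy_function=os.link)`; whether Cloudron's hardlinks setting works exactly this way is an assumption, and `hardlink_tree` is a hypothetical name.

```python
import os
import shutil


def hardlink_tree(src: str, dst: str) -> None:
    """Recreate src's directory tree at dst, hardlinking every file.

    Directories are created as new entries; files are not copied, each one
    just gains a second name (same inode, link count + 1).
    """
    # copytree calls copy_function(src_file, dst_file); os.link fits that shape.
    shutil.copytree(src, dst, copy_function=os.link)
```

The rotated copy costs almost no extra space or I/O. The trade-off is that hardlinks only work within a single filesystem, and over a network mount they also depend on the server supporting them.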

-

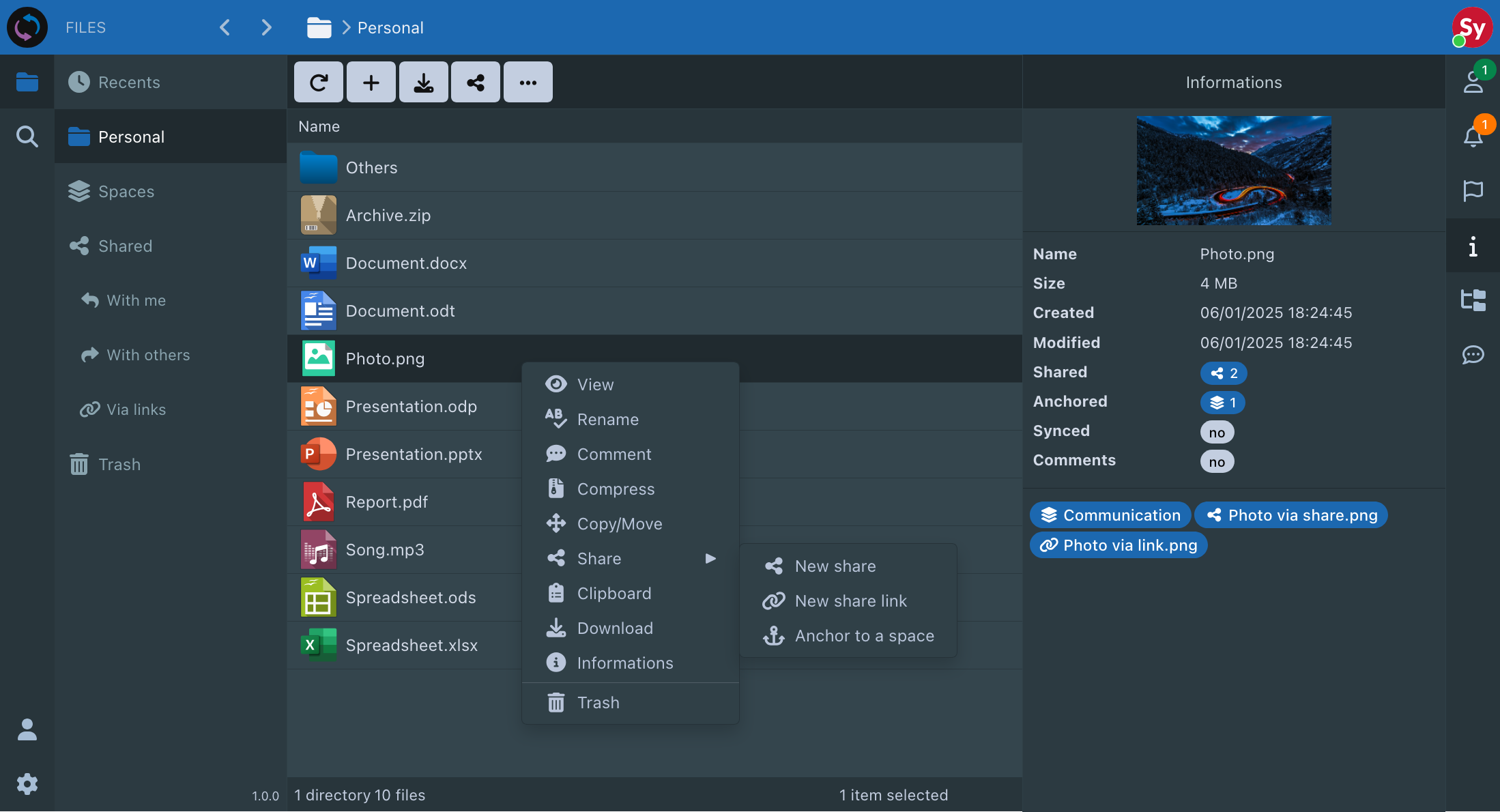

Sync In: Your data belongs to you

- Title: Sync In
- Main Page: https://sync-in.com/
- Git: https://github.com/Sync-in
- Licence: AGPL
- Docker: Yes
- Demo: https://sync-in.com/docs/demo
- Summary: Sync-in is your open source platform to sync, share, and collaborate securely. Manage your data freely, privately, and with no compromise.
- Notes:
  - Open Source
  - Data Sovereignty
  - Secure By Design
  - Scalable & Reliable
  - Efficiency
  - Available Anywhere
  - Collaborative Spaces
  - Synchronization
  - Shares
  - Guests & Personal Groups
  - Activity Tracking
  - Advanced File Manager
  - Smart Search
  - Real-time Collaborative Editing
  - WebDAV Integration
- **Alternative to**: Nextcloud / ownCloud
- Screenshots:

-

Cannot Restore Nextcloud Backups via rsync to MinIO – fsmetadata.json ENOENT Error

OK, thanks @nebulon, I'll write to support.

-

Cannot Restore Nextcloud Backups via rsync to MinIO – fsmetadata.json ENOENT Error

Yes, there is no fsmetadata.json on MinIO. I can only restore the oldest backup without errors. All the others fail, always with the same error. Naturally, when I retry the task, Nextcloud ends up either empty or in an error state.

-

Cannot Restore Nextcloud Backups via rsync to MinIO – fsmetadata.json ENOENT Error

@nebulon said in Cannot Restore Nextcloud Backups via rsync to MinIO – fsmetadata.json ENOENT Error:

> /home/yellowtent/appsdata/

Yes, it is.

-

Cannot Restore Nextcloud Backups via rsync to MinIO – fsmetadata.json ENOENT Error

@BrutalBirdie Yes, I know, and we're starting the OS upgrades. Yes, the backups contain files, but not all of them, because of rsync.

@nebulon It seems fsmetadata.json doesn't exist... I tried a new manual backup and fsmetadata.json was not created, yet there was no error during the backup process.

-

Cannot Restore Nextcloud Backups via rsync to MinIO – fsmetadata.json ENOENT Error

Hello, I’m encountering an issue with my Nextcloud backups via rsync to an unencrypted MinIO on a Cloudron server. I’m unable to restore a backup properly; I always get the same error on one file:

External Error: Error loading fsmetadata.json: ENOENT: no such file or directory, open '/home/yellowtent/appsdata/appid/fsmetadata.json'

The only backup I’ve been able to restore is the very first (oldest) one from a month ago… How can I fix this? Thank you for your help!

Cloudron v8.3.1 (Ubuntu 20.04.4 LTS)
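To see which backups are affected, a quick scan can list every app backup directory that lacks `fsmetadata.json`. This Python sketch assumes a local copy (or mount) of the backup bucket laid out as `<root>/<timestamp>/app_<...>/fsmetadata.json`, mirroring the `2026-01-06-114408-065/app_<name>_v<version>` paths seen in Cloudron logs; where exactly the file sits inside a backup is an assumption, and `missing_fsmetadata` is a hypothetical helper.

```python
from pathlib import Path
from typing import List


def missing_fsmetadata(root: str) -> List[str]:
    """Return '<timestamp>/app_*' directories under root without fsmetadata.json.

    Assumes (hypothetically) the layout <root>/<timestamp>/app_<...>/fsmetadata.json.
    """
    base = Path(root)
    missing = []
    for app_dir in sorted(base.glob("*/app_*")):
        if app_dir.is_dir() and not (app_dir / "fsmetadata.json").is_file():
            missing.append(app_dir.relative_to(base).as_posix())
    return missing
```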

-

Nextcloud Error : redis problem

Thank you, @joseph! Great! But how were you able to restart Redis? When I tried, I could only put it in recovery mode. I had renamed the file from dump.rdb to dump.rdb.corrupt and then tried restarting Redis, but it didn’t work. I must have missed a step. Thanks again!

-

Nextcloud Error : redis problem

@joseph I tried copying the dump.rdb file from `/home/yellowtent/platformdata/redis/<app_id>` to `/home/yellowtent/appsdata/<app_id>` (with the app stopped), and it doesn't work... I also tried putting the app in recovery mode, but that fails too, with many messages like this:

    box:services Attempt 6 failed. Will retry: Error waiting for redis-<ID>. Status code: 200 message: process not running
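When a Redis dump is suspected to be corrupt (as with the `dump.rdb` / `dump.rdb.corrupt` files mentioned above), a quick first check is whether the file even starts with the RDB header: the ASCII magic `REDIS` followed by a four-digit format version. This Python sketch checks only that header and says nothing about the rest of the file; for a full check, Redis ships the `redis-check-rdb` tool. `rdb_header_version` is a hypothetical helper.

```python
from typing import Optional


def rdb_header_version(path: str) -> Optional[int]:
    """Return the RDB format version if the file starts with a valid header, else None."""
    with open(path, "rb") as f:
        header = f.read(9)
    magic, version = header[:5], header[5:9]
    # A valid dump begins with b"REDIS" followed by four ASCII digits, e.g. b"0011".
    if magic == b"REDIS" and len(version) == 4 and version.isdigit():
        return int(version.decode("ascii"))
    return None
```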