Hello @halkhamis

If you want to use an external database with FreeScout, you will have to fork the FreeScout package and change it to support one.

It sounds like you want enterprise and high-availability features for software that is not highly available in the first place, so a highly available database offers nothing when the application itself is down.

Cloudron also provides security and encryption for the database, as well as backup and restore for the app and the database itself, but not point-in-time recovery (PITR).

james

Posts

-

Environment File Resets After Application Restart -

Community Apps

Hermes Agent

| Detail | Link / Info |
| --- | --- |
| Wishlist topic | Wishlist Topic |
| Author | @andreasdueren |
| Repository | Repository |
| Install | CloudronVersions.json |

If you have questions or issues about this community app, please open a separate topic in the @community-apps category and link to this reply.

-

Kutt 3.0

Hello @poeti8

We did this 4 hours ago: https://forum.cloudron.io/post/124829

Also, @poeti8, I would like to confirm that you are indeed https://github.com/poeti8 so that we can add you to the Validated App Maintainer group, which signals to users that you are who you say you are.

Unfortunately, you used a different email address in our forum than the one on your website, so I could not confirm it myself.

-

Environment File Resets After Application Restart

Hello @halkhamis

Cloudron manages all requirements for its apps itself.

There is no need for an external database.

Can you elaborate on why you want to use an external database?

-

Environment File Resets After Application Restart

Hello @halkhamis

Changing the database environment variables is currently not supported; they are set on every start of the app.

Why do you want to change the database connection?

-

Environment File Resets After Application Restart

Hello @halkhamis

Changes made to the .env file should persist after application restarts.

Yes and No.

It depends on the environment variables you are looking to override.

Certain variables are configured by Cloudron on every app restart to ensure the app keeps working. For example, these variables are always set: https://git.cloudron.io/packages/freescout-app/-/blob/master/start.sh?ref_type=heads#L51-L60

    echo "=> Set configs"
    crudini --set /app/data/env "" APP_URL "${CLOUDRON_APP_ORIGIN}"
    crudini --set /app/data/env "" APP_FORCE_HTTPS "true"
    crudini --set /app/data/env "" DB_CONNECTION "mysql"
    crudini --set /app/data/env "" DB_HOST "${CLOUDRON_MYSQL_HOST}"
    crudini --set /app/data/env "" DB_PORT "${CLOUDRON_MYSQL_PORT}"
    crudini --set /app/data/env "" DB_DATABASE "${CLOUDRON_MYSQL_DATABASE}"
    crudini --set /app/data/env "" DB_USERNAME "${CLOUDRON_MYSQL_USERNAME}"
    crudini --set /app/data/env "" DB_PASSWORD "${CLOUDRON_MYSQL_PASSWORD}"
    crudini --set /app/data/env "" APP_DISABLE_UPDATING "true"

Also, when Cloudron user management was chosen at install time, the following configuration is always set: https://git.cloudron.io/packages/freescout-app/-/blob/master/start.sh?ref_type=heads#L116-L139

    if [[ -n "${CLOUDRON_OIDC_ISSUER:-}" ]]; then
        echo "=> Configure OIDC"
        OAUTH_PROVIDERS=$(gosu cloudron php <<'EOF'
    <?php
    echo addslashes(
        serialize(
            [
                [
                    'active' => 1,
                    'default' => 1,
                    'provider' => "oauth",
                    'name' => getenv("CLOUDRON_OIDC_PROVIDER_NAME") ?? "Cloudron",
                    'id' => "cloudron",
                    'client_id' => getenv("CLOUDRON_OIDC_CLIENT_ID"),
                    'client_secret' => getenv("CLOUDRON_OIDC_CLIENT_SECRET"),
                    'auth_url' => getenv("CLOUDRON_OIDC_AUTH_ENDPOINT"),
                    'token_url' => getenv("CLOUDRON_OIDC_TOKEN_ENDPOINT"),
                    'user_url' => getenv("CLOUDRON_OIDC_PROFILE_ENDPOINT"),
                    'user_method' => "POST",
                    'proxy' => "",
                    'mapping' => "",
                    'scopes' => "openid profile email"
                ]
            ]
        )
    );
    EOF
    )

So without knowing exactly which variables you are trying to change, I can only assume they are among the ones Cloudron sets by default.
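As a rough sketch (this is not part of the FreeScout package), a small shell function could list the keys that the linked start.sh rewrites on every start. It assumes you have a local copy of the script and that each managed key is set on its own line via crudini --set; the function name managed_keys is made up for illustration.

```shell
#!/usr/bin/env bash
# managed_keys is a hypothetical helper, not something Cloudron ships.
# It prints the keys that a start script rewrites through `crudini --set`.
# Assumption: each managed key is written on a single line in the form
#   crudini --set <file> "" KEY "value"
managed_keys() {
    local script="$1"
    # Whitespace-separated fields: crudini(1) --set(2) <file>(3) ""(4) KEY(5)
    awk '$1 == "crudini" && $2 == "--set" { print $5 }' "$script" | sort -u
}
```

Calling managed_keys start.sh against a copy of the script would print one key per line. Any key in that list is likely to be reset on the next restart, whatever you put in /app/data/env.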

-

WebSocket Connection Error

Hello @mononym

Is there something 'unique' about your Cloudron set up?

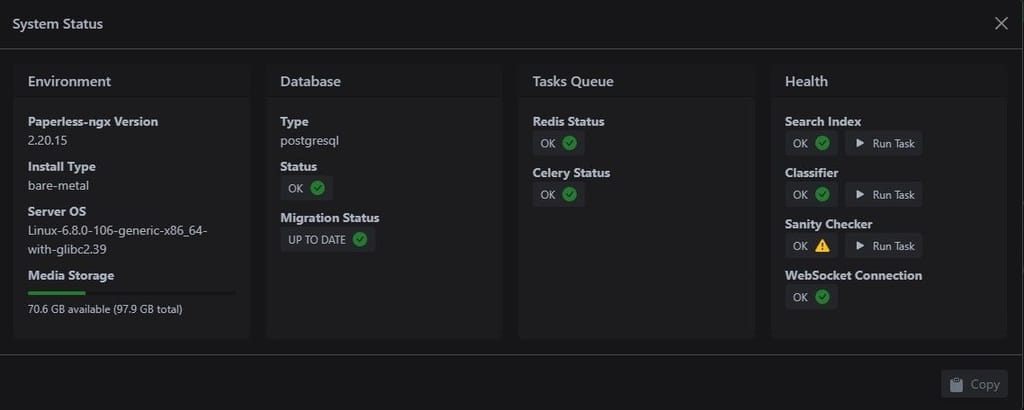

Please post the output of `cloudron-support --troubleshoot`.

I just tried to reproduce this, and the @paperless-ngx app shows this status:

    {
      "pngx_version": "2.20.15",
      "server_os": "Linux-6.8.0-106-generic-x86_64-with-glibc2.39",
      "install_type": "bare-metal",
      "storage": {
        "total": 105089261568,
        "available": 75854053376
      },
      "database": {
        "type": "postgresql",
        "url": "db03d44177398c497fbc6f4e8c08ee8092",
        "status": "OK",
        "error": null,
        "migration_status": {
          "latest_migration": "documents.1075_workflowaction_order",
          "unapplied_migrations": []
        }
      },
      "tasks": {
        "redis_url": "redis://redis-03d44177-398c-497f-bc6f-4e8c08ee8092:6379",
        "redis_status": "OK",
        "redis_error": null,
        "celery_status": "OK",
        "celery_url": "celery@03d44177-398c-497f-bc6f-4e8c08ee8092",
        "celery_error": null,
        "index_status": "OK",
        "index_last_modified": "2026-05-18T00:00:01.273615Z",
        "index_error": null,
        "classifier_status": "OK",
        "classifier_last_trained": "2026-05-18T08:05:01.763426Z",
        "classifier_error": null,
        "sanity_check_status": "OK",
        "sanity_check_last_run": "2026-05-17T00:30:01.353326Z",
        "sanity_check_error": null
      },
      "websocket_connected": "OK"
    }

-

Cloudron dashboard not responding - box and cloudron-firewall failing

Hello @flexplore

Please post the output of `cloudron-support --troubleshoot` and make sure you are not affected by https://forum.cloudron.io/topic/15442/ubuntu-24.04-kernel-6.8.0-110-regression-affecting-cloudron

-

IsoFlow on Cloudron - Isometric network and infrastructure diagrams

Hello @neoplex

I have added your community app to https://forum.cloudron.io/post/121148

-

Community Apps

IsoFlow

| Detail | Link / Info |
| --- | --- |
| Wishlist topic | Topic |
| Author | @neoplex |
| Repository | Repository |
| Install | CloudronVersions.json |

If you have questions or issues about this community app, please open a separate topic in the @community-apps category and link to this reply.

-

Cannot run app with BepInEx and ValheimPlus

Hello @overclockmp

Thanks for the detailed write-up. I will fix the package with your suggestions.

-

Use scheduler to run the job queue

Hello @dennisj

Apologies, I missed that.

By default, the @mediawiki package runs the following command every minute (`*/1 * * * *`):

    echo '==> Run cron job' && /usr/local/bin/gosu cloudron:cloudron /usr/bin/php /app/code/maintenance/runJobs.php --maxtime 50 --procs 1

See: https://git.cloudron.io/packages/mediawiki-app/-/blob/master/CloudronManifest.json?ref_type=heads#L29-L33

-

Use scheduler to run the job queue

Hello @dennisj

You can add your overrides in `/app/data/LocalSettings.php` as documented in https://docs.cloudron.io/packages/mediawiki#changing-permissions

-

Problems after activating the 2FA plugin

Hello @patmo.de

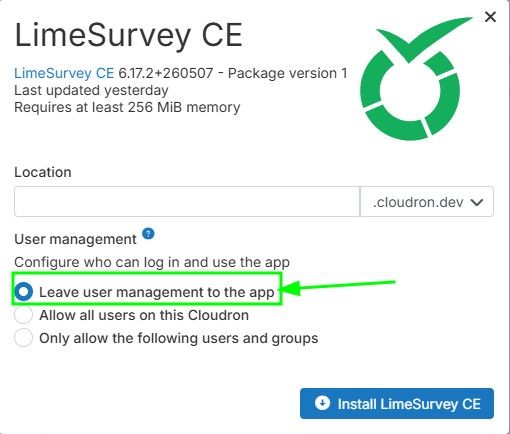

Please write topics in English to include everyone.

To disable LDAP completely for @limesurvey, you need to install the @limesurvey app with its own user management.

Otherwise, when "Allow all users on this Cloudron" is selected, every app restart will configure and activate the LDAP plugin again.

I understand that you already have a @limesurvey app installed.

To migrate your @limesurvey app to the "Leave user management to the app" version, follow these steps:

- create a backup of your current @limesurvey app

- download the backup configuration

- install a new @limesurvey app and choose "Leave user management to the app"

- when the new app is fully installed, go into the "Backups" settings of the new @limesurvey app, press "Import Backup", and then click "upload a Backup Config" to upload your previously downloaded config

- press "Import"

- the app then restores from your backup

- confirm everything is working in order

- you are done

-

Issues with ChangeDetection and UptimeKuma

Hello @anthony-tran

Maybe you can try to disable IPv6 and see if this resolves the issue.

If so, try to enable IPv6 again and see if the issue stays resolved or appears again.

-

Vaultwarden fails to start after update – DB migration error (SSO)

Hello @BetaBreak

Great to read that it resolved your issue.

Always happy to help.

If possible, please also upvote the solution I linked to improve the forum search.

-

Using OpenVPN on Cloudron as a client for other VPN services?

Hello @nottheend

- "route all traffic to the cloudron VPN endpoint via a third party VPN?"

The idea would be to only allow access to configured apps through the Cloudron VPN app or a custom VPN you can configure.

-

Issues with ChangeDetection and UptimeKuma

Hello @anthony-tran

Indeed, the IPv6 records exist.

    dig AAAA change.anthonytran.fr +short
    2001:1600:13:101::1e95
    dig AAAA my.anthonytran.fr +short
    2001:1600:13:101::1e95

So this is some DNS issue.
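To narrow this down, it can help to compare the answers seen on the server with the answers seen inside an affected app. Below is a minimal sketch of such a comparison; same_records is a hypothetical helper name, and it is only an assumption that dig is available inside the app container (for example in the app's web terminal).

```shell
#!/usr/bin/env bash
# same_records is a hypothetical helper: it succeeds when two
# newline-separated lists of DNS answers contain the same entries,
# regardless of their order.
same_records() {
    [ "$(printf '%s\n' "$1" | sort)" = "$(printf '%s\n' "$2" | sort)" ]
}

# Assumed usage (not run here): collect the answer in both places,
# then compare.
#   on_server=$(dig AAAA my.anthonytran.fr +short)
#   in_app=...   # output of the same dig command run inside the app
#   same_records "$on_server" "$in_app" && echo "same" || echo "different"
```

If the two answers differ, or the lookup inside the app returns nothing, that points at the resolver the app uses and not at the records themselves.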

If you run the `dig` commands from your Cloudron server and from inside the failing apps, do they resolve correctly?

-

Issues with ChangeDetection and UptimeKuma

Hello @anthony-tran

Which DNS provider are you using, and what exactly is the error message from the affected app?

-

Issues with ChangeDetection and UptimeKuma

Hello @anthony-tran

After the update to 9.1.7, have you tried restarting the server once?

If not, please try.