I was able to decrypt the `fsmetadata.json` with the command `cloudron backup decrypt`, so something seems to be broken with the CLI. I also made sure to update it to the current version.

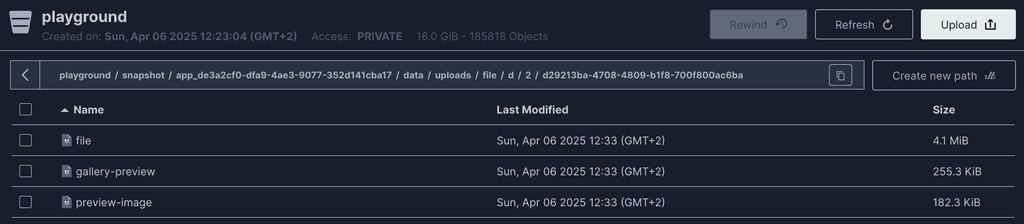

In the decrypted `fsmetadata.json` I found this section:

```json
"execFiles": [
  "./data/uploads/file/d/2/d29213ba-4708-4809-b1f8-700f800ac6ba/file",
  "./data/uploads/file/d/3/d3e8ee5e-7ea5-496d-95d6-c6ca4ccf9cc1/file",
  "./data/uploads/file/5/5/55ab31ec-5b88-483f-951f-e9623e8d4ff9/file",
  "./data/uploads/file/5/e/5e1dbb9a-db2c-4ca9-a8a7-a07ad05d19f9/file",
  "./data/uploads/file/5/1/516ea496-f931-45d4-97c9-99ff95c6cf9d/file",
  "./data/uploads/file/5/a/5a981f44-ff10-4b49-842b-cc63616d846c/file",
  "./data/uploads/file/5/3/532d9935-3eec-45c3-9469-b736ec86bdf5/file",
  "./data/uploads/file/2/d/2d582f11-046e-449a-a005-17fdf0a86579/file",
  "./data/uploads/file/2/d/2d4d72fe-87a6-4aef-8fdb-78cff8be20af/file",
  "./data/uploads/file/2/5/255ddaa6-0075-498f-a40f-67de3ef53c56/file",
  "./data/uploads/file/2/e/2e76a400-8220-4fc3-bdf2-683a4aa8afb0/file",
  "./data/uploads/file/2/6/26237f18-cde3-42b2-9ecc-5deca2fb68f2/file",
  "./data/uploads/file/2/6/26274aff-c005-4f4d-81ca-0ebd0920d45e/file",
  "./data/uploads/file/2/6/26c5e394-75c4-416e-8c23-3b6f70e840f5/file",
  "./data/uploads/file/2/6/26cc0a5f-d2a7-40a4-8d28-d53b031fe8a0/file",
  "./data/uploads/file/2/6/26cb69ad-ea82-4f12-8875-f37f3931d95c/file",
  "./data/uploads/file/2/4/243ccdea-b57e-4425-b533-c1e6c67109fb/file",
  "./data/uploads/file/2/9/29580126-7579-42fd-8f97-3892dd631d21/file",
  "./data/uploads/file/2/9/29fdcfbb-33c2-47f2-a13c-6dfae0aa0d64/file",
  "./data/uploads/file/e/2/e2eb9830-15ad-4a52-860b-8e3b10a558a5/file",
  "./data/uploads/file/e/e/ee5320b0-9b3c-4eac-99a1-3faef8a1b020/file",
  "./data/uploads/file/e/6/e60a8456-58c0-48ee-b082-23d7011213dc/file",
  "./data/uploads/file/e/6/e60a8456-58c0-48ee-b082-23d7011213dc/old-5",
  "./data/uploads/file/e/4/e4c62fcf-af7d-48bc-8774-9f81a89740b0/file",
  "./data/uploads/file/e/9/e9a8304b-6ff9-4f97-9d71-864396833475/file",
  "./data/uploads/file/e/9/e94109e2-5863-45e4-8e1b-45ac534ef125/file",
  "./data/uploads/file/e/0/e0c16fc6-2b2c-40db-a919-1912c0a1562d/file",
  "./data/uploads/file/1/6/16aa7a1a-26e6-47c0-aec9-b6af7ffe289d/file",
  "./data/uploads/file/1/b/1b941dca-50fe-46c6-bb72-146ed4c6a0d0/file",
  "./data/uploads/file/1/9/1976db36-ec69-46eb-ba56-e09dcde3d5aa/file",
  "./data/uploads/file/a/e/ae267001-e6c1-4b17-a431-3c9b375603fc/file",
  "./data/uploads/file/6/e/6e3a2379-ffa9-4926-9813-7a030f4e3f6d/file",
  "./data/uploads/file/6/9/69fd301e-26e8-4c6b-896d-f11b636a7cb6/file",
  "./data/uploads/file/6/0/6041fd0a-1aa6-4df2-9035-5b0149f37d19/file",
  "./data/uploads/file/6/7/67fecd3c-c1eb-489a-8bdd-57c78a5a8fb3/file",
  "./data/uploads/file/6/8/68bb454c-8edd-456b-8721-6929bbe95cb5/file",
  "./data/uploads/file/6/8/68d2a785-f6aa-4dd4-80ea-65fb09c474b8/file",
  "./data/uploads/file/b/d/bd36f035-e77b-4a96-9bb7-ddb65a5750b7/file",
  "./data/uploads/file/b/e/beca9a5b-52dc-43ba-a83e-5153e001057b/file",
  "./data/uploads/file/b/e/beefb3f9-13bd-42a4-9635-d6ffbe690cc4/file",
  "./data/uploads/file/b/c/bc6c802c-7937-4f28-9ff0-c081e6d152fb/file",
  "./data/uploads/file/b/9/b91796a9-14a2-4295-bcb0-c936f3c76e77/file",
  "./data/uploads/file/4/a/4a2b4b01-e945-4167-adf2-2338d312bd82/file",
  "./data/uploads/file/4/4/4493e6c2-eec2-48d6-ac39-063ac1a73e36/file",
  "./data/uploads/file/4/f/4fcdd7fe-ac5b-4fbf-9a7b-74e9eb6e8d51/file",
  "./data/uploads/file/f/2/f2027049-ebdb-4711-bc1e-41779f7ee270/file",
  "./data/uploads/file/f/2/f2c92a65-f186-4947-9279-22d0310b2b01/file",
  "./data/uploads/file/f/1/f190bce4-4238-45dd-b139-22a7ea27948d/file",
  "./data/uploads/file/f/4/f4c41dbf-1882-46cb-837b-15a31a8733a9/file",
  "./data/uploads/file/f/0/f0dee544-3b1e-442a-b441-7f8f036b5562/file",
  "./data/uploads/file/c/d/cda56b9a-67a8-4747-a759-55696c719122/file",
  "./data/uploads/file/c/5/c5f5d7ea-3554-4d05-89e7-4f514d55907d/file",
  "./data/uploads/file/c/a/caa0771e-f1fa-4778-b211-2529903fac92/file",
  "./data/uploads/file/c/9/c9f19e18-3ac9-4281-ae1d-915c1ae3ef08/file",
  "./data/uploads/file/9/6/96af3a57-12b9-49bb-96ed-c0a4b78f3137/file",
  "./data/uploads/file/9/6/962112d7-71f9-4023-aadb-25e3b9404297/file",
  "./data/uploads/file/0/d/0dadd978-eda1-43d4-9c45-21a92a5ee8a5/file",
  "./data/uploads/file/0/5/05972e67-fe2d-449b-a4e6-f7de08f9b0d1/file",
  "./data/uploads/file/0/a/0a2d0fa1-42dc-4b41-a5f4-182221ba7209/file",
  "./data/uploads/file/0/3/03a5bbe4-a228-478d-9775-3e32b8fb52b3/file",
  "./data/uploads/file/3/2/326bec47-8e46-424c-bff8-7ed2a9c8fcf5/file",
  "./data/uploads/file/3/e/3e5626f3-8fcd-46f1-9f16-0e3811d12050/file",
  "./data/uploads/file/3/e/3edbf99e-d675-4a00-b540-0b0df1f41051/file",
  "./data/uploads/file/3/f/3f5c1c60-d5f6-4bde-ba53-09bcf56ce738/file",
  "./data/uploads/file/3/c/3c80e130-01de-46c6-9d95-93147b98b145/file",
  "./data/uploads/file/3/c/3c80e130-01de-46c6-9d95-93147b98b145/old-4",
  "./data/uploads/file/7/2/72549e3c-c857-4f49-bc25-08e91804fc21/file",
  "./data/uploads/file/7/1/71dde4c2-261b-4333-82bf-d46e1fed81d6/file",
  "./data/uploads/file/7/c/7c8c02ac-5c38-4b85-9d74-a9e343b29d00/file",
  "./data/uploads/file/8/d/8d2f9876-f045-45a3-9014-b6e751d6fa87/file",
  "./data/uploads/file/8/5/856fc239-2a32-47d4-9734-4a5a21f23cd5/file",
  "./data/uploads/file/8/e/8e0aca86-4abb-458b-a5e7-8e889f5c1a63/file",
  "./data/uploads/file/8/f/8f30270d-7339-42c6-8019-7d2647530c00/file",
  "./data/uploads/file/8/3/83f431b4-15bb-4ccd-81c3-72051d4447ca/file",
  "./data/uploads/file/8/8/881409a4-fb7f-4af7-97bb-4cc144ba61e2/file"
],
```

If I understand this correctly, this marks all of these files as executable, which seems very strange to me. As far as I know, these are user-uploaded files that are not (and should not be) executed.
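My assumption (not confirmed in the Cloudron code) is that `execFiles` simply records which files had an execute bit set when the backup was taken, so that it can be restored later. If that is right, the real question is whether these uploads are actually executable on disk. Here is a small Python sketch to check that: it reads the decrypted `fsmetadata.json`, resolves each `execFiles` entry against the backed-up app directory (the directory that contains `data/`; which path that is on your server is something you have to fill in), and reports which entries really have an execute bit set. The script name, function names and arguments are hypothetical, just for illustration.

```python
import json
import os
import stat
import sys

# Any of the owner/group/other execute bits.
EXEC_BITS = stat.S_IXUSR | stat.S_IXGRP | stat.S_IXOTH


def load_exec_files(metadata_path):
    """Return the "execFiles" list from a decrypted fsmetadata.json ([] if the key is absent)."""
    with open(metadata_path, encoding="utf-8") as f:
        return json.load(f).get("execFiles", [])


def is_executable(path):
    """True if path is a regular file with at least one execute bit set."""
    mode = os.stat(path).st_mode
    return stat.S_ISREG(mode) and bool(mode & EXEC_BITS)


def check_exec_files(metadata_path, data_root):
    """Sort each execFiles entry into (executable, not_executable, missing).

    Entries such as "./data/uploads/..." are resolved relative to data_root,
    which is assumed to be the directory that contains "data/".
    """
    executable, not_executable, missing = [], [], []
    for rel_path in load_exec_files(metadata_path):
        full_path = os.path.normpath(os.path.join(data_root, rel_path))
        if not os.path.exists(full_path):
            missing.append(rel_path)
        elif is_executable(full_path):
            executable.append(rel_path)
        else:
            not_executable.append(rel_path)
    return executable, not_executable, missing


if __name__ == "__main__" and len(sys.argv) == 3:
    # Hypothetical usage: python3 check_exec_files.py fsmetadata.json /path/to/backed-up/app/dir
    exe, non_exe, gone = check_exec_files(sys.argv[1], sys.argv[2])
    print(f"{len(exe)} executable, {len(non_exe)} not executable, {len(gone)} missing")
    for rel_path in exe:
        print("  +x", rel_path)
```

If the uploads do show up as `+x` on the server, that would suggest the backup is just preserving permissions the app set when it stored the files, rather than the backup itself making them executable.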