We managed to deploy Prosody using Cloudron. Here are some notes which we hope might help. For us, Dino was an easier client to use than Kaidan.

Packaging Prosody 13.0.6 (XMPP) for Cloudron: what worked, what bit us

We packaged Prosody 13.0.6 as a Cloudron app at xmpp.example.com with LDAP auth, HTTP file upload, multi-device sync (carbons + MAM), MUC, and 1:1 audio/video via the turn addon. It scores 91% on compliance.conversations.im and passes the connect.xmpp.net TLS/connectivity checks.

The headline finding is good news that contradicts older guidance, so it comes first. After that, the writeup splits into three parts for three audiences: people who just want to run it, people packaging Prosody (or any multi-domain app) for Cloudron, and the Prosody developers.

Built on the shoulders of DerekJarvis/cloudron-prosody (a fork of SaraSmiseth/prosody). Thank you both.

Our packaging (the CloudronManifest, the start script, the cert layout, and the 13.0.6 pin described below) is published at palladium.wanderingmonster.dev/palladium-dragon/prosody-cloudron if you want to reuse or adapt it.

TL;DR: the six things worth knowing

Cloudron 9.x exposes per-alias TLS certs inside the container, at /etc/certs/<domain>.cert and /etc/certs/<domain>.key, not just the primary tls_cert.pem. This overturns the "primary-domain-only" reading of the tls addon docs and removes the old copy-certs-from-the-host hack for federating component subdomains.

Use simple JIDs (user@xmpp.example.com, where the app domain is the VirtualHost). This sidesteps the apex-cert problem and collapses four component subdomains down to one (conference.).

LDAP auth means clients must use SASL PLAIN (over TLS). Many clients disable PLAIN by default and then fail in a way that looks exactly like a wrong password.

A/V works server-side via the turn addon plus mod_turn_external (XEP-0215). The practical limiter is the client: XMPP A/V calling clients are Linux-desktop only today.

A handful of build traps (apt nightly-vs-stable, Podman, registry, core-module conflicts, ENTRYPOINT), with fixes below.

The health check needs a real 200. A 404 is treated as unhealthy.

(a) For people who just want to run it

JIDs are user@xmpp.example.com. Cloudron users log in with their Cloudron username (or email) as the JID localpart and their Cloudron password. There is nothing to configure inside the XMPP app itself; every Cloudron user is automatically an XMPP user.

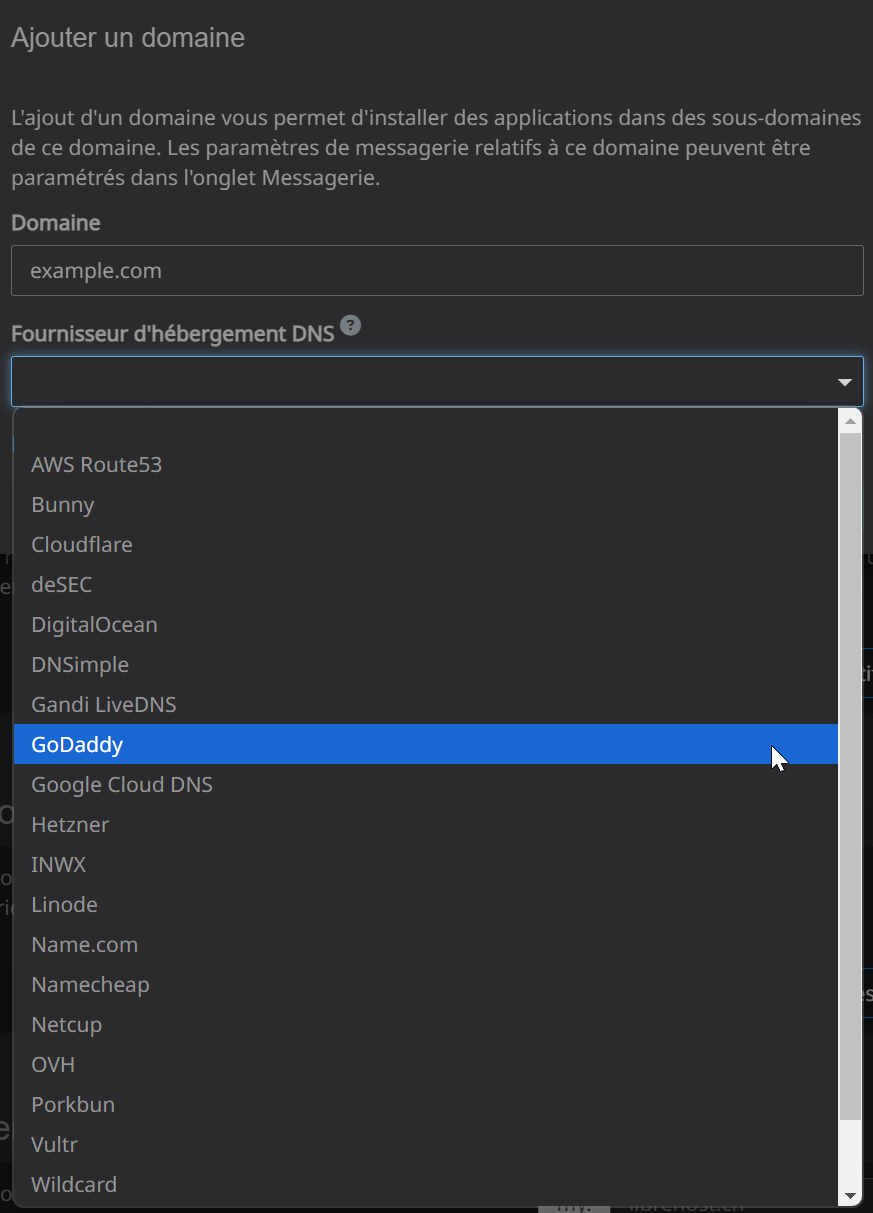

One DNS/alias to add: conference.xmpp.example.com (the MUC component, the only thing that federates). Add it as a Cloudron app alias:

cloudron configure --app xmpp.example.com --location xmpp --alias-domains conference.xmpp.example.com

On a Cloudron-managed DNS zone this auto-creates the record and provisions the cert. PEP covers pubsub, file upload is served on the main host, and proxy65 is dropped, so no other subdomains are needed.

Optional SRV records (these improve federation discoverability but are not required, since the JID domain is also the connect host on standard ports):

_xmpp-client._tcp.xmpp.example.com. 300 IN SRV 0 5 5222 xmpp.example.com.

_xmpps-client._tcp.xmpp.example.com. 300 IN SRV 0 5 5223 xmpp.example.com.

_xmpp-server._tcp.xmpp.example.com. 300 IN SRV 0 5 5269 xmpp.example.com.

_xmpp-server._tcp.conference.xmpp.example.com. 300 IN SRV 0 5 5269 xmpp.example.com.
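
If you run this for a different domain, the four records above follow mechanically from the JID domain. A minimal sketch, assuming the same TTL, priority, weight, and ports as shown; `srv_records` is a hypothetical helper name, not part of any Cloudron or Prosody tooling.

```shell
# Print the four optional SRV records for a JID domain whose MUC component
# lives at conference.<domain>. TTL 300, priority 0, and weight 5 mirror the
# records listed above.
srv_records() {
    domain="$1"
    printf '_xmpp-client._tcp.%s. 300 IN SRV 0 5 5222 %s.\n' "$domain" "$domain"
    printf '_xmpps-client._tcp.%s. 300 IN SRV 0 5 5223 %s.\n' "$domain" "$domain"
    printf '_xmpp-server._tcp.%s. 300 IN SRV 0 5 5269 %s.\n' "$domain" "$domain"
    printf '_xmpp-server._tcp.conference.%s. 300 IN SRV 0 5 5269 %s.\n' "$domain" "$domain"
}

srv_records xmpp.example.com
```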

Client login gotcha. If login fails like a wrong password, enable SASL PLAIN (sometimes labelled "allow cleartext auth") in your client. Section (c) explains why this is necessary and why it is safe (c2s requires TLS, so the password only ever travels encrypted).

Encryption. OMEMO is encouraged but not forced (optional policy); c2s requires TLS regardless.

Calls reality check. The server is call-ready, but a working XMPP A/V client is Linux-desktop only right now: Dino, or the experimental calling in Kaidan 0.15. Gajim's A/V is non-functional, and there is no working macOS or mobile XMPP calling client today. Plan for the client side, not the server side.

(b) For packagers (Prosody, or any multi-domain Cloudron app)

Base and version

FROM docker.io/cloudron/base:5.0.0 (Ubuntu noble; fully-qualify the name so Podman does not prompt, see the build note below).

Install from the official Prosody apt repo, but pin the stable package:

apt-get install -y prosody=13.0.6-1~noble1

The trap: the prosody-13.0 package is a nightly branch build (it self-reports "13.0 nightly build N"), not the stable point release. Verify with dpkg-query -W prosody. Do not run prosody --version in the build; Prosody's root-guard refuses to run as root and fails the build.
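
One way to make the build fail fast if the wrong package slips in is to compare what dpkg reports against the pin. This is a minimal sketch: `check_pin` is a hypothetical helper, and the usage line assumes the script is available during the image build.

```shell
# Succeed only when the installed version string equals the pinned one.
# On mismatch, print a diagnostic to stderr and return non-zero.
check_pin() {
    expected="$1"
    actual="$2"
    if [ "$actual" != "$expected" ]; then
        echo "prosody version mismatch: want $expected, got ${actual:-<not installed>}" >&2
        return 1
    fi
}

# During the build, feed it the version dpkg reports. This never starts
# Prosody, so the root-guard is not triggered:
#   check_pin '13.0.6-1~noble1' "$(dpkg-query -W -f='${Version}' prosody)"
```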

Cloudron specifics

Use CMD, never ENTRYPOINT. ENTRYPOINT breaks Cloudron's debug mode. Put the entrypoint logic in a script invoked by CMD.

Debian FHS paths come with the apt package: config in /etc/prosody, binary /usr/bin/prosody, modules /usr/lib/prosody, Lua 5.4. Point data_path, certificates, run_dir, and pidfile at writable locations (/app/data, /run).

Read addon env on every boot. Never bake CLOUDRON_LDAP_* or CLOUDRON_TURN_* into static config; they change on restart. Map them to your config env in the start script, then gosu prosody:prosody prosody -F.
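
The boot-time mapping could look roughly like the sketch below. The `PROSODY_*` names are illustrative assumptions (use whatever names your config actually reads), and `map_addon_env` is a hypothetical function name; only the `CLOUDRON_*` variables and the final `gosu` line come from the notes above.

```shell
# Map Cloudron addon variables to the names a Prosody config might read.
# The PROSODY_* names are made up for this example.
# ${VAR:?} aborts with an error if the addon variable is unset or empty,
# which is preferable to booting with a half-configured server.
map_addon_env() {
    export PROSODY_LDAP_SERVER="${CLOUDRON_LDAP_SERVER:?}:${CLOUDRON_LDAP_PORT:?}"
    export PROSODY_LDAP_BASE="${CLOUDRON_LDAP_USERS_BASE_DN:?}"
    export PROSODY_TURN_HOST="${CLOUDRON_TURN_SERVER:?}"
    export PROSODY_TURN_SECRET="${CLOUDRON_TURN_SECRET:?}"
}

# Last lines of the start script: re-read the environment on every boot,
# then drop privileges and run Prosody in the foreground.
#   map_addon_env
#   exec gosu prosody:prosody prosody -F
```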

The health check needs a real 2xx. Cloudron marks the app unhealthy on a 404. We use the community mod_http_host_status_check, which serves GET /host_status_check as HTTP 200, and set healthCheckPath: /host_status_check. Note the distinction: the often-repeated "Prosody 404s in a browser, that's fine" remark applies only to the bare root path a human hits, not to the health path, which must return 200.
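
To check the health route by hand before Cloudron does, something like the following sketch may help. It assumes curl is available in the container and that Prosody answers on port 5280 for the host you query; depending on your VirtualHost setup you may need a different URL or Host header. Both function names are hypothetical.

```shell
# Return success only for a 2xx status code.
is_2xx() {
    case "$1" in
        2[0-9][0-9]) return 0 ;;
        *) return 1 ;;
    esac
}

# Print only the HTTP status code of a URL. curl prints 000 when the
# connection itself fails.
http_status() {
    curl -s -o /dev/null -w '%{http_code}' "$1"
}

# Inside the container:
#   is_2xx "$(http_status http://localhost:5280/host_status_check)" && echo healthy
```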

Certificates (the headline)

The tls addon exposes the primary cert at /etc/certs/tls_cert.pem and its key at /etc/certs/tls_key.pem. On Cloudron 9.x it also exposes a per-alias cert at /etc/certs/<alias-domain>.cert, with the key at /etc/certs/<alias-domain>.key.

So for a federated MUC subdomain: add it as an alias, then copy /etc/certs/conference.<domain>.{cert,key} into the certs/<domain>/{fullchain,privkey}.pem layout Prosody auto-discovers (chown prosody, key mode 0640). No host-path hack, no cron cert-sync. This is the part that previously forced people into copying the whole host cert directory in, and on 9.x it is no longer necessary.
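
The copy step could be sketched as below. Assumptions to flag: the target directory is named after the host the cert serves, the default certificates directory `/app/data/certs` is a guess at a writable location (match it to your own `certificates` setting), and `install_alias_cert` is a hypothetical name.

```shell
# Copy the per-alias cert and key into <certs_dir>/<host>/{fullchain,privkey}.pem.
#   $1 = host, e.g. conference.xmpp.example.com
#   $2 = source directory (default: /etc/certs)
#   $3 = Prosody certificates directory (default: /app/data/certs, an assumption)
install_alias_cert() {
    host="$1"
    src="${2:-/etc/certs}"
    dest="${3:-/app/data/certs}/$host"

    [ -r "$src/$host.cert" ] && [ -r "$src/$host.key" ] || {
        echo "no alias cert for $host in $src" >&2
        return 1
    }

    mkdir -p "$dest"
    cp "$src/$host.cert" "$dest/fullchain.pem"
    cp "$src/$host.key" "$dest/privkey.pem"
    chmod 0640 "$dest/privkey.pem"

    # Ownership can only be changed as root, and only if the prosody user exists.
    if [ "$(id -u)" -eq 0 ] && id prosody >/dev/null 2>&1; then
        chown -R prosody:prosody "$dest"
    fi
}
```

Running this from the start script on every boot means a renewed cert is picked up on the next restart without any host-side sync.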

Wildcard nesting matters: *.example.com covers xmpp.example.com but not conference.xmpp.example.com. The alias yields a *.xmpp.example.com cert, which does.
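
The one-label rule is easy to get wrong, so here is a small sketch of it. `cert_covers` is a hypothetical helper; it compares names exactly as given (no case folding) and only illustrates the matching rule, not full certificate validation.

```shell
# Succeed if a certificate name covers a host. A leading "*." matches exactly
# one DNS label, so it never reaches across a dot.
cert_covers() {
    pattern="$1"
    host="$2"
    case "$pattern" in
        '*.'*)
            suffix="${pattern#?}"        # drop the "*", e.g. ".example.com"
            label="${host%"$suffix"}"    # what the "*" would have to match
            case "$label" in
                "$host" | '' | *.*) return 1 ;;   # suffix absent, empty label, or more than one label
                *) return 0 ;;
            esac
            ;;
        *)
            [ "$pattern" = "$host" ]
            ;;
    esac
}

cert_covers '*.example.com' conference.xmpp.example.com || echo "not covered by *.example.com"
cert_covers '*.xmpp.example.com' conference.xmpp.example.com && echo "covered by *.xmpp.example.com"
```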

Modules in 13.0

Many modules that older guides copy from the community repo are now core: smacks, turn_external, mam, carbons, csi_simple, muc_mam, server_contact_info, auth_ldap, cloud_notify, and vcard_muc. Copying the community cloud_notify or vcard_muc triggers a "conflict with built-in feature" error, so just enable the core ones. From the community repo we copy only host_status_check, http_host_status_check, e2e_policy, filter_chatstates, and throttle_presence.

Build and deploy

Our build host runs Podman, not Docker. cloudron build shells out to docker, so we bridge the two with a docker-to-podman shim placed early on PATH, and set REGISTRY_AUTH_FILE=~/.docker/config.json so that podman push finds Docker's credentials.
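
A shim of this kind can be very small. The sketch below is one possible shape, not necessarily the exact one we shipped; the directory `$HOME/.local/shim` and the name `install_docker_shim` are placeholders.

```shell
# Write a tiny "docker" wrapper that forwards every argument to podman.
#   $1 = directory for the shim (must come before any real docker on PATH)
install_docker_shim() {
    shim_dir="$1"
    mkdir -p "$shim_dir"
    cat > "$shim_dir/docker" <<'EOF'
#!/bin/sh
exec podman "$@"
EOF
    chmod +x "$shim_dir/docker"
}

# Typical use in the shell you run `cloudron build` from:
#   install_docker_shim "$HOME/.local/shim"
#   export PATH="$HOME/.local/shim:$PATH"
#   export REGISTRY_AUTH_FILE="$HOME/.docker/config.json"
```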

cloudron build (local) needs a registry the box can pull from. It builds, pushes, then reads the pushed image's digest for cloudron install. --no-push fails with "Failed to detect sha256". A remote box cannot use a locally-built image without a registry (we used a self-hosted Forgejo container registry). The registry-free "build on the box" experience is the separate Docker Builder app, which still pushes to a registry it manages.

CLI version: there is no 9.x CLI. The cloudron CLI tops out at 8.2.6 and works fine against a 9.1.7 box; the CLI and server follow separate version lines.

LDAP

authentication = "ldap"

ldap_mode = "bind"

-- The CLOUDRON_LDAP_* names below stand for the values of those env vars, read at boot.

ldap_server = CLOUDRON_LDAP_SERVER:CLOUDRON_LDAP_PORT

ldap_base = CLOUDRON_LDAP_USERS_BASE_DN

ldap_rootdn = CLOUDRON_LDAP_BIND_DN

ldap_password = CLOUDRON_LDAP_BIND_PASSWORD

ldap_filter = "(&(objectclass=user)(|(username=$user)(mail=$user)))"

Cloudron user objects are objectclass=user with username, mail, and uid.
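
When a login fails, it can help to run the same filter against the directory by hand. The sketch below assumes the `ldapsearch` client is installed (it may not be in the base image) and that the addon speaks plain `ldap://` inside the container network. Both function names are hypothetical, and the filter expansion does no escaping of LDAP special characters.

```shell
# Expand the $user placeholder the same way the filter above is written.
ldap_filter_for() {
    printf '(&(objectclass=user)(|(username=%s)(mail=%s)))\n' "$1" "$1"
}

# Look a user up with the bind credentials Cloudron injects.
# Defined here for reference; it is not invoked by this snippet.
ldap_lookup() {
    ldapsearch -x \
        -H "ldap://${CLOUDRON_LDAP_SERVER}:${CLOUDRON_LDAP_PORT}" \
        -D "$CLOUDRON_LDAP_BIND_DN" -w "$CLOUDRON_LDAP_BIND_PASSWORD" \
        -b "$CLOUDRON_LDAP_USERS_BASE_DN" \
        "$(ldap_filter_for "$1")" username mail
}

ldap_filter_for alice
```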

A/V (TURN)

Declare the turn addon. mod_turn_external reads CLOUDRON_TURN_{SERVER,PORT,TLS_PORT,SECRET} and advertises STUN/TURN/TURNS via XEP-0215 with time-limited HMAC REST credentials:

turn_external_host = CLOUDRON_TURN_SERVER -- the panel host, e.g. my.example.com

turn_external_port = 3478 ; turn_external_tls_port = 5349

turn_external_secret = CLOUDRON_TURN_SECRET

coturn is fronted on the panel host (my.example.com), with a relay UDP range of 50000-51000. Provider-firewall dependency: 3478 and 5349 (TCP+UDP) and the relay range must be reachable from the internet. Cloudron's own firewall opens them; your cloud provider's security group might not. This is the classic "calls connect then drop" cause, so test it before blaming anything else (the WebRTC Trickle ICE page is the quickest check).
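
The Trickle ICE page asks for a TURN username and credential. With a shared-secret setup these can be derived by hand using the usual TURN REST scheme: the username is an expiry timestamp and the password is the base64 of HMAC-SHA1 over that username. The sketch below assumes the openssl CLI is available and that your coturn uses this scheme; the function names are hypothetical.

```shell
# password = base64(HMAC-SHA1(secret, username))
turn_password() {
    secret="$1"
    username="$2"
    printf '%s' "$username" | openssl dgst -sha1 -hmac "$secret" -binary | openssl base64
}

# Print "<username> <password>", valid for ttl seconds (default 3600).
# The username is the expiry time in Unix seconds.
turn_credentials() {
    secret="$1"
    ttl="${2:-3600}"
    username=$(( $(date +%s) + ttl ))
    printf '%s %s\n' "$username" "$(turn_password "$secret" "$username")"
}

# e.g. turn_credentials "$CLOUDRON_TURN_SECRET" 600
```

Paste the two values into the tester together with turn:my.example.com:3478; a relay candidate in the results means the path through the provider firewall is open.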

Ports / manifest

httpPort: 5280 (BOSH/websocket/file-upload, fronted by Cloudron TLS on 443).

tcpPorts: 5222 (c2s STARTTLS), 5223 (c2s direct-TLS, XEP-0368), 5269 (s2s).

addons: localstorage, tls, ldap, turn; multiDomain: true.

(c) For the Prosody developers

Config-sandbox noise. Prosody 13's config sandbox logs a deprecation for every os.getenv/tonumber ("replace os with Lua.os"). For env-driven container configs that is dozens of warning lines per boot. A documented, warning-free idiom for reading env vars in config would help packagers.

SASL and LDAP bind. With bind-mode LDAP, Prosody only offers PLAIN (there is no reusable secret, so no SCRAM). This is correct, but it surprises users whose clients disable PLAIN by default and then see a generic auth failure. A clearer client-facing error ("server offers only PLAIN; enable cleartext-over-TLS") would cut support load. Partly a client issue, but worth a doc note.

Headless/health conventions. The de-facto Cloudron health endpoint (mod_http_host_status_check) lives in community modules. First-class guidance for "Prosody as a backend service behind a managed proxy" (health route, trusted proxy, http_external_url) would help.

Managed coturn. mod_turn_external plus a managed coturn that fronts on a different hostname than the JID works well via XEP-0215 / TURN REST (use-auth-secret). A short doc example would help packagers on Cloudron and other managed platforms.

Validation results

compliance.conversations.im: 91% (Prosody 13.0.6 detected). Compliant XEPs include 0215 (STUN+TURN), 0045 (MUC), 0313 (MAM + MUC-MAM), 0280 (Carbons), 0198 (Stream Management), 0363 (HTTP Upload), 0357 (Push), 0384 (OMEMO), 0163 (PEP), 0368, Roster Versioning, 0191 (Blocking), and 0352 (CSI).

TLS. c2s StartTLS (5222), c2s Direct-TLS (5223), and s2s (5269) all present a valid Let's Encrypt certificate. TLS 1.0/1.1 refused, 1.2/1.3 only.

The A/V path. We confirmed the relay path two independent ways: a WebRTC Trickle ICE test gathered a relay candidate from the coturn relay range, and the compliance tester passed XEP-0215 for both STUN and TURN. A live client call additionally confirmed XEP-0353 call signalling routing through Prosody. Worth flagging for anyone testing this: an unanswered call does not by itself exercise TURN, because modern Jingle defers relay allocation until the callee accepts, so a true two-party media test needs two live endpoints.

Federation. The MUC subdomain presents a CA-trusted *.xmpp.example.com certificate on s2s, and Prosody correctly refuses remote servers presenting self-signed certificates.

Credits

DerekJarvis/cloudron-prosody and SaraSmiseth/prosody for the base image and config structure; the Prosody project; and the Cloudron team and the forum threads on the turn and tls addons. Our resulting package is at palladium.wanderingmonster.dev/palladium-dragon/prosody-cloudron.
