Scaling / High Availability Cloudron Setup

-

@marcusquinn

You don't need live HA; you can have a soft one instead.

If a container is on a node that is not responding, you can start it on a new node.

-

@moocloud_matt Thanks, I know what HA is, how it works, and the far too many options for it. It's still the wrong approach for almost all online services.

One thing I've learned in all my years is to always discount, ignore and do the opposite of what anyone says when they use "just" in a comment, because that word always hides the vast difference in time and cost between saying and doing.

I'm well aware of the vast industry of people peddling HA pipe-dreams - I'm pretty sure I could beat all of them for uptime, by specifically avoiding doing every single thing they recommend, and just having the tried and tested strategy of keeping it simple.

If you can't take a month off and then another month doing different things without having to do any maintenance or explain anything to anyone, your stack is too complicated.

All HA ever did for me was cost me an additional couple of employees just to continually maintain it, and generally take away resources and attention from the actual things users wanted.

No K8S, no excessive expertise costs, and no uptime problems since, because there's just less to go wrong, and less opinion to distract from the actual usage of services that funds them.

-

HA is a bit overpowered for most customers, that's true, but it's the idea people have of it that pushes MSPs and CSPs to offer some level of HA.

Good hardware components can be a good solution, but if you have to restore a RAID from a backup it will take a lot of time, and some customers don't want to take that risk.

They just prefer to have a soft HA setup.

KISS is always a good approach in IT, but it's not always possible to "keep it simple s*".

-

@moocloud_matt In 20 years of hosting web apps, I cannot recall a single instance of hardware failure causing service or data loss. Not one.

There are network interruptions, but they usually get resolved because everyone involved has an interest in resolving them.

I have however seen frequent data loss from software issues, and in the vast majority of cases it was human error.

Software High Availability is a contradiction because it is adding the element of human reliance to a system, and my experience was that the software was never finished, always being updated and frequently failing.

Hardware resilience and redundancy is the only method of data security that is almost immune to interference.

In my experience "Customers" have no idea or interest in risk assessment, and they just want things to work - it is "solutions" sales people that try to sell them solutions to risks they didn't know they had to have in the first place for the sake of securing support retainers.

I'm sure you know what you want - but I would never invest in what you suggest purely because you are trying to sell it.

To me the best solution is one that does not need the person selling it. That is where the oldest solutions have stood the test of time, while new solutions create the very problems they want to sell solutions for: vendors cannot scare customers into support retainers if they give them solutions that just work and don't need to keep changing.

-

Obviously, what you say is true; it's just based on hardware-defined stuff.

We use a lot of software-defined storage (SDS) and software-defined networking, and with that we can push soft HA to everybody without much effort.

Not so much for the customer, but especially for us.

Maintaining an HDD, or just applying an OS patch/update (we have used LivePatch since January, so no reboot is needed now), takes time, and sometimes customers need to use their app during that time.

Some customers just want a high SLA, because a botched maintenance or a failure would make them lose a lot of money. And that SLA is not measured annually but perhaps monthly; allowing just 5 minutes of downtime is really hard to offer without some kind of HA.

It's completely true that good hardware can reduce a lot of inconveniences, and in most cases it will protect the customer really well. But now that we have SDS, which is easy to manage and really robust, we can move to that and count less on hardware.

Obviously, there are two ways, hardware and software, with pros and cons for both. I just see Cloudron as more oriented toward software than hardware. To reply to the main question of this post, I think soft HA based on SDS can be implemented easily compared with some of the super costly hardware from Dell or HPE, and it provides scalability in addition to a soft approach to HA.

But I support your thesis too: good hardware is absolutely a good way to provide a good SLA.
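To put rough numbers on the monthly-versus-annual SLA point, here is a minimal arithmetic sketch. It assumes a 30-day month and a 365-day year (real SLAs define their own measurement windows), and the function names are illustrative only. Under those assumptions, 5 minutes of downtime in a month works out to roughly 99.988% availability, while a 99.9% monthly target allows about 43 minutes.

```python
# Downtime budget implied by an availability target over a given window.
# Assumption: 30-day month and 365-day year; real SLAs define their own windows.

MINUTES_PER_DAY = 24 * 60


def allowed_downtime_minutes(availability_pct: float, window_days: int) -> float:
    """Minutes of downtime permitted by an availability percentage over window_days."""
    return (1 - availability_pct / 100) * window_days * MINUTES_PER_DAY


def availability_pct(downtime_minutes: float, window_days: int) -> float:
    """Availability percentage implied by a downtime allowance over window_days."""
    total_minutes = window_days * MINUTES_PER_DAY
    return 100 * (1 - downtime_minutes / total_minutes)


if __name__ == "__main__":
    # The same 5 minutes is a much stricter target per month than per year.
    print(f"5 min per 30-day month: {availability_pct(5, 30):.3f}% availability")
    print(f"5 min per 365-day year: {availability_pct(5, 365):.4f}% availability")
    print(f"99.9% over 30 days allows {allowed_downtime_minutes(99.9, 30):.1f} min")
```

The shorter the measurement window, the less room there is to absorb a single maintenance reboot, which is the case being made for some form of soft HA.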

-

@moocloud_matt Which SDS do you use?

-

@robi

Mostly Ceph, unless the customer needs another stack.

But I recently tested the new ScaleOutZFS by TrueNAS SCALE, and it's really good and easy to manage, though it's not really 100% SDS.

-

@moocloud_matt have you played with Open vStorage? MaxIOPS? ...?

-

@robi

Not that I remember, but I'm not actually the CTO, so in some cases I only know about the production or near-production-ready solutions that we are testing or using.

I don't keep up with all the projects that we test or try.

-

@moocloud_matt Okie.. if you get to ask him, see what else he's tried.

-

Hey everyone, hope it's ok if I chime in here and ask if anyone has built something in this direction. I'm asking because I got approached to host a static site (basically HTML, JS and a few smaller images) which has traffic spikes where 50k+ users will try to access it for a short period of time, and then it mostly idles again. How would you go about that, is this doable on Cloudron? I did manage to have 100s of users, but 1000s is a different story.

Can the Surfer app (being a Node server and all) handle that load if there's enough CPU/RAM on the host machine? Or would you rather build a custom nginx app which does nothing but serve compressed static files? Or fire up some smaller VPSes, install nginx and use a load balancer to spread the traffic? Any information and suggestions are appreciated.

-

@msbt said in Scaling / High Availability Cloudron Setup:

Can the surfer app (being a node server and all) handle that load if there's enough CPU/RAM on the host machine?

For static content, this should be quite easily achievable. Have you tried running any load tests? There's a bunch of variables here like network and disk speed. Best to measure the actual server setup.
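As a starting point for such a measurement, here is a minimal load-test sketch using only the Python standard library. `TARGET_URL`, the request count and the concurrency level are placeholders, not values from this thread, and a single thread-based client will not reproduce 50k simultaneous users; it only gives a first feel for latency and failure rate before moving to a dedicated load-testing tool.

```python
# Minimal concurrent load-test sketch (standard library only).
# Assumptions: TARGET_URL and the request counts are placeholders to replace.
import http.client
import time
import urllib.request
from concurrent.futures import ThreadPoolExecutor
from statistics import median
from typing import Callable

TARGET_URL = "https://static.example.com/"  # hypothetical URL


def fetch(url: str) -> bool:
    """Return True if the URL answers with HTTP 200 within 10 seconds."""
    try:
        with urllib.request.urlopen(url, timeout=10) as resp:
            resp.read()  # download the body so transfer time is included
            return resp.status == 200
    except (OSError, http.client.HTTPException):
        return False


def timed(fetcher: Callable[[str], bool], url: str) -> tuple[bool, float]:
    """Run one request and return (success, elapsed seconds)."""
    start = time.perf_counter()
    ok = fetcher(url)
    return ok, time.perf_counter() - start


def run_load_test(url: str, total_requests: int, concurrency: int,
                  fetcher: Callable[[str], bool] = fetch) -> dict:
    """Issue total_requests requests using `concurrency` worker threads."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        results = list(pool.map(lambda _: timed(fetcher, url), range(total_requests)))
    latencies = sorted(elapsed for _, elapsed in results)
    return {
        "requests": total_requests,
        "failures": sum(1 for ok, _ in results if not ok),
        "median_s": median(latencies),
        "p95_s": latencies[int(0.95 * (len(latencies) - 1))],
    }


if __name__ == "__main__":
    print(run_load_test(TARGET_URL, total_requests=500, concurrency=50))
```

Running it from a machine outside the server's network gives a more honest picture, since network and disk speed are among the variables mentioned above.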

-

@msbt I actually think for your scenario with mostly static content, you could put Cloudflare or similar in front of that app to sustain those spike times.

-

@msbt said in Scaling / High Availability Cloudron Setup:

I wanted to avoid Cloudflare traffic

If you're simply against Cloudflare as some people seem to be but open to other CDNs, I can vouch for BunnyCDN, everyone raves about them and they are very inexpensive too for the offerings. They also seem to be about to launch some DNS packages, I think they're slowly taking direct aim at Cloudflare and seem a little more friendly to use.

-

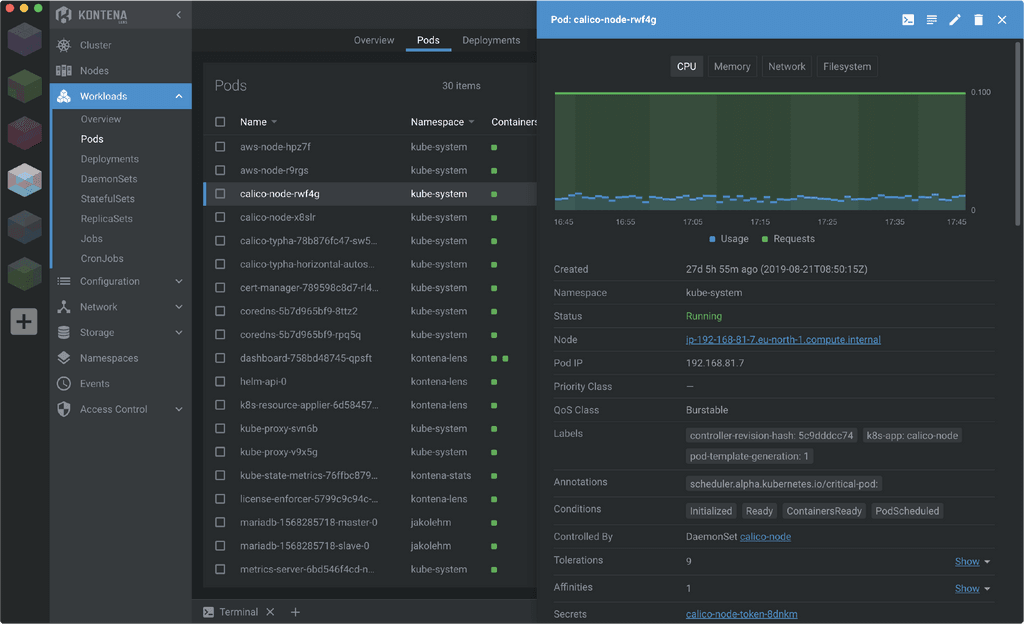

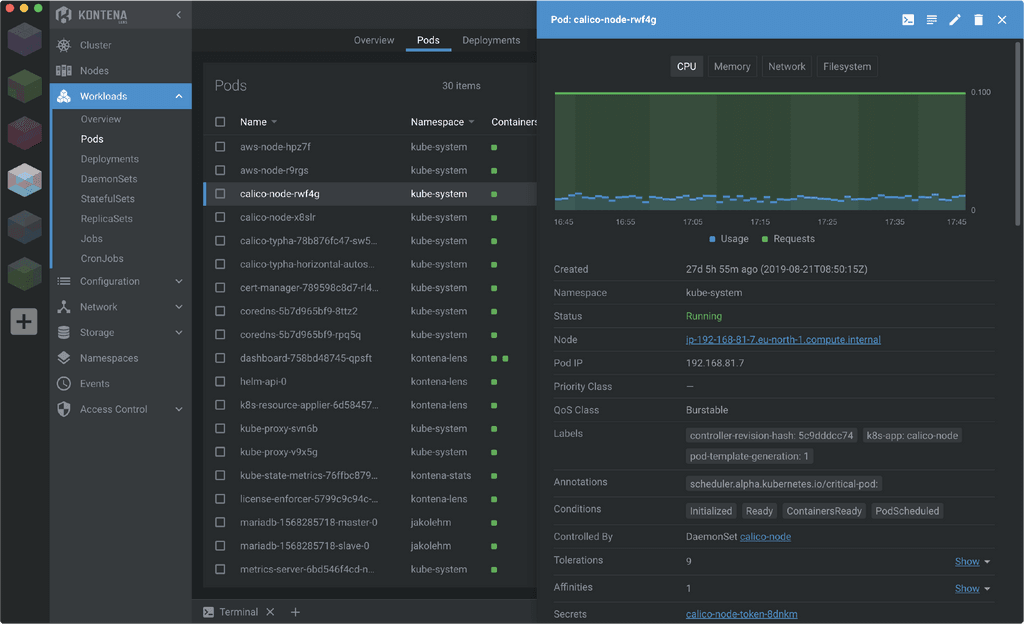

Moving to K8s is hard for sure; simple things get more complex. But I think that's the best option long term, and it's where I see things going at large. Maybe setting it up as a single-node K8s would be easier initially?

If Cloudron were set up to run as a single- or multi-node Kubernetes (k3s/rke) cluster, it would open the door to tons of cool stuff. You could, for example, use Rancher/Fleet to manage all your Cloudron/K8s migrations, monitoring, security, etc.

I use Rancher/K8s for multi-node / multi-cluster stuff and Cloudron for single systems. Rancher connecting to a K8s version of Cloudron would be the pie in the sky for me.

-

Found this recently: https://k0sproject.io/

Not sure how much it can be applied to HA Cloudron but it seems promising

*edit: apparently the screenshot is from a client called Lens, which has a small/reasonable license fee.

"k0s is the simple, solid & certified Kubernetes distribution that works on any infrastructure: bare-metal, on-premises, edge, IoT, public & private clouds. It's 100% open source & free. Get started today!"

EDIT:

"Lens for the web browser - coming soon". Currently it is a desktop app. Lens is also open source, and the "free" tier seems like it would fit the majority of Cloudron users' use cases. k0s is free/open source too, whereas Lens does have a "Pro" version for teams at $19.99 a month per user, but it is not "necessary".

-

@plusone-nick nice find. Have you tried it for anything?

-

@marcusquinn not yet, plan on dabbling with it soon though. Another interesting project, which is actually where I found k0s: https://elest.io/ (I learned of elest.io from the Penpot team lol).
