@necrevistonnezr this looks slick, going to look into it when I get a sec

ethanxrosen

Posts

-


My experience migrating a ~2TB cloudron to a server with mixed SSD/HDD drives + current setup

@p44 I'm using rsync with hardlinks again, but via the command line outside Cloudron. I sync the entire backup folder but leave out `--delete`, so it accumulates indefinitely on my home drive. Something like:

`rsync -avH -e "ssh -p 202" --progress user@<backup-box-ip>:/backup/directory /local/directory`

Honestly there's probably a better way... My drive will fill up eventually, and all the daily/weekly backups aren't really necessary in cold storage. (Any suggestions?) I would also need to install Cloudron locally if I ever want to access my files offline.
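One possible way to keep the local copy from growing forever, sketched under an assumption: if each backup ends up as its own top-level directory in the local copy (a hypothetical layout, so check how your backup folder is actually organised), the helper below only *lists* directories older than N days so you can review them before pruning. It deletes nothing.

```shell
#!/usr/bin/env bash
# Sketch: list snapshot directories older than N days as pruning candidates.
# Assumption (hypothetical layout): one directory per backup directly under
# the local copy, e.g. /local/directory/<something>/. Nothing is deleted.
list_old_snapshots() {
  local root="$1" days="$2"
  # -mindepth/-maxdepth 1: only the immediate children of $root.
  # -mtime +N: last modified more than N whole days ago.
  find "$root" -mindepth 1 -maxdepth 1 -type d -mtime +"$days" -print | sort
}
```

For example, `list_old_snapshots /local/directory 90`. Two caveats: because the sync uses `-H`, removing an old snapshot only frees the files no other snapshot links to; and since the sync copies the whole backup folder without `--delete`, the next run will pull a removed snapshot back from the backup box unless you exclude it (e.g. with rsync's `--exclude`).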

I've seen others mention restic / borg in the forum.

-

OpenWebUI has an option for a SearXNG API key

@Divemasterza I figured out how to get this to work without making SearXNG public (from https://forum.cloudron.io/topic/10618/how-to-connect-from-one-app-to-another-via-the-internal-network/6).

You can keep Cloudron OAuth enabled but bypass it by calling SearXNG's internal hostname from OpenWebUI instead of its public URL. Tweaking @coniunctio's query:

`http://<searx app id>:<searx port>/search?q=<query>&language=auto&time_range=&safesearch=0&categories=social+media,map,it,general,science,news&format=json`

Example:

`http://12345678-1234-1234-1234-abcdefabcdef:8888/search?q=<query>&language=auto&time_range=&safesearch=0&categories=social+media,map,it,general,science,news&format=json`

Find the app ID in the Cloudron dashboard, and the port in the SearXNG file manager under settings.yml, on the `port:` line.
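A small sketch of the two lookups above, assuming settings.yml has a line of the form `port: 8888` (with a space after the colon) and that the first such key is the server port. The function names are hypothetical helpers, not part of SearXNG or Cloudron. `<query>` is left as a literal placeholder, as in the query above.

```shell
#!/usr/bin/env bash
# Sketch: read the SearXNG port and build the internal search URL.
# Assumption: the first uncommented "port: <n>" line in settings.yml is the
# server port (SearXNG nests it under "server:").

# Print the value of the first "port:" key in the given settings.yml.
searx_port() {
  awk '/^[[:space:]]*port:[[:space:]]*/ { print $2; exit }' "$1"
}

# Print the internal URL for a given app ID and port, keeping <query> as a
# placeholder and the same parameters as the query above.
searx_internal_url() {
  local app_id="$1" port="$2"
  printf 'http://%s:%s/search?q=<query>&language=auto&time_range=&safesearch=0&categories=social+media,map,it,general,science,news&format=json\n' "$app_id" "$port"
}
```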

-

My experience migrating a ~2TB cloudron to a server with mixed SSD/HDD drives + current setup

Hey all, writing up some notes from a recent migration. As an enthusiast rather than a professional, I didn't find the process obvious from the docs or existing posts, so hopefully this is helpful to others.

My setup for context:

- cloudron: a Hetzner auction server with mixed HDD+SSD

- backup box: a storage VPS in a different geographical location, using SSHFS (encrypted rsync)

- I've had a good experience with this so far: the ability to use hardlinks is a game changer for storage size, and I'm not charged per request

- it requires manually setting up a secure SSH box; I used unattended-upgrades and ufw

- for peace of mind, I also do a manual offline backup to a drive at home every ~3-6 months

- Mixed HDD+SSD was useful for keeping costs low while balancing the needs of large apps like Nextcloud against most other apps on Cloudron. I haven't had much luck mounting external storage, so I liked the idea of a bigger local drive. The deal I found happened to have 3 drives, and I laid them out like this:

- 2x512GB SSD in RAID1: the default drive, mounted at /, for apps where I care about both uptime and speed (email, websites...)

- 1x1TB SSD: less critical apps where I care about speed, e.g. media-heavy apps like Immich

- 1x2TB HDD: large storage apps (Nextcloud)

- I haven't set up email alerts for RAID monitoring yet, if anyone has advice there
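On RAID monitoring, a minimal sketch, assuming Linux software RAID managed by mdadm (the setup above doesn't say which RAID type is used). It scans /proc/mdstat for a member-status field containing an underscore, which is how a missing disk shows up: a healthy 2-disk RAID1 reads `[UU]`, a degraded one `[U_]`.

```shell
#!/usr/bin/env bash
# Sketch: detect a degraded mdadm array by parsing /proc/mdstat.
# Healthy member status looks like "[UU]"; a missing member is "_", e.g. "[U_]".
# Returns 0 and prints the matching status lines if any array is degraded,
# returns 1 (no output) otherwise.
raid_degraded() {
  local mdstat="${1:-/proc/mdstat}"
  grep -E '\[[U_]*_[U_]*\]' "$mdstat"
}
```

This could run from cron and feed whatever mailer you have. mdadm can also send alerts itself when `MAILADDR` is set in mdadm.conf and its monitor mode is running, which is probably the more standard route.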

Before the migration I had to:

- partition the drives, mount the extra SSD and HDD in /etc/fstab, and set up RAID

- whitelist the new server's IP in my domain provider's API, if needed (this was the case for Namecheap)

- note down the SSH private key for the backup user (normally in /home/yellowtent/ssh) on the old server before powering it down; the backup config file doesn't include it automatically

- I think it's best to harden SSH and the firewall after the restore is fully complete. I tried doing this after installing Cloudron but before restoring via the web interface, and always seemed to run into issues. Not sure if other people have had this experience.
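For the /etc/fstab step, a sketch of the kind of entry involved, assuming the drive is mounted by UUID (find it with `blkid` or `lsblk -f`). The UUID, mount point, filesystem type, and options are placeholders, not the exact entries from this migration. `nofail` lets the server keep booting if the drive is missing; pass number 2 means it is checked after the root filesystem.

```shell
#!/usr/bin/env bash
# Sketch: print an /etc/fstab line for an extra data drive, keyed by UUID.
# Arguments: UUID, mount point, optional filesystem type (defaults to ext4).
# All values are placeholders; adjust options to your own setup.
fstab_entry() {
  local uuid="$1" mountpoint="$2" fstype="${3:-ext4}"
  printf 'UUID=%s %s %s defaults,nofail 0 2\n' "$uuid" "$mountpoint" "$fstype"
}
```

After creating the mount point, something like `fstab_entry <uuid> /mnt/hdd | sudo tee -a /etc/fstab` followed by `sudo mount -a` would add and test the entry.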

I ran into an issue where Nextcloud would not fit on my 0.5TB root drive. There wasn't a clear way to assign it a data directory before the restore, so I had to restore it on a per-app basis as follows:

- start the restore via https://your-new-ip

- wait until the dashboard is up

- mount the additional drives as volumes (I used filesystem (mountpoint)). I believe mounting them in /etc/fstab alone does not currently make them recognized within Cloudron.

- go to the large app -> Uninstall menu -> Archive

- there didn't seem to be a way to pause the restore or change the data directory while it was running, so this was the only way I found to avoid completely filling my drive. Once the restore had finished for the other apps, this app was left in an Error state, and I could then successfully archive it on the second attempt.

- download the app's backup config from Backups -> Archive

- install a new instance of the large app from the appstore, matching the app version in the backup

- you can install a specific version of an app by changing the appstore URL, i.e. https://my.server.com/#/appstore/com.nextcloud.cloudronapp?version=5.5.2 (change to version=x.x.x)

- move this app's data directory to the volume where your additional drive is mounted (app settings -> storage -> data directory)

- import the app backup using the config file downloaded earlier
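The version-pinned appstore URL from the steps above follows a simple pattern, sketched here as a hypothetical helper. The dashboard domain, app ID, and version are examples; use the version recorded in your backup config.

```shell
#!/usr/bin/env bash
# Sketch: build the dashboard URL that opens the appstore page for a
# specific app version, following the URL pattern in the steps above.
# Arguments: dashboard domain, appstore app ID, app version.
appstore_version_url() {
  local dashboard="$1" app_id="$2" version="$3"
  printf 'https://%s/#/appstore/%s?version=%s\n' "$dashboard" "$app_id" "$version"
}
```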

Curious how others' experiences with large migrations have been, and whether you've found easier ways; also what server/backup setups you use for apps like Nextcloud. I think some of this process could be improved or clarified in the documentation/error messages, e.g. a reminder to whitelist the new server's IP in the domain provider's API.

Having an option to mount volumes and assign per-app data-directory as part of the restore wizard would be fantastic.

-

Backup Strategy Advice

I think it probably makes more sense for most people using a storage VPS to forgo installing Cloudron+Minio and instead just connect to their VPS with SSHFS.

If you use Minio's S3 API, the big disadvantage is that rsync can't use hardlinks, right? So total backup storage balloons if you want anything beyond a barebones retention policy; in my case the difference was around 5TB. On the other hand, setting up a secure SSH server is about as easy as, if not easier than, running a second Cloudron (get a firewall going and auto-update with unattended-upgrades). Not sure how people's experience is with backup stability over SSHFS these days.

Saw that ServaRica had some good prices, competitive with Hetzner / alphaVPS.
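To illustrate why hardlinks matter so much for storage, a small local demo (not Cloudron's implementation, just the underlying filesystem idea): a second "snapshot" made with hardlinks shares the same inode as the first, so an unchanged file is stored on disk only once. Object storage has no equivalent, which is where the duplicated storage comes from. It assumes GNU coreutils (`cp -al`, `stat -c`).

```shell
#!/usr/bin/env bash
# Sketch: show that a hardlinked snapshot shares data with the original.
# Prints "<inode in snap1> <inode in snap2> <link count>".
hardlink_demo() {
  local dir i1 i2 links
  dir=$(mktemp -d)
  mkdir "$dir/snap1"
  head -c 1048576 /dev/zero > "$dir/snap1/file.bin"  # 1 MiB test file
  cp -al "$dir/snap1" "$dir/snap2"                 # copy tree, hardlink files
  i1=$(stat -c %i "$dir/snap1/file.bin")           # same inode number means
  i2=$(stat -c %i "$dir/snap2/file.bin")           # same data blocks on disk
  links=$(stat -c %h "$dir/snap1/file.bin")        # one name per snapshot: 2
  echo "$i1 $i2 $links"
  rm -rf "$dir"
}
```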

-

Nextcloud external storage - backup strategy?

@ekevu123 I think you can include external storage in the backup by making it Nextcloud's data directory (https://docs.cloudron.io/apps/#data-directory).

For backups, from this thread (https://forum.cloudron.io/topic/4548/backup-strategy-advice) it seems most people use either a CIFS-mounted storage box like Hetzner's, or a separate storage VPS with Minio installed, plus encrypted rsync. If you use S3-style storage like Backblaze or Wasabi, rsync hardlinks aren't supported yet, so you end up paying a lot for duplicated storage and API calls. Hopefully this helps!

-

Best practise for Nextcloud setup (NVMe) with additional HDD drives

@joseph I think you might've mistaken my question for one about backups, but no worries, you already had the answer here: https://docs.cloudron.io/apps/#data-directory

-

Best practise for Nextcloud setup (NVMe) with additional HDD drives

Also curious about @Stardenver's original question, as I'm considering switching to a server with both SSD and HDD drives.

I have a pretty big Nextcloud instance that I'd like to keep on the HDD. I assume I would install Cloudron and most of the apps on the SSD, and then bind-mount Nextcloud's /home/yellowtent/ folder to the HDD from the command line?

Alternatively, is there a way to do this from the Cloudron web interface? Not volumes, as I think that would exclude my Nextcloud files from Cloudron's backup.

-

Renaming book throws permission error after using cloudron file manager

Hey all, circling back on this after a while: I don't think it was a permissions issue after all.

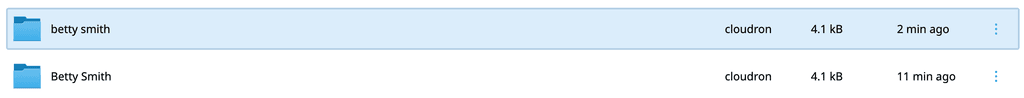

I suspect Calibre desktop and calibre-web handle capitalization slightly differently, and the names stored in metadata.db are case sensitive. When I uploaded my library folder, the capitalization of some author names changed for whatever reason, which caused the issue.

I solved this by renaming folders and files in the file manager to match what the error message expected. For example, if the "Betty Smith" folder wasn't found, I renamed the lowercase "betty smith" to "Betty Smith", after which I could use calibre-web normally. I had to do this for ~5-10% of books, which is a pain, but I'm glad to have it working again. If anyone has found a better solution I'm eager to hear it; scanning through the library for books with missing covers was a good indicator of where the broken links were.
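A rough helper for spotting some of these in bulk, assuming author folders sit directly under the library root (e.g. `/app/data/library/Betty Smith/`). It lists folder names that collide when case is ignored, which catches the duplicate-folder case ("Betty Smith" next to "betty smith"); a lone misnamed folder with no twin will not show up, so the missing-covers check is still useful. Requires GNU find.

```shell
#!/usr/bin/env bash
# Sketch: list top-level folder names in a Calibre library that differ only
# by case. Prints one name per colliding group.
case_collisions() {
  local root="$1"
  find "$root" -mindepth 1 -maxdepth 1 -type d -printf '%f\n' \
    | sort -f \
    | uniq -di   # -d: only duplicated lines, -i: compare ignoring case
}
```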

-

What is Cloudron latest version?

Hey all, is there a place this info can be found if your dashboard is down and you don't have access to the system info page / update section? I need to restore but I'm unsure what version I was on around Apr 9th (7.6.___?)

-

Renaming book throws permission error after using cloudron file manager

This is what I get from `ls -ld`:

`drwxr-xr-x 147 ubuntu ubuntu 4096 Jan 14 19:16 library/`

-

Renaming book throws permission error after using cloudron file manager

Hi all, I attempted to upload a number of audiobooks to calibre-web using the Cloudron file manager, as adding them one by one via the calibre-web interface would have been painstakingly slow.

I downloaded /library to my desktop, opened it with Calibre locally, added the audio files, then re-uploaded the entire folder (including metadata.db, based on advice from previous threads). Things seemed to work fine and the process was fairly quick, until I fetched metadata for some books, which renamed the author and/or title. Calibre-web threw this error:

`Rename title from: '/app/data/library/Betty Smith/A Tree Grows in Brooklyn (154)' to '/app/data/library/Betty Smith/A Tree Grows in Brooklyn (154)' failed with error: [Errno 2] No such file or directory: '/app/data/library/Betty Smith/A Tree Grows in Brooklyn (154)'`

When I look in the file manager, I can see a duplicate folder with different capitalization, and in Calibre the book formats/files I had in the older folder are no longer accessible.

I believe this has something to do with the calibre-web user's permissions, as I'm seeing an error similar to https://github.com/janeczku/calibre-web/issues/637. I thought it might be related to the specific library folder I uploaded, so I restored calibre-web from a previous backup; unfortunately, the issue persists even with my old, untouched library.

Does anybody have advice on how to fix the permissions of my library folder, or other insights into the source of the issue?

-

Joplin not responding - db: Could not connect

@girish this was an old install, and it looks like a new install works OK.

There's one extra line in the new config vs. the old one:

`# add custom configuration here. be sure to restart the app if you edit this file`

`# https://github.com/laurent22/joplin/blob/dev/packages/server/src/env.ts`

`SIGNUP_ENABLED=0`

`TERMS_ENABLED=0`

`MAX_TIME_DRIFT=100` <-- wasn't in the old install

I tried copying it over to see what it would do, but the app still seems unresponsive. When I try cloning from backup, the same error appears. Is there a way to repair / restore the env file?
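To compare the two env files more systematically than by eye, a small sketch that prints settings present in the new file but not the old one, ignoring comments and blank lines. The file paths you pass in are your own copies of the old and fresh-install env files; nothing here is specific to Joplin or Cloudron. Requires bash (process substitution).

```shell
#!/usr/bin/env bash
# Sketch: print lines present only in the second (new) env file.
# Comment lines (starting with #) and blank lines are ignored.
env_only_in_new() {
  local old="$1" new="$2"
  # comm -13 drops lines unique to the first input and lines common to both.
  comm -13 <(grep -Ev '^[[:space:]]*(#|$)' "$old" | sort) \
           <(grep -Ev '^[[:space:]]*(#|$)' "$new" | sort)
}
```

Swapping the arguments shows the reverse: settings the old install has that a fresh one doesn't.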

-

Joplin not responding - db: Could not connect

@girish, are there any other logs / info that would be helpful, or things I could try on my end?

-


Joplin not responding - db: Could not connect

Hi all,

Joplin on my server hasn't been responding for the last week or so. So far I've tried an app reboot, a reconfigure, and a server reboot. It looks like the app keeps looping through this error in the logs:

Apr 08 11:19:02 2023-04-08 18:19:02: App: Starting server v2.10.11 (prod) on port 3000 and PID 35...
Apr 08 11:19:02 2023-04-08 18:19:02: App: NTP time offset: -35ms
Apr 08 11:19:02 2023-04-08 18:19:02: App: Running in Docker: true
Apr 08 11:19:02 2023-04-08 18:19:02: App: Public base URL: ___________________
Apr 08 11:19:02 2023-04-08 18:19:02: App: API base URL: _______________
Apr 08 11:19:02 2023-04-08 18:19:02: App: User content base URL: _____________
Apr 08 11:19:02 2023-04-08 18:19:02: App: Log dir: /app/code/packages/server/logs
Apr 08 11:19:02 2023-04-08 18:19:02: App: DB Config: {
Apr 08 11:19:02 client: 'pg',
Apr 08 11:19:02 name: '_____________________',
Apr 08 11:19:02 slowQueryLogEnabled: false,
Apr 08 11:19:02 slowQueryLogMinDuration: 1000,
Apr 08 11:19:02 autoMigration: true,
Apr 08 11:19:02 user: '__________________',
Apr 08 11:19:02 password: '********',
Apr 08 11:19:02 port: 5432,
Apr 08 11:19:02 host: 'postgresql'
Apr 08 11:19:02 }
Apr 08 11:19:02 2023-04-08 18:19:02: App: Mailer Config: {
Apr 08 11:19:02 enabled: true,
Apr 08 11:19:02 host: 'mail',
Apr 08 11:19:02 port: 2525,
Apr 08 11:19:02 security: 'none',
Apr 08 11:19:02 authUser: '____________________________',
Apr 08 11:19:02 authPassword: '********',
Apr 08 11:19:02 noReplyName: 'Joplin',
Apr 08 11:19:02 noReplyEmail: '___________________'
Apr 08 11:19:02 }
Apr 08 11:19:02 2023-04-08 18:19:02: App: Content driver: { type: 1 }
Apr 08 11:19:02 2023-04-08 18:19:02: App: Content driver (fallback): null
Apr 08 11:19:02 2023-04-08 18:19:02: App: Trying to connect to database...
Apr 08 11:19:02 2023-04-08 18:19:02: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:03 2023-04-08 18:19:03: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:04 2023-04-08 18:19:04: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:05 2023-04-08 18:19:05: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:06 2023-04-08 18:19:06: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:07 2023-04-08 18:19:07: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:08 2023-04-08 18:19:08: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:09 2023-04-08 18:19:09: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:10 2023-04-08 18:19:10: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:11 2023-04-08 18:19:11: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:12 2023-04-08 18:19:12: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:13 2023-04-08 18:19:13: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:14 2023-04-08 18:19:14: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:15 2023-04-08 18:19:15: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:16 2023-04-08 18:19:16: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:17 2023-04-08 18:19:17: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:18 2023-04-08 18:19:18: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:19 2023-04-08 18:19:19: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:20 2023-04-08 18:19:20: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:21 2023-04-08 18:19:21: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:22 2023-04-08 18:19:22: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:23 2023-04-08 18:19:23: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:24 2023-04-08 18:19:24: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:25 2023-04-08 18:19:25: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:26 2023-04-08 18:19:26: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:27 2023-04-08 18:19:27: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:28 2023-04-08 18:19:28: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:29 2023-04-08 18:19:29: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Healtheck error: Error: connect ECONNREFUSED 172.18.18.23:3000
2023-04-08T18:19:30.000Z 2023-04-08 18:19:30: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:31 2023-04-08 18:19:31: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:32 2023-04-08 18:19:32: db: Could not connect. Will try again. Cannot read properties of undefined (reading 'name')
Apr 08 11:19:32 2023-04-08 18:19:32: [error] db: Timeout trying to connect to database: TypeError: Cannot read properties of undefined (reading 'name')
Apr 08 11:19:32 at /app/code/packages/server/src/db.ts:411:25
Apr 08 11:19:32 at Generator.next (<anonymous>)
Apr 08 11:19:32 at fulfilled (/app/code/packages/server/dist/db.js:5:58)
Apr 08 11:19:32 at processTicksAndRejections (node:internal/process/task_queues:95:5)
Apr 08 11:19:32 Error: Timeout trying to connect to database. Last error was: Cannot read properties of undefined (reading 'name')
Apr 08 11:19:32 at /app/code/packages/server/src/db.ts:118:10
Apr 08 11:19:32 at Generator.next (<anonymous>)
Apr 08 11:19:32 at fulfilled (/app/code/packages/server/dist/db.js:5:58)
Apr 08 11:19:32 at processTicksAndRejections (node:internal/process/task_queues:95:5)

Does anyone have advice on how to get it working again?

-

Customize '3 week app update' special backup retention policy for nextcloud

Use case:

Nextcloud updates very frequently, meaning that even if you only intend to keep 1-2 app backups at a time, you can end up with 5 or 6 due to Cloudron's 3-week app update policy: https://docs.cloudron.io/backups/#retention-policy

If you have a 1TB Nextcloud, this triples the storage cost / box space, which adds up really quickly. I think this is especially unnecessary when many people also have a physical drive synced via the Nextcloud client as a third backup.

You can delete these backups manually from your storage provider if you remember to, but it would be awesome to have an option to customize this, so people can enable automatic updates + backups of large apps in Cloudron without driving up costs too much.

-

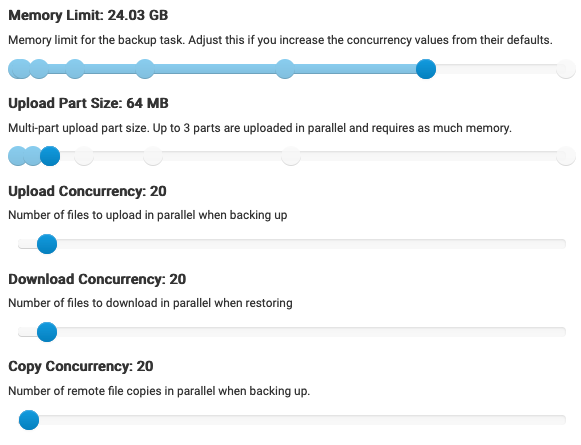

Optimal settings for Backblaze or other s3 backup servicesFinally managed to get backups stable with backblaze for a ~1TB cloudron with these settings (using encryption and rsync):

Some notes:

- During initial backups, running out of memory was by far the most common cause of failure. The amount needed for a given upload part size and concurrency was higher than I first expected: I found (upload part size) x (upload concurrency) x 4 was roughly what was needed, i.e. 64MB x 20 x 4 = ~5120MB.

- Scheduling backups at a time when server load is low and allotting as much memory as comfortable helped stability a lot. I ended up keeping my memory limit much higher than strictly necessary. This could probably be reduced, but stability is more important to me at the moment.

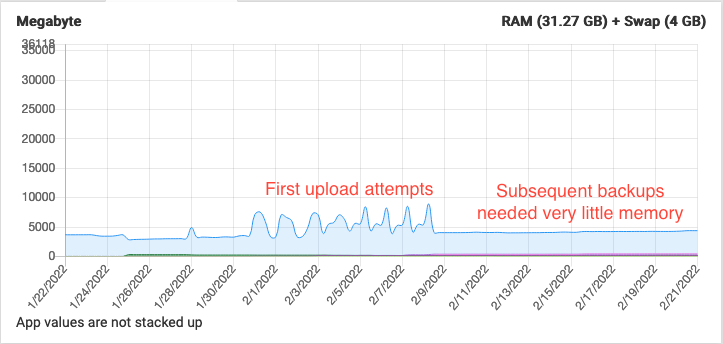

- In the graph above, memory tended to spike once per backup. @girish, maybe this is something that could be optimized?

- After the initial upload, the memory required for subsequent rsync runs dropped off significantly, as expected

- Uploading ~1TB of data with the above took approx. 24h
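The memory rule of thumb from the notes above as a tiny helper, handy for trying other part size / concurrency combinations before a long first upload. The factor of 4 is an empirical observation from this one ~1TB backup, not a documented Cloudron constant, so leave extra headroom on top.

```shell
#!/usr/bin/env bash
# Sketch: estimated memory (MB) for the initial upload, using the empirical
# rule from the notes above: part size (MB) x upload concurrency x 4.
backup_memory_mb() {
  local part_size_mb="$1" concurrency="$2"
  echo $(( part_size_mb * concurrency * 4 ))
}
```

For example, `backup_memory_mb 64 20` prints 5120, matching the figure above.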

Curious to see what others find from optimizing these further, but glad to have a working baseline in the meantime.

I'm also curious to see what people's monthly bills tally up to. I'm still waiting for the first month's bill, but B2 seems more cost-effective than Wasabi so far!

-

Backblaze encrypted backup with Rsync fails

For future reference, I managed to clear it up using a slightly modified version of Girish's search command. It seems the length limit applies to the whole path, not just the filename:

`find . -type f -print|awk '{print length($0), $0}' | sort -n`

I then went into the web interface, deleted / renamed files whose paths were longer than 156 bytes, and finally cleared the trashbin/versioned files (this wipes all deleted / old versions of files, so be careful):

`sudo -u www-data php -f /app/code/occ versions:cleanup`

`sudo -u www-data php -f /app/code/occ trashbin:cleanup`

@robi @girish agreed, it would be awesome if there was an automated way to handle this in the future.
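A variant of the find/awk command above that prints only the paths over a given limit, as a sketch. Running awk with `LC_ALL=C` makes `length()` count bytes rather than characters, which matters for non-ASCII file names since the limit is in bytes. The 156 default is simply the threshold that worked here, not a documented value.

```shell
#!/usr/bin/env bash
# Sketch: list files whose path (as printed by find) exceeds a byte limit,
# shortest first. Arguments: root directory, optional limit (default 156).
long_paths() {
  local root="$1" max_bytes="${2:-156}"
  find "$root" -type f -print \
    | LC_ALL=C awk -v max="$max_bytes" 'length($0) > max { print length($0), $0 }' \
    | sort -n
}
```

Run from the Nextcloud data directory as `long_paths . 156` to mirror the original command's relative paths.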

-

Backblaze encrypted backup with Rsync fails

Hi all, I am unable to complete a backup of a Nextcloud instance (~500GB) to Backblaze. I know Cloudron backup is not usually recommended for larger apps, but I thought I'd give it another shot now that more backup parameters are tweakable. The error I am seeing seems related to encrypted file name length; here it is:

Error uploading snapshot/app_<nextcloud app id here>/KQtuToxscu6Wp+TQwes2r+Zt37fqVz9KBKw9r3zlGFo/rLwS54KpQJ5BUWx9Wf57gBjNtYl00DkPTpRIO7DHr6E/TQkmjgv8A-8ri6mQv26IOC3SBV98HjxGQYu7Wj942Bc/uROadiwRY44JGJ7Dx8MZs9Tl43w1dViTXgkUZQELVs4/gfgicTfaoUCSMjUN1MOOBnZV3VIOt-jmyeRQIYHGPgs/yROzi4S0rB1UDLZciqxuJMHauKsCowwWvn14ymMFMCo/zK0oecogUE+CWK3JQQCDlW2mmn2Ny-BzrmGk3QokTgk/jOMbp4bhfQklbq5PlI9VYAxfjWMDqO-kd04br5t9gp4/Bdj3Hxfg03RHtuW1vhWh30hEtbB9hS75XLlvUkpdn5M-orp0303DwHg86Kj0jH+Z/hVvdpoNTbKd5ttdU9ZK5JkxtksDlRByNTlLrKZ0Kd5w/7Z2EkbU6DDooF1el4rNzETh4Hi9hW4gqC1yNKEy2byo/s788Z0aGNJt5k5t4LUbO8bmitJ+Y2lTTeYbNV-aDguk/TyEoW0Ge6PJHTg6BeGnNuN4ATowyTxWeejACY22-tgeQA7KJlA7-LVNN-ANz+xc8/DYluRyzYVRZRwR+OtWyLsND675uSdAq7WOzPwbl7tH4/jLUYu3qxS96Fj5-MwYq0YowSytZATPSJkcoBi-JbVgY/Hnvhas87QQpcrWkXBVoITd-eMTXxw6Gh4xCov9sqVsc/0y6Kh1Lt99UO6RdBTII7kgHHoPVLeSu9r-6HWmzi5MSBa9ioD6J9pkSDgI6bPq6a/7Z2EkbU6DDooF1el4rNzETh4Hi9hW4gqC1yNKEy2byo/Hnvhas87QQpcrWkXBVoITd-eMTXxw6Gh4xCov9sqVsc/1GQ+V1wtz+KCmZU1hEcQb0Wf0ZxKEAZhoejV31Cr6Lg/+tqCfG-K-nTRG3LiFmWOSqRg1HmzpiqY2ytGbHNF8Xxh4xhfznEEAfrMKhJIKDFl. Message: File name in UTF8 must be no more than 1024 bytes HTTP Code: InvalidRequest