Oh, now I see it. I would appreciate it if the "admin page/URL" didn't become obsolete, because it's not always obvious where the admin page is (as with Surfer).

Marcel C

Posts

-

Cloudron v9.2.0 makes app-menu items disappear

-

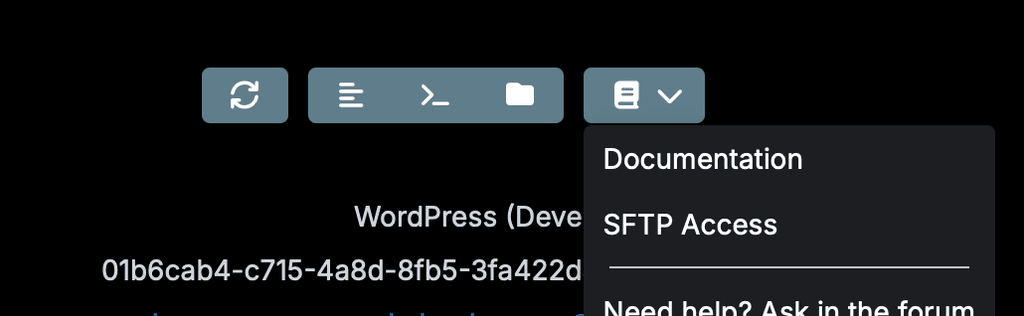

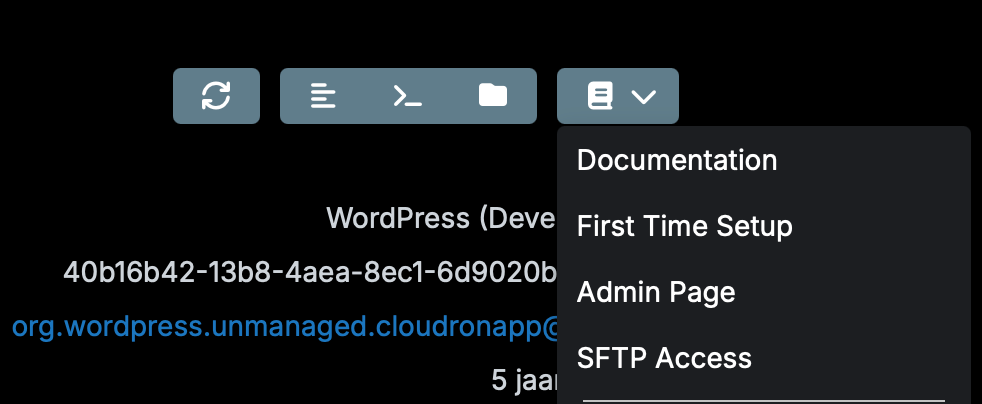

Cloudron v9.2.0 makes app-menu items disappear

I noticed that since 9.2.0 the menu below is no longer complete:

Cloudron 9.1.7:

-

client_max_body_size 2m in /api/ location blocks the large blocklists

@robi I'm not a developer, just a security-conscious Cloudron user. The reason I started this application (a LAMP app, PHP and Python) was that one of the users fell for a phishing mail and his password was then used for almost 2 months to send spam (via a direct SMTP connection, so there were no traces in IMAP). I was not able to discover this in Cloudron, which made me angry and extremely concerned: "what else is happening on my 3 Cloudrons that I don't know about because I can't see it?"

So I took out a Claude AI subscription and, in about 10 weeks of daily coding, built this application step by step, but never with the thought of distributing it. There is functionality (GUI) to add one or more Cloudrons, but, for example, there is no user management (only 1 user) and access is IP-restricted to my 2 WAN IPs via a DDNS reverse IP lookup. On the other hand, API keys (Cloudrons, AbuseIPDB, Pushover, Claude AI for the daily email summary) etc. are managed via the GUI.

Turning it into a community app is totally outside my knowledge and comfort zone. Moreover, updating the app and being (or feeling) responsible for others is too much. Maybe @staff can have a look with me to get inspired?

-

client_max_body_size 2m in /api/ location blocks the large blocklists

@nebulon I created CRMON (Cloudron Security Monitor), a self-built security monitoring platform that I run to monitor three Cloudron servers. It combines several threat intelligence sources and pushes a consolidated blocklist nightly to each Cloudron's network firewall via the /api/v1/network/blocklist API.

Beyond the blocklist, CRMON also uses the Cloudron API to continuously monitor event logs: mail delivery events, IMAP/SMTP authentication failures, user login anomalies and nginx failed login attempts are fetched every few minutes and cross-referenced against AbuseIPDB. IPs exceeding configurable abuse score thresholds are auto-blocked and synced to the firewall (hourly rather than immediately, alas, because the API does not work per IP).

The blocklist has 5 layers, each with a different purpose:

| Layer | Count | Source |
| --- | --- | --- |
| Individual auto-blocks | ~6,200 | IMAP brute-force, login anomalies, mail abuse; auto-blocked based on AbuseIPDB score thresholds |
| Wordfence (WordPress) | ~4,200 | A MU-plugin on ~25 WordPress sites reports attacker IPs in real time to CRMON, which syncs them to the firewall |
| AbuseIPDB blacklist | ~9,600 | Daily import of the top-scoring IPs from the AbuseIPDB API |
| FireHOL level1 | ~4,500 | Known malicious network CIDR ranges (botnets, scanners, exploits) |
| Geo/country blocking | ~63,000 | IP ranges (IPv4 + IPv6) for ~23 high-risk countries (CN, RU, IR, KP, etc.) |

Why it grows: The Wordfence layer is the fastest-growing (+150–280 IPs/day) as WordPress sites under management continuously see new attackers. The geo layer is the largest by far: country-level CIDR ranges, especially for China and Russia, account for the bulk of entries.

The geo ranges alone explain why we're pushing well over 80,000 entries. Without geo blocking, the list would be ~20,000 entries and the 2 MB limit would never be an issue.
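As a rough illustration of how the five layers could be folded into one consolidated list, here is a minimal Python sketch. It is not CRMON's actual code: the function name `merge_layers`, the layer keys and the sample addresses (documentation ranges) are made up for the example, and whether the real sync de-duplicates or collapses overlapping ranges is an assumption.

```python
import ipaddress


def merge_layers(layers):
    """Combine several lists of IPs/CIDRs into one de-duplicated, collapsed list."""
    v4, v6 = [], []
    for entries in layers.values():
        for entry in entries:
            # strict=False accepts bare IPs and CIDRs with host bits set
            net = ipaddress.ip_network(entry, strict=False)
            (v4 if net.version == 4 else v6).append(net)
    # collapse_addresses() only accepts one IP version per call
    merged = list(ipaddress.collapse_addresses(v4))
    merged += list(ipaddress.collapse_addresses(v6))
    return [str(net) for net in merged]


# Hypothetical sample data, one key per layer
layers = {
    "auto_blocks": ["203.0.113.7", "203.0.113.7"],           # duplicate on purpose
    "firehol": ["198.51.100.0/25", "198.51.100.128/25"],     # two halves of a /24
    "geo": ["203.0.113.0/24", "2001:db8::/32"],              # covers 203.0.113.7
}

blocklist = merge_layers(layers)
print(blocklist)
```

In this toy input the duplicate IP and the address already covered by a geo range disappear, and the two adjacent /25 ranges merge into one /24, so six raw entries become three. How much a real list would shrink depends entirely on how much the layers overlap.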

-

client_max_body_size 2m in /api/ location blocks the large blocklists

@james I've sent you a link to the current blocklist payload (~2.03 MB) via PM.

Note: this is the GET response (Python-encoded by our nightly geo sync, which doesn't escape /). The actual PHP POST payload was ~68 KB larger because PHP's json_encode() escapes every forward slash as \/ by default: one extra byte per CIDR entry, with ~68,000 CIDR entries in total. This pushed the payload to ~2.10 MB, triggering the 413.

Workaround applied: json_encode($body, JSON_UNESCAPED_SLASHES) in our PHP code reduces it back to ~2.03 MB. We also manually patched the nginx config to client_max_body_size 10m as a more structural fix.

Both are temporary workarounds, as the list grows by 300-500 IPs a day.
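The one-byte-per-CIDR overhead is easy to reproduce. The sketch below is only an illustration in Python: the sample CIDRs are made up, the real payload format may differ from a plain JSON array, and PHP's default escaping is emulated with a string replace rather than by calling PHP.

```python
import json

# Hypothetical sample entries (documentation ranges)
cidrs = ["198.51.100.0/24", "203.0.113.0/24", "2001:db8::/32"]

# Python's json.dumps() leaves "/" unescaped
plain = json.dumps(cidrs)

# Emulate PHP's default json_encode() output, which writes "/" as "\/"
php_default = plain.replace("/", "\\/")

extra_bytes = len(php_default.encode()) - len(plain.encode())
print(extra_bytes)  # one extra byte per "/" in the payload

# Both encodings decode to the same data, so only the size differs
print(json.loads(php_default) == cidrs)
```

Scaled up to ~68,000 CIDR entries, one extra byte each gives the ~68 KB difference described above.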

-

client_max_body_size 2m in /api/ location blocks the large blocklists

Following the fix in #10547 that raised the ipset limit to 262,144 elements, we ran into the next bottleneck: the nginx client_max_body_size 2m on the /api/ location in the dashboard config prevents actually reaching anywhere near that element count via the API.

In /home/yellowtent/platformdata/nginx/applications/dashboard/<hostname>.conf:

    location /api/ {
        proxy_pass http://127.0.0.1:3000;
        client_max_body_size 2m;  # ← limits POST body to ~86k entries
    }

At ~23 bytes per entry (JSON-encoded), 2 MB caps the blocklist at roughly 86,000 entries, well below the 262k ipset capacity. Anything larger returns HTTP 413.

Workaround: changing 2m to 10m in that location block fixes it immediately.

Request: could this limit be raised (or set to 0) in a future Cloudron release? Given the server-level client_max_body_size 0 already applies to all app traffic, the 2m restriction on /api/ seems overly conservative for the network blocklist endpoint.
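For anyone who wants to redo the arithmetic with their own numbers, here is a small Python sketch. The 23 bytes per entry is the rough average from this post, not a measured constant, and the helper names are made up for the example. nginx interprets the m suffix as mebibytes (1024 × 1024 bytes).

```python
MIB = 1024 * 1024          # nginx reads the "m" suffix as mebibytes
BYTES_PER_ENTRY = 23       # rough JSON-encoded average quoted in the post
IPSET_CAPACITY = 262_144   # ipset limit mentioned in the post


def max_entries(limit_bytes, bytes_per_entry=BYTES_PER_ENTRY):
    """How many entries fit in a request body of limit_bytes."""
    return limit_bytes // bytes_per_entry


def bytes_needed(entries, bytes_per_entry=BYTES_PER_ENTRY):
    """Approximate body size for a given number of entries."""
    return entries * bytes_per_entry


for label, limit in (("2m", 2 * MIB), ("10m", 10 * MIB)):
    print(f"{label}: about {max_entries(limit):,} entries")

full = bytes_needed(IPSET_CAPACITY)
print(f"full ipset: {full:,} bytes ({full / MIB:.2f} MiB)")
```

With exactly 23 bytes per entry the 2m limit comes out at about 91,000 entries, in the same ballpark as the ~86,000 quoted above (which corresponds to an average of a little over 24 bytes). Under the same assumption a full 262,144-element ipset would need roughly 5.75 MiB, so 10m would leave headroom.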

-

Reverse geocoding (addition to Dawarich)

Dawarich can be set up with "reverse geocoding", which is the process of converting geographic coordinates into a human-readable address.

The result is that Dawarich can create "visits and places", this feature tracks your visits to locations to build a comprehensive picture of your daily activities and travel history.

For this Dawarich recommends:

Currently, Dawarich supports 4 options for reverse geocoding services:

- Geoapify (free, limited usage, see Geoapify pricing)

- Photon (free, limited usage, 1 request per second)

- Self-hosted Photon (free, unlimited usage)

- Nominatim (free, limited usage, see Nominatim usage policy) or self-hosted Nominatim instance (free, unlimited usage)

So a new Cloudron app for either Photon or Nominatim would be very welcome to get the most out of Dawarich.

-

Dovecot > Sieve > central management

@James does moving this from 'support' to 'feature requests' mean it's currently not possible?

-

Dawarich signup env doesn't prevent from signing up

Oh wait, apparently there was an update somewhere and the setting is now in the app GUI! Everyone, be sure to check this, as the env variable no longer works!

@staff please correct this in the docs

-

Dawarich signup env doesn't prevent from signing up

Hi guys,

this is a serious issue: setting this https://docs.cloudron.io/packages/dawarich#disable-registration doesn't work, I can still sign up ...

-

Dovecot > Sieve > central management

I was wondering if it is possible in Cloudron to manage Sieve centrally (for example via the API or otherwise) with server-wide rules?

Currently the only way to "push" server-wide "marks" on incoming email is via "Email Address Blocklist" but the result of that is: the mail is still delivered to the user's spam-folder.

I'm looking for a way to reject (discard) emails based on the sender (I know it's possible by sender IP address via the blocklist).

-

What's coming in Cloudron 10

@imc67 yes, that's part of the plan

@girish Would it be feasible to change the current methodology from text lists to database records for all block/allow-lists (mail, IP)? I manage the IP blocklist via the API, with around 90k rows, and update it every hour with new entries. It takes about 2-3 minutes for each Cloudron server to "handle" this. It would be better to have API functionality with "list", "add" and "remove" commands.
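To show what "add" and "remove" calls would save compared with re-sending all ~90k rows, here is a minimal Python sketch of the delta computation. It is purely hypothetical: no such per-entry endpoints are described in this thread, and the function name and sample data are invented for the example.

```python
def blocklist_delta(current, desired):
    """Return the entries to add and to remove to get from current to desired."""
    current_set, desired_set = set(current), set(desired)
    return {
        "add": sorted(desired_set - current_set),
        "remove": sorted(current_set - desired_set),
    }


# Hypothetical sample data (documentation ranges)
current = ["198.51.100.0/24", "203.0.113.7"]
desired = ["198.51.100.0/24", "203.0.113.9", "2001:db8::/32"]

delta = blocklist_delta(current, desired)
print(delta)
```

With an hourly change of a few dozen IPs, only those few entries would have to travel over the API and be applied, instead of the whole list each time.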

-

How to update IP database?

Does it update the database itself (I guess the IP-location info changes often?), or is an app update still needed? If so, how often will the app be updated?

-

How to update IP database?

@nebulon sounds like "an update of the app package is coming soon"?

-

How to update IP database?

It's not in the docs, and the forum posts are also not 100% clear on the answer to: how does this app update its IP2Location database?

-

Feature Request: 🔥 Simple per-App WAF with Templates (KISS) 🏰

You got an upvote from me in any event, but for this feature: https://docs.cloudron.io/guides/community/blocklist-updates

Haha, true, that's my code (with your additions), I know, but it's not per app.

-

What's coming in Cloudron 10

@girish does this include the email spam whitelist mentioned here, with API and GUI?

https://forum.cloudron.io/topic/15310/spam-acl-whitelist-in-api-but-not-in-gui/

-

WARN on saving Custom Spamassassin rules

@p44 with the excellent help of @d19dotca in https://forum.cloudron.io/topic/4770/sharing-custom-spamassassin-rules I have been using his latest versions since 2021. With my three different Cloudrons (together 65 users and 92 mailboxes) the spam was reduced to about 7%.

However, some glitches made me ask ChatGPT for a review. Below are my version as of today and ChatGPT's explanation of the changes:

After using the initial ruleset in production, I adjusted the scores with one main goal: reduce false positives without weakening real spam/phishing detection.

Key Changes & Reasoning

- DNSBL scores reduced slightly

  Some lists (e.g. PBL, SBL, XBL) were too dominant; one hit could almost classify a message as spam. Lowered them so they still matter, but now require supporting signals.

- URIBL rebalanced

  Generic SURBL scores were reduced, while high-confidence Spamhaus DBL categories (phish/malware/botnet) remain strong. This avoids penalizing legitimate platforms with mixed reputation.

- SPF/DKIM/DMARC normalized

  - Lowered impact of SPF softfail (common with forwarding)
  - Reduced trust in SPF/DKIM whitelists
  - Added KAM rules for better DMARC/reputation context

- HTML/content rules softened

  BODY_URI_ONLY reduced to avoid flagging legitimate newsletters and link-heavy emails.

- Freemail & Reply-To tuning

  Lowered FREEMAIL_REPLYTO to reduce false positives from SaaS/CRM systems.

- RDNS/network heuristics reduced

  Dynamic IP / missing rDNS is common in cloud setups, so these are now weaker signals.

- Phishing & anomaly signals slightly tuned down

  High-risk rules (like URI_PHISH) still strong, but less likely to trigger on borderline cases.

- Date anomalies reduced

  Time drift and header inconsistencies happen often in legitimate mail, so these are now low-weight.

Result

- Fewer false positives (especially newsletters, SaaS, forwarded mail)
- Spam now typically requires multiple signals instead of one strong hit
- Phishing/malware detection remains solid

Overall, the system is now more balanced and closer to how modern email behaves.

    # ============================
    # Bayesian Filtering (BAYES)
    # ============================
    bayes_auto_learn 1
    bayes_auto_learn_threshold_spam 6.0
    score BAYES_00 -7.0
    score BAYES_05 -4.0
    score BAYES_20 -1.0
    score BAYES_40 0.5
    score BAYES_50 0.75
    score BAYES_60 2.25
    score BAYES_80 3.75
    score BAYES_95 6.5
    score BAYES_99 8.0
    score BAYES_999 8.5

    # ============================
    # DNS-based Blocklists (DNSBL)
    # ============================
    score RCVD_IN_BL_SPAMCOP_NET 4.0
    score RCVD_IN_IADB_DK 0.0
    score RCVD_IN_IADB_DOPTIN_LT50 0.0
    score RCVD_IN_IADB_LISTED 0.0
    score RCVD_IN_IADB_RDNS -0.25
    score RCVD_IN_IADB_SENDERID -0.25
    score RCVD_IN_IADB_SPF -0.25
    score RCVD_IN_MSPIKE_BL 0.0
    score RCVD_IN_MSPIKE_L2 1.0
    score RCVD_IN_MSPIKE_L3 1.5
    score RCVD_IN_MSPIKE_L4 3.5
    score RCVD_IN_MSPIKE_L5 4.0
    score RCVD_IN_MSPIKE_ZBI 4.0
    score RCVD_IN_PBL 4.0
    score RCVD_IN_PSBL 4.0
    score RCVD_IN_SBL 4.5
    score RCVD_IN_SBL_CSS 3.5
    score RCVD_IN_VALIDITY_CERTIFIED 0.0
    score RCVD_IN_VALIDITY_RPBL 0.0
    score RCVD_IN_VALIDITY_SAFE 0.0
    score RCVD_IN_XBL 5.5
    score RCVD_IN_ZEN_BLOCKED 0.0
    score RCVD_IN_ZEN_BLOCKED_OPENDNS 0.0

    ## DNS Whitelists
    score RCVD_IN_DNSWL_BLOCKED 0.0
    score RCVD_IN_DNSWL_HI -4.0
    score RCVD_IN_DNSWL_LOW -1.0
    score RCVD_IN_DNSWL_MED -4.5
    score RCVD_IN_DNSWL_NONE 0.0
    score RCVD_IN_MSPIKE_H2 0.0
    score RCVD_IN_MSPIKE_H3 -0.25
    score RCVD_IN_MSPIKE_H4 -0.5
    score RCVD_IN_MSPIKE_H5 -1.0
    score RCVD_IN_MSPIKE_WL 0.0

    # ============================
    # URI Blocklists (URIBL)
    # ============================
    score URIBL_ABUSE_SURBL 4.5
    score URIBL_BLACK 4.0
    score URIBL_CR_SURBL 3.5
    score URIBL_CSS 3.0
    score URIBL_CSS_A 5.0
    score URIBL_DBL_ABUSE_BOTCC 5.5
    score URIBL_DBL_ABUSE_MALW 5.5
    score URIBL_DBL_ABUSE_PHISH 5.5
    score URIBL_DBL_ABUSE_REDIR 2.0
    score URIBL_DBL_ABUSE_SPAM 5.5
    score URIBL_DBL_BLOCKED 0.0
    score URIBL_DBL_BLOCKED_OPENDNS 0.0
    score URIBL_DBL_BOTNETCC 5.5
    score URIBL_DBL_ERROR 0.0
    score URIBL_DBL_MALWARE 5.0
    score URIBL_DBL_PHISH 6.0
    score URIBL_DBL_SPAM 6.0
    score URIBL_GREY 0.25
    score URIBL_MW_SURBL 5.0
    score URIBL_PH_SURBL 5.0
    score URIBL_RED 2.0
    score URIBL_RHS_DOB 2.0
    score URIBL_SBL 4.0
    score URIBL_SBL_A 3.0
    score URIBL_ZEN_BLOCKED 0.0
    score URIBL_ZEN_BLOCKED_OPENDNS 0.0

    # ============================
    # Email Authentication (SPF/DKIM/ARC)
    # ============================
    score ARC_INVALID 2.0
    score ARC_SIGNED 0.0
    score ARC_VALID 0.0
    score DKIM_ADSP_ALL 2.0
    score DKIM_ADSP_CUSTOM_MED 1.5
    score DKIM_ADSP_NXDOMAIN 4.5
    score DKIM_INVALID 3.5
    score DKIM_SIGNED 0.0
    score DKIM_VALID 0.0
    score DKIM_VALID_AU 0.0
    score DKIM_VALID_EF 0.0
    score DKIM_VERIFIED 0.0
    score DKIMWL_BL 3.0
    score DKIMWL_WL_HIGH -4.5
    score DKIMWL_WL_MED -3.5
    score DKIMWL_WL_MEDHI -4.0
    score FORGED_SPF_HELO 4.0
    score NML_ADSP_CUSTOM_MED 2.0
    score SPF_FAIL 4.5
    score SPF_HELO_FAIL 3.0
    score SPF_HELO_NEUTRAL 1.0
    score SPF_HELO_NONE 0.0
    score SPF_HELO_PASS -0.25
    score SPF_HELO_SOFTFAIL 4.0
    score SPF_NEUTRAL 0.0
    score SPF_NONE 1.0
    score SPF_PASS 0.0
    score SPF_SOFTFAIL 1.0
    score T_SPF_HELO_PERMERROR 0.0
    score T_SPF_HELO_TEMPERROR 0.0
    score T_SPF_PERMERROR 0.0
    score T_SPF_TEMPERROR 0.0
    score USER_IN_DEF_DKIM_WL -4.5
    score USER_IN_DEF_SPF_WL -4.0
    score DMARC_FAIL 5.0
    score KAM_DMARC_STATUS 2.0
    score KAM_SHORT 1.5
    score KAM_DMARC_REJECT 3.5
    score KAM_DMARC_QUARANTINE 2.5
    score KAM_REPUTATION 2.0

    # ============================
    # HTML & MIME Structure Rules
    # ============================
    score BODY_URI_ONLY 2.5
    score DC_PNG_UNO_LARGO 1.5
    score HTML_FONT_LOW_CONTRAST 0.0
    score HTML_FONT_SIZE_LARGE 2.0
    score HTML_FONT_TINY_NORDNS 0.0
    score HTML_IMAGE_ONLY_04 1.0
    score HTML_IMAGE_ONLY_08 1.5
    score HTML_IMAGE_ONLY_16 2.5
    score HTML_IMAGE_ONLY_24 3.5
    score HTML_IMAGE_ONLY_32 4.5
    score HTML_IMAGE_RATIO_02 0.25
    score HTML_IMAGE_RATIO_04 0.25
    score HTML_IMAGE_RATIO_06 0.25
    score HTML_IMAGE_RATIO_08 0.25
    score HTML_MESSAGE 0.0
    score HTML_MIME_NO_HTML_TAG 0.5
    score HTML_OBFUSCATE_05_10 0.5
    score HTML_OBFUSCATE_10_20 1.0
    score HTML_OBFUSCATE_20_30 2.0
    score HTML_OBFUSCATE_30_40 2.5
    score HTML_OBFUSCATE_50_60 3.0
    score HTML_OBFUSCATE_70_80 3.5
    score HTML_OBFUSCATE_90_100 4.0
    score HTML_SHORT_LINK_IMG_1 2.0
    score HTML_SHORT_LINK_IMG_2 3.0
    score HTML_SHORT_LINK_IMG_3 3.0
    score HTML_TAG_BALANCE_CENTER 0.25
    score MIME_BASE64_TEXT 1.25
    score MIME_HEADER_CTYPE_ONLY 0.5
    score MIME_HTML_MOSTLY 0.0
    score MIME_HTML_ONLY 0.0
    score MIME_QP_LONG_LINE 0.25
    score MPART_ALT_DIFF 0.75
    score MPART_ALT_DIFF_COUNT 0.5
    score T_KAM_HTML_FONT_INVALID 0.25
    score T_TVD_MIME_EPI 0.25

    # ============================
    # Header / Envelope Heuristics
    # ============================
    score HDRS_MISSP 4.0
    score HEADER_FROM_DIFFERENT_DOMAINS 0.0
    score HK_RANDOM_ENVFROM 3.0
    score MAILING_LIST_MULTI 0.25
    score MISSING_DATE 2.5
    score MISSING_FROM 2.0
    score MISSING_HB_SEP 2.0
    score MISSING_HEADERS 6.0
    score MISSING_MID 1.0
    score MISSING_SUBJECT 1.0
    score MSGID_OUTLOOK_INVALID 2.5
    score NO_FM_NAME_IP_HOSTN 2.0
    score REPLYTO_WITHOUT_TO_CC 2.5
    score TO_NO_BRKTS_FROM_MSSP 2.5
    score TO_NO_BRKTS_MSFT 2.5
    score TVD_RCVD_IP 1.0

    # ============================
    # Freemail & Identity Rules
    # ============================
    score FORGED_GMAIL_RCVD 3.0
    score FORGED_MUA_OUTLOOK 3.0
    score FORGED_YAHOO_RCVD 3.0
    score FREEMAIL_ENVFROM_END_DIGIT 0.75
    score FREEMAIL_FORGED_REPLYTO 3.5
    score FREEMAIL_FROM 0.0
    score FREEMAIL_REPLY 0.5
    score FREEMAIL_REPLYTO 1.75
    score FREEMAIL_REPLYTO_END_DIGIT 0.0
    score FROM_EXCESS_BASE64 2.5
    score FROM_FMBLA_NEWDOM 2.5
    score FROM_FMBLA_NEWDOM14 3.0
    score FROM_FMBLA_NEWDOM28 2.5
    score FROM_GOV_SPOOF 3.5
    score FROM_LOCAL_DIGITS 1.5
    score FROM_LOCAL_HEX 1.5
    score FROM_LOCAL_NOVOWEL 1.5
    score FROM_MISSP_EH_MATCH 3.0
    score FROM_MISSP_SPF_FAIL 4.0
    score FROM_MISSPACED 3.0
    score FROM_NTLD_REPLY_FREEMAIL 3.0
    score FROM_STARTS_WITH_NUMS 1.0
    score FROM_SUSPICIOUS_NTLD 2.0
    score FROM_SUSPICIOUS_NTLD_FP 2.0
    score GB_FREEMAIL_DISPTO 3.5
    score GB_FREEMAIL_DISPTO_NOTFREEM 3.5
    score HK_NAME_MR_MRS 2.5
    score HK_RANDOM_FROM 1.5
    score UNDISC_FREEM 2.5

    # ============================
    # Scam, Phishing & Social Engineering
    # ============================
    score ADVANCE_FEE_2 3.0
    score ADVANCE_FEE_2_NEW_FORM 3.0
    score ADVANCE_FEE_2_NEW_MONEY 3.0
    score ADVANCE_FEE_3 3.0
    score ADVANCE_FEE_3_NEW 3.0
    score ADVANCE_FEE_3_NEW_FORM 3.0
    score ADVANCE_FEE_3_NEW_MONEY 3.0
    score ADVANCE_FEE_4_NEW 3.0
    score ADVANCE_FEE_5_NEW 3.0
    score ADVANCE_FEE_5_NEW_FRM_MNY 3.0
    score ADVANCE_FEE_5_NEW_MONEY 3.0
    score BILLION_DOLLARS 1.0
    score BITCOIN_DEADLINE 5.5
    score BITCOIN_SPAM_03 5.5
    score DEAR_FRIEND 2.0
    score DEAR_SOMETHING 2.0
    score DIET_1 1.0
    score FUZZY_BITCOIN 2.5
    score FUZZY_BTC_WALLET 2.5
    score FUZZY_CLICK_HERE 1.5
    score FUZZY_CREDIT 2.0
    score FUZZY_IMPORTANT 2.5
    score FUZZY_SECURITY 2.75
    score FUZZY_UNSUBSCRIBE 1.0
    score FUZZY_WALLET 2.0
    score JOIN_MILLIONS 2.0
    score LOTS_OF_MONEY 0.0
    score MONEY_BACK 1.0
    score NA_DOLLARS 1.0
    score PDS_BTC_ID 4.0
    score STOX_BOUND_090909_B 1.5
    score SUBJ_ALL_CAPS 0.5
    score SUBJ_AS_SEEN 0.75
    score SUBJ_ATTENTION 1.5
    score SUBJ_DOLLARS 0.25
    score SUBJ_YOUR_DEBT 2.5
    score SUBJ_YOUR_FAMILY 0.75
    score THIS_AD 0.5
    score TVD_PH_BODY_ACCOUNTS_PRE 2.0
    score TVD_PH_BODY_META 1.5
    score UNCLAIMED_MONEY 4.0
    score URG_BIZ 1.5
    score VFY_ACCT_NORDNS 3.0

    # ============================
    # Transport / Network Reputation Rules
    # ============================
    score CK_HELO_GENERIC 1.5
    score HELO_DYNAMIC_IPADDR 3.0
    score HELO_DYNAMIC_IPADDR2 3.0
    score HELO_DYNAMIC_SPLIT_IP 2.0
    score KHOP_HELO_FCRDNS 4.0
    score NO_RDNS_DOTCOM_HELO 3.0
    score PDS_BAD_THREAD_QP_64 1.5
    score PDS_RDNS_DYNAMIC_FP 0.5
    score RCVD_HELO_IP_MISMATCH 1.75
    score RCVD_ILLEGAL_IP 4.0
    score RDNS_DYNAMIC 2.5
    score RDNS_LOCALHOST 3.5
    score RDNS_NONE 2.5
    score SPAMMY_XMAILER 2.75
    score TBIRD_SUSP_MIME_BDRY 2.5
    score UNPARSEABLE_RELAY 0.0

    # ============================
    # URI & Link Obfuscation
    # ============================
    score GOOG_REDIR_NORDNS 2.5
    score HTTPS_HTTP_MISMATCH 1.5
    score NORMAL_HTTP_TO_IP 3.0
    score NUMERIC_HTTP_ADDR 3.0
    score PDS_SHORT_SPOOFED_URL 3.0
    score SENDGRID_REDIR 0.25
    score T_PDS_OTHER_BAD_TLD 2.5
    score TRACKER_ID 0.25
    score URI_HEX 2.0
    score URI_NO_WWW_BIZ_CGI 2.5
    score URI_NO_WWW_INFO_CGI 2.5
    score URI_NOVOWEL 0.5
    score URI_OBFU_WWW 3.0
    score URI_PHISH 5.5
    score URI_TRUNCATED 3.0
    score URI_WP_HACKED 6.0
    score WEIRD_PORT 4.5

    # ============================
    # Miscellaneous Heuristics & Content Triggers
    # ============================
    score ALIBABA_IMG_NOT_RCVD_ALI 2.5
    score BIGNUM_EMAILS_FREEM 2.5
    score BIGNUM_EMAILS_MANY 2.5
    score DATE_IN_FUTURE_06_12 1.5
    score DATE_IN_PAST_03_06 1.5
    score DATE_IN_PAST_06_12 1.5
    score ENV_AND_HDR_SPF_MATCH -4.0
    score FILL_THIS_FORM 0.5
    score FILL_THIS_FORM_LONG 0.5
    score INVESTMENT_ADVICE 0.5
    score MALWARE_NORDNS 5.0
    score PLING_QUERY 1.0
    score SHOPIFY_IMG_NOT_RCVD_SFY 0.75
    score STOX_REPLY_TYPE 2.0
    score STOX_REPLY_TYPE_WITHOUT_QUOTES 3.0
    score SUSPICIOUS_RECIPS 2.5
    score T_FILL_THIS_FORM_SHORT 0.25
    score T_REMOTE_IMAGE 0.25
    score TVD_SPACE_RATIO_MINFP -0.25

    # ============================
    # Spam Eating Monkey DNSBL lists
    # ============================
    header RCVD_IN_SEM_BACKSCATTER eval:check_rbl('sembackscatter-lastexternal','backscatter.spameatingmonkey.net')
    describe RCVD_IN_SEM_BACKSCATTER Received from an IP listed by Spam Eating Monkey Backscatter list
    tflags RCVD_IN_SEM_BACKSCATTER net
    score RCVD_IN_SEM_BACKSCATTER 3.0

    header RCVD_IN_SEM_BLACK eval:check_rbl('semblack-lastexternal','bl.spameatingmonkey.net')
    describe RCVD_IN_SEM_BLACK Received from an IP listed by Spam Eating Monkey Blocklist
    tflags RCVD_IN_SEM_BLACK net
    score RCVD_IN_SEM_BLACK 3.0

    header RCVD_IN_SEM_NETBLACK eval:check_rbl('semnetblack-lastexternal','netbl.spameatingmonkey.net')
    describe RCVD_IN_SEM_NETBLACK Received from an IP listed by Spam Eating Monkeys Network Blocklist
    tflags RCVD_IN_SEM_NETBLACK net
    score RCVD_IN_SEM_NETBLACK 1.5

    urirhssub SEM_FRESH30 fresh30.spameatingmonkey.net. A 2
    body SEM_FRESH30 eval:check_uridnsbl('SEM_FRESH30')
    describe SEM_FRESH30 Contains a domain registered less than 30 days ago
    tflags SEM_FRESH30 net
    score SEM_FRESH30 3.5

    urirhssub SEM_URI_BLACK uribl.spameatingmonkey.net. A 2
    body SEM_URI_BLACK eval:check_uridnsbl('SEM_URI')
    describe SEM_URI_BLACK Contains a URI listed by Spam Eating Monkeys URI Blocklist
    tflags SEM_URI_BLACK net
    score SEM_URI_BLACK 2.5

    # ============================
    # JunkEmailFilter HostKarma DNSBL & DNSWL
    # ============================
    header __RCVD_IN_HOSTKARMA eval:check_rbl('hostkarma','hostkarma.junkemailfilter.com.')
    describe __RCVD_IN_HOSTKARMA Sender listed in JunkEmailFilter
    tflags __RCVD_IN_HOSTKARMA net

    header RCVD_IN_HOSTKARMA_BL eval:check_rbl_sub('hostkarma','127.0.0.2')
    describe RCVD_IN_HOSTKARMA_BL Sender listed in HOSTKARMA-BLACK
    tflags RCVD_IN_HOSTKARMA_BL net
    score RCVD_IN_HOSTKARMA_BL 1.0

    header RCVD_IN_HOSTKARMA_BR eval:check_rbl_sub('hostkarma','127.0.0.4')
    describe RCVD_IN_HOSTKARMA_BR Sender listed in HOSTKARMA-BROWN
    tflags RCVD_IN_HOSTKARMA_BR net
    score RCVD_IN_HOSTKARMA_BR 0.5

    header RCVD_IN_HOSTKARMA_W eval:check_rbl_sub('hostkarma','127.0.0.1')
    describe RCVD_IN_HOSTKARMA_W Sender listed in HOSTKARMA-WHITE
    tflags RCVD_IN_HOSTKARMA_W net nice
    score RCVD_IN_HOSTKARMA_W -1.0

    # ============================
    # SpamRATS DNSBL
    # ============================
    header __RCVD_IN_SPAMRATS eval:check_rbl('spamrats','all.spamrats.com.')
    describe __RCVD_IN_SPAMRATS SPAMRATS: sender is listed in SpamRATS
    tflags __RCVD_IN_SPAMRATS net
    reuse __RCVD_IN_SPAMRATS

    header RCVD_IN_SPAMRATS_DYNA eval:check_rbl_sub('spamrats','127.0.0.36')
    describe RCVD_IN_SPAMRATS_DYNA RATS-Dyna: sent directly from dynamic IP address
    tflags RCVD_IN_SPAMRATS_DYNA net
    reuse RCVD_IN_SPAMRATS_DYNA
    score RCVD_IN_SPAMRATS_DYNA 2.25

    header RCVD_IN_SPAMRATS_NOPTR eval:check_rbl_sub('spamrats','127.0.0.37')
    describe RCVD_IN_SPAMRATS_NOPTR RATS-NoPtr: sender has no reverse DNS
    tflags RCVD_IN_SPAMRATS_NOPTR net
    reuse RCVD_IN_SPAMRATS_NOPTR
    score RCVD_IN_SPAMRATS_NOPTR 2.5

    header RCVD_IN_SPAMRATS_SPAM eval:check_rbl_sub('spamrats','127.0.0.38')
    describe RCVD_IN_SPAMRATS_SPAM RATS-Spam: sender is a spam source
    tflags RCVD_IN_SPAMRATS_SPAM net
    reuse RCVD_IN_SPAMRATS_SPAM
    score RCVD_IN_SPAMRATS_SPAM 4.5

    # ============================
    # Ascams RBLs (IP Reputation)
    # ============================
    header RCVD_IN_ASCAMS_BLOCK eval:check_rbl('ascams_block','block.ascams.com.')
    describe RCVD_IN_ASCAMS_BLOCK Sender listed in Ascams Block RBL
    tflags RCVD_IN_ASCAMS_BLOCK net
    score RCVD_IN_ASCAMS_BLOCK 0.0

    header RCVD_IN_ASCAMS_DROP eval:check_rbl('ascams_white','dnsbl.ascams.com.')
    describe RCVD_IN_ASCAMS_DROP Sender listed in Ascams DROP list
    tflags RCVD_IN_ASCAMS_DROP nice net
    score RCVD_IN_ASCAMS_DROP 3.5

    # ============================
    # DroneBL DNSBL
    # ============================
    header RCVD_IN_DRONEBL eval:check_rbl('dronebl','dnsbl.dronebl.org.')
    describe RCVD_IN_DRONEBL Sender listed in DroneBL (suspected bot/malware)
    tflags RCVD_IN_DRONEBL net
    score RCVD_IN_DRONEBL 2.0

    # ============================
    # GBUDB Truncate DNSBL
    # ============================
    header RCVD_IN_GBUDB_TRUNCATE eval:check_rbl('gbudb','truncate.gbudb.net.')
    describe RCVD_IN_GBUDB_TRUNCATE Sender listed in GBUDB Truncate
    tflags RCVD_IN_GBUDB_TRUNCATE net
    score RCVD_IN_GBUDB_TRUNCATE 5.0

    # ============================
    # Usenix S5H
    # ============================
    header RCVD_IN_S5H_BL eval:check_rbl_txt('s5hbl','all.s5h.net.')
    describe RCVD_IN_S5H_BL Listed at all.s5h.net
    tflags RCVD_IN_S5H_BL net
    score RCVD_IN_S5H_BL 1.5

    # ============================
    # Backscatterer.org
    # ============================
    header RCVD_IN_BACKSCATTERER eval:check_rbl('backscatterer','ips.backscatterer.org.')
    describe RCVD_IN_BACKSCATTERER IP listed in Backscatterer (backscatter spam)
    tflags RCVD_IN_BACKSCATTERER net
    score RCVD_IN_BACKSCATTERER 2.25
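To make the "multiple signals instead of one strong hit" point concrete, the Python sketch below adds up a few scores taken from the ruleset above. It is only an illustration: the threshold of 5.0 is SpamAssassin's stock required_score and is an assumption here (the server may be configured differently), the helper names are hypothetical, and real scoring involves many more rules than these four.

```python
REQUIRED_SCORE = 5.0  # assumption: SpamAssassin's stock default threshold

# A few scores copied from the ruleset above
SCORES = {
    "RCVD_IN_PBL": 4.0,
    "SPF_SOFTFAIL": 1.0,
    "RDNS_NONE": 2.5,
    "URIBL_DBL_PHISH": 6.0,
}


def total_score(hits):
    """Sum the scores of all rules that matched a message."""
    return sum(SCORES[name] for name in hits)


def is_spam(hits, required=REQUIRED_SCORE):
    """A message counts as spam once its total reaches the threshold."""
    return total_score(hits) >= required


for hits in (
    ["RCVD_IN_PBL"],                # one DNSBL hit alone
    ["RCVD_IN_PBL", "RDNS_NONE"],   # DNSBL hit plus a supporting signal
    ["URIBL_DBL_PHISH"],            # high-confidence phishing URI alone
):
    print(hits, total_score(hits), is_spam(hits))
```

Under these assumptions a single PBL hit stays below the threshold, PBL plus a missing rDNS crosses it, and a Spamhaus DBL phishing hit is still enough on its own.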

-

WARN on saving Custom Spamassassin rules

-

WARN on saving Custom Spamassassin rules

I just edited the Custom Spamassassin rules, and on saving, the logs show the output below. Is this normal, and does it work?

    Apr 14 11:57:51 [POST] /spam_acl
    Apr 14 11:57:51 2026-04-14 09:57:51,747 INFO waiting for spamd to stop
    Apr 14 11:57:51 2026-04-14 09:57:51,787 INFO stopped: spamd (exit status 0)
    Apr 14 11:57:51 2026-04-14 09:57:51,787 INFO reaped unknown pid 97370 (terminated by SIGINT)
    Apr 14 11:57:51 2026-04-14 09:57:51,787 INFO reaped unknown pid 97371 (terminated by SIGINT)
    Apr 14 11:57:51 2026-04-14 09:57:51,790 INFO spawned: 'spamd' with pid 98084
    Apr 14 11:57:52 2026-04-14 09:57:52,792 INFO success: spamd entered RUNNING state, process has stayed up for > than 1 seconds (startsecs)
    Apr 14 11:57:52 [POST] /spam_custom_config
    Apr 14 11:57:53 Apr 14 09:57:53.363 [98087] warn: config: path "/root/.spamassassin" is inaccessible: Permission denied
    Apr 14 11:57:53 Apr 14 09:57:53.363 [98087] warn: config: path "/root/.spamassassin/user_prefs" is inaccessible: Permission denied
    Apr 14 11:57:53 Apr 14 09:57:53.436 [98087] warn: config: registryboundaries: no tlds defined, need to run sa-update
    Apr 14 11:57:53 Apr 14 09:57:53.445 [98087] warn: config: path "/root/.spamassassin" is inaccessible: Permission denied
    Apr 14 11:57:53 2026-04-14 09:57:53,643 INFO waiting for spamd to stop
    Apr 14 11:57:53 2026-04-14 09:57:53,699 INFO stopped: spamd (exit status 0)
    Apr 14 11:57:53 2026-04-14 09:57:53,703 INFO spawned: 'spamd' with pid 98090
    Apr 14 11:57:54 2026-04-14 09:57:54,706 INFO success: spamd entered RUNNING state, process has stayed up for > than 1 seconds (startsecs)