Apache, OLS and Nginx-Custom Benchmarks

-

Hi!

In the next few days we will start testing 2 of the images we have prepared.

We would like to know which performance data you would most like to see.

Our testing environment will be:

- 2 Epyc vCores

- 2 GB DDR4 ECC RAM

- NVMe PCIe Gen 3

The app will be tested with 500 MB of RAM and 500 MB of swap.

Our goal is for our images to perform similarly to other available WordPress hosting setups. For this we plan to test:

- Cloudron standard Apache setup (unmanaged)

- OLS app that we developed early this summer (it will be on the store in early December)

- Nginx custom build, with the PageSpeed module and some server-side tweaks to improve performance.

All 3 with these combinations:

- With and without Redis object cache

- With one or more cache plugins (which one is your favourite?), to test their performance with these 3 setups

- With server-side cache only
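
To get a feel for how many runs the plan above implies, here is a minimal sketch that enumerates the combinations. The labels are paraphrased from the lists above, and the assumption that every web server is crossed with every cache option is mine, not something the post states.

```python
from itertools import product

# Labels paraphrased from the post; the full cross product is an assumption.
SERVERS = ["apache", "ols", "nginx-custom"]
REDIS_OBJECT_CACHE = [True, False]
PAGE_CACHE = ["cache-plugin", "server-side-only"]


def build_matrix():
    """Return one dict per planned test run."""
    return [
        {"server": server, "redis_object_cache": redis, "page_cache": cache}
        for server, redis, cache in product(SERVERS, REDIS_OBJECT_CACHE, PAGE_CACHE)
    ]


if __name__ == "__main__":
    for run in build_matrix():
        print(run)
```

Under these assumptions that is 3 x 2 x 2 runs before any concurrency levels are added.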

If you have any advice on testing tools to use, or on other WP setups to test, just comment; every thought is appreciated.

Matt.

-

@moocloud_matt it would be interesting to add a few WP WAFs to the mix, like WP Cerber and other WP firewalls, to see the performance impact of adding them versus the benefit.

-

@robi

naxsi is probably included in our nginx build.

-

I have used ab extensively for load testing - https://www.digitalocean.com/community/tutorials/how-to-use-apachebench-to-do-load-testing-on-an-ubuntu-13-10-vps . You can send out concurrent requests easily.

@girish ab is great, and jMeter too. Those are the two we use most frequently at work as they're the easiest but also give us the least trouble / cause less issues downstream when load testing (though the products I support at my day job are very complex API Gateways which have a habit of being temperamental lol)

-

Blazemeter makes a really slick tool called Taurus - https://gettaurus.org - which makes using the heavyweight Jmeter miles easier and like 1000x cooler-looking in your shell. Definitely recommend it.

@jimcavoli haha, yeah Blazemeter is a great product and so is Taurus. I actually work for the company that makes it too (though not on their team, I work on another product portfolio in the company), full disclosure.

-

@moocloud_matt Where will you be posting these benchmarks? This is a really fantastic initiative.

-

@lonk

Right here in this thread, probably this week, in 2 or 3 days. I also want to point out that we will work mainly with server-side caching, apart from OLS, which integrates its server-side cache with WordPress.

-

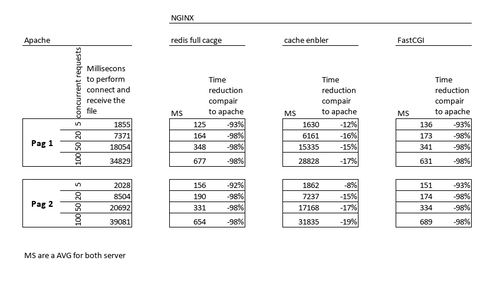

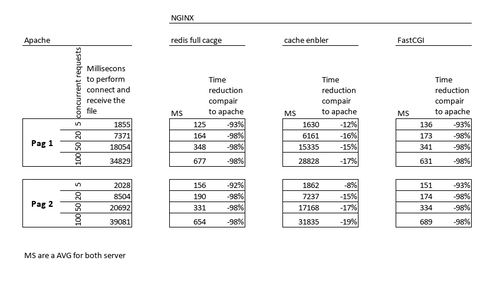

So here we are with some data and information.

To find out which solution was the best, we actually had to create 3 images for Nginx:

- FastCGI Cache

- Redis Full Page Cache

- Cache Enabler (HTML file cache). This one is here to show app-level caching with direct integration with the web server; the request is not passed to FPM.

Our main image will be Redis Full Page Cache until RAM disks are supported inside the container; then we will see how FastCGI performs.

Basic information

All the tests run on our cloud platform, hosted in 2 datacenters on 2 different servers.

Server 1:

- 2 vCPU Intel Xeon

- 2 GB RAM

- NVMe SSD on an M.2 slot (over the chipset) in RAID 1

Server 2:

- 2 vCPU AMD Epyc

- 2 GB RAM

- NVMe SSD on PCIe, connected directly to the CPU, in RAID 1

Both servers have a 1 Gbit/s port.

The tests were run using a server in the same rack as the client, with the same specs as the tested one.

Only the app we were testing was running at the time, with a Cloudron memory limit of 1 GB, which means half is RAM and half is swap.

CPU shares were left at the default of 50%.

How we test

Remember: this is a preliminary test, not completely validated. We need several confirmation runs before claiming that these are final scores.

We rebooted the server, then stopped all the apps.

We checked with htop whether anything was still running on the server; if it was all clear, we started testing.

We built 2 pages using the Divi builder, the most common builder along with Elementor.

They have inline CSS and JS, and we didn't use any plugin to optimize the content; we only used the plugins needed to enable cache control from WP.

Both pages have various CSS files, images and SVGs.

For this test all images were the originals; we will test our automatic image optimization script later.

We executed 8 tests for every app:

- 5, 20, 50 and 100 concurrent sessions, for a total of 500 sessions each

- For both pages, plus some images and CSS in the page, not all the content
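
As a rough illustration of the run plan above, the sketch below builds ApacheBench (`ab`) command lines for each page and concurrency level. `ab` was suggested earlier in the thread, but the post does not say which tool produced these numbers, so treat the tool choice as an assumption; the URLs are hypothetical placeholders.

```python
import shlex

CONCURRENCY_LEVELS = [5, 20, 50, 100]  # from the post
TOTAL_REQUESTS = 500                   # from the post


def ab_commands(urls):
    """Return one ab command line per (URL, concurrency level) pair."""
    commands = []
    for url in urls:
        for level in CONCURRENCY_LEVELS:
            # -n: total number of requests, -c: concurrent requests
            args = ["ab", "-n", str(TOTAL_REQUESTS), "-c", str(level), url]
            commands.append(shlex.join(args))
    return commands


if __name__ == "__main__":
    # Hypothetical URLs standing in for the two test pages.
    pages = ["https://example.com/page-1/", "https://example.com/page-2/"]
    for command in ab_commands(pages):
        print(command)
```

With 2 pages and 4 concurrency levels this yields the 8 runs per app mentioned above.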

Results

What we see is that the 2 images with server-side caching get a performance boost. They use different techniques. Redis full-page cache saves a copy of the output in RAM (similar to what the Nginx proxy cache does), using the standard 300 MB container provided by Cloudron (we get better performance on Epyc, which we attribute to the low-latency memory it supports).

FastCGI saves part of the page and the pre-processed information on the NVMe storage and some in RAM (we get 3% better performance on the Epyc server, probably because the storage is connected directly to the CPU).

What we didn't expect was the terrible results (compared to the other Nginx setups) of the Cache Enabler image. This is probably due to a check and the redirect of the request to an HTML page saved on the NVMe storage.

Why is Redis Full Page Cache the fastest for now?

Because it uses a direct connection to Redis and everything is in the cache, Nginx doesn't need to interact with the file system. As you probably know, Redis is one of the fastest "DBs" available; it is not limited by the I/O of your disk, the DRAM of your SSD, the chipset or the RAID card, only by your RAM and the CPU-to-RAM connection.

Especially with newer AMD CPUs that provide higher RAM frequency and bandwidth, Redis Full Page Cache proved to be the fastest solution that we can provide on Cloudron.

Remember: this is a preliminary test, not completely validated. We need several confirmation runs before claiming that these are final scores.

-

@moocloud_matt Just want to make sure I understand... since the current WordPress Developer package uses Apache and Redis caching already, is the only difference with your testing for Redis involvement just that it's running behind Nginx instead of Apache? Just trying to understand the comparison between what we already have vs what you're proposing with Redis cache (since it seems the fastest and most similar to what we've already got in terms of Redis cache being used).

-

@MooCloud_Matt Nice work! I went through similar comparisons a few years ago with similar results.

Ultimately, we settled on FastCGI as relatively simpler and low to no maintenance needs when we're deploying updates throughout each day that need cache-clearing every time anyway.

Do you plan on having uncached benchmark instance comparisons?

For anyone else following and unfamiliar; WP Admin, Woocommerce, logged-in users, and any other dynamic content plugins can't use full-page caching.

The simplest page to test for uncached server config performance is `/my-account/` when you have Woocommerce installed and `/wp-login.php` if you don't.

If anyone wants to test any page's uncached performance, just add a cache-busting random GET var to the end of the URL, e.g. `?v=762138`, and just change the number each time so it's always served from PHP.

-
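
The cache-busting trick from the post above can be sketched as a small helper. The parameter name `v` follows the post; the function name, the six-digit range and the example URLs are illustrative assumptions.

```python
import random
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def cache_bust(url, param="v"):
    """Return url with a fresh random value for the cache-busting GET var."""
    parts = urlsplit(url)
    # Keep existing query parameters, dropping any previous cache-buster.
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key != param
    ]
    query.append((param, str(random.randint(100000, 999999))))
    return urlunsplit(parts._replace(query=urlencode(query)))


if __name__ == "__main__":
    # Hypothetical site; /my-account/ is the Woocommerce page named in the post.
    print(cache_bust("https://example.com/my-account/"))
    print(cache_bust("https://example.com/?p=1&v=762138"))
```

Each call produces a new value, so a page cache keyed on the full URL will normally miss and the request should reach PHP, provided the cache does not ignore query strings.

-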

@d19dotca said in Apache, OLS and Nginx-Custom Benchmarks:

Apache and Redis caching

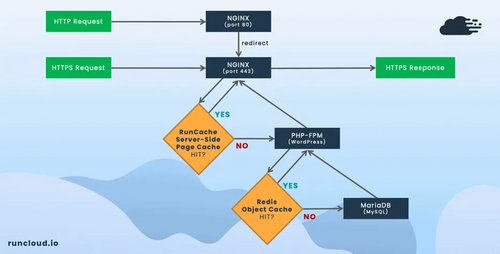

Sorry i was not so clear on this.

Cloudron now don't provide any webserver cache (for what i know), what you have now is a DB cache, it sit between PHP and the DB.What you see as "Apache" in the test is the standard cloudron app with "REDIS OBJECT CACHING", during the test in all app testes that lvl of cache was active, but in the Redis Full-Page Cache we use a integration between Nginx and Redis directly in addition to the Object Cache, so that PHP will never get involve if the page was already been serve to a similar client.

i will use this pics by runcloud to show you:

What we build is similar to what runcloud call RunCache, redis in this case is used to store HTML, CSS and JS content and not query to the DB.
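
To make the difference between the two cache levels concrete, here is a toy model in plain Python. It is only a sketch: dictionaries stand in for Redis, the `Backend` and `WebServer` classes are hypothetical, and nothing here reflects the actual Nginx or Redis configuration used in the images.

```python
class Backend:
    """Stands in for PHP plus the database; counts how often each is hit."""

    def __init__(self):
        self.php_runs = 0
        self.db_queries = 0
        self.object_cache = {}  # models the Redis object cache (between PHP and DB)

    def query(self, sql):
        if sql not in self.object_cache:
            self.db_queries += 1
            self.object_cache[sql] = f"rows for {sql}"
        return self.object_cache[sql]

    def render(self, url):
        self.php_runs += 1  # PHP still runs even when the query is cached
        rows = self.query(f"SELECT * FROM posts WHERE url = '{url}'")
        return f"<html>{rows}</html>"


class WebServer:
    """Stands in for the web server, optionally with a full-page cache."""

    def __init__(self, backend, full_page_cache=False):
        self.backend = backend
        self.page_cache = {} if full_page_cache else None

    def get(self, url):
        if self.page_cache is not None and url in self.page_cache:
            return self.page_cache[url]  # served without touching PHP
        html = self.backend.render(url)
        if self.page_cache is not None:
            self.page_cache[url] = html
        return html


if __name__ == "__main__":
    for fpc in (False, True):
        backend = Backend()
        server = WebServer(backend, full_page_cache=fpc)
        for _ in range(3):
            server.get("/hello/")
        print(f"full_page_cache={fpc}: php_runs={backend.php_runs}, "
              f"db_queries={backend.db_queries}")
```

In this model the object cache alone saves database queries but PHP still runs on every request, while the full-page cache answers repeat requests before PHP is involved at all.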

-

@marcusquinn said in Apache, OLS and Nginx-Custom Benchmarks:

Nice work!

Ty!

@marcusquinn said in Apache, OLS and Nginx-Custom Benchmarks:

uncached benchmark

Yes, we have already done it. We see a 20% gain on the complete page (with all the CSS, JS, pictures, ...) thanks to Nginx's better resource management for static content. We will provide more details later this week.

But there is no big improvement in pure PHP performance between php-fpm (Nginx) and FastCGI (Apache); Apache is pretty much the best for PHP-only content.

@marcusquinn said in Apache, OLS and Nginx-Custom Benchmarks:

Woocommerce

WC will be supported only on the Redis Full-Page Cache (Redis FPC) image for now. We plan to support it on FastCGI early next year, once tmpfs is supported in Cloudron apps.

Btw, on Redis FPC we will support partial caching soon, so wp-admin will be cached too, but partially.

-

@moocloud_matt Cool, happy to help with some A/B testing on our WP stack which is rather bulky but quite well optimised on the app level. Eg, this site is running on Cloudron now:

Something like 200 plugins, including Woocommerce.

We use WP Super Cache for fragment caching, and happy to recommend it. Although, caching is the number one cause of issues with relatively new WP devs, so I always recommend optimising the app without any caching first, especially slow queries and php warnings before getting involved with caching.

The blessing and curse of WP plugins eh, anyone can publish them

-

@MooCloud_Matt This is huge. Those gains are insane with not that difficult of an infrastructure change.

Curious, did you ever test an OLS implementation with their official OLS Caching plugin? The subject implied you were going to but maybe I misinterpreted the acronym (Open Lightspeed?).

-

@lonk

We paused OLS development (it is in beta and stable) to focus on the Nginx setup.

Our focus now is to open a public beta soon, then publish those apps on the Cloudron store.

Early next year we want to make some images intercompatible, so you can switch from FastCGI + PageSpeed to FastCGI only, or from Redis FPC to FastCGI, ...

But we don't know yet what we will be able to do; for now we are focusing on preparing the open beta.

-

I would love to be part of the Open Beta since I don't use my Cloudron for anything production, just dev.

-

Update on WAF:

We have disabled the WAF features we had planned for now, due to unstable TTFB timings.

Maybe we will add a CDN to host the blacklists and the information repository that the WAF module needs to work.

Right now it takes from 0.9 to 2 seconds to check the IP/browser, and our objective is to have almost all normal page loads complete in 3 seconds or less.

Update on Redis full-page cache (FPC)

We are working on the partial page cache, or sr-cache module.

But we are not sure whether this will improve performance even more.

It is just better management of the cache.

WordPress nginx helper

We are using the nginx-helper plugin to let you clear your cache from WordPress, but because of how we are implementing things, we will create a fork to better suit our Nginx stack.

PrestaShop php-fpm + cache

We are working on PrestaShop for Cloudron too.

This is a test to see how flexible our FPM + FastCGI cache setup is (no Redis cache is possible, due to the license of the plugin needed to support it).
